By the NStarX Engineering Intelligence Team

There is a particular kind of enthusiasm that sweeps through engineering organizations when a new productivity tool promises to double output overnight. We have seen it with low-code platforms, offshore outsourcing waves, and microservices mandates. Each wave delivered real benefits — and each one left behind a trail of architectural decisions that took years to unwind.

AI-assisted coding is no different in structure, even if it is radically different in capability. Generative AI coding tools — GitHub Copilot, Amazon CodeWhisperer, Cursor, Tabnine, and increasingly agentic coding systems like Devin — are genuinely transformative. They accelerate prototyping, reduce boilerplate burden, and help engineers move from idea to working code faster than any prior tool in the history of software development.

But inside enterprise engineering organizations — the kind building financial infrastructure, clinical decision systems, real-time media platforms, and AI-native SaaS products — velocity is never the only variable that matters. Correctness, maintainability, security posture, architectural coherence, and long-term operational cost are equally in play. And it is precisely in these dimensions that AI-assisted coding reveals its hidden costs.

This article is not a critique of AI coding tools. It is a map of the terrain that executives and engineering leaders must understand before deploying these tools at enterprise scale. We draw on our experience building enterprise AI platforms, agentic systems, LLMOps pipelines, and AI governance frameworks to offer a practitioner’s perspective on what the productivity numbers do not show.

The Promise: Why AI Coding Assistants Are Being Adopted at Scale

It would be intellectually dishonest to discuss the risks of AI coding tools without first acknowledging the genuine, measurable value they deliver. The adoption of GitHub Copilot reached over 1.8 million paid subscribers by early 2024. According to a McKinsey report on developer productivity, organizations using AI coding assistants reported task completion improvements ranging from 20 to 55 percent depending on the type of work involved. These numbers represent real engineering hours and real business value.

The efficiency gains are most visible in specific categories of work. For boilerplate-heavy tasks — CRUD API scaffolding, test fixture generation, database migration scripts, configuration templates, CI/CD pipeline definitions — AI tools can compress hours of low-value work into minutes. A junior developer who once needed three days to stand up a new microservice with proper logging, error handling, and unit test scaffolding can now accomplish the same task in an afternoon. That is not a marginal improvement; it is a structural shift in how engineering capacity is allocated.

Internal tooling is another category where AI-assisted development has proven particularly effective. Building internal dashboards, administrative consoles, data export tools, and monitoring utilities — the kind of work that consistently gets deprioritized because it competes with product roadmap features — can now be delivered faster and at lower cost. Engineering teams that previously struggled to support non-technical stakeholders with basic automation can now say yes to requests they would have previously deferred.

API integration work, historically a significant source of friction in enterprise development, has also benefited considerably. Generating client SDK wrappers, handling authentication flows, parsing response schemas, and writing retry logic are tasks that AI models handle competently for most major API providers. The combination of pattern recognition and code generation means that connecting to a payment gateway, a CRM, or a healthcare data exchange can happen in a fraction of the time it once required.

Executives are particularly drawn to the democratization narrative. The idea that AI coding tools reduce the dependency on specialized senior engineers for routine tasks — freeing those engineers for architectural and strategic work — is genuinely compelling. There is also a legitimate case that AI tools accelerate the onboarding of new developers, giving them a context-aware pair programmer that can explain unfamiliar codebases, suggest idiomatic patterns, and catch syntactic errors in real time.

The promise is real. The question is what the fine print says.

The Productivity Paradox of AI-Assisted Development

Faster code generation is not the same as better engineering productivity. This distinction is critical, and it is one that gets lost in conversations that focus exclusively on velocity metrics. Engineering productivity in enterprise systems is not measured by lines of code written per hour. It is measured by working software delivered, maintained, and evolved over time without compounding operational risk.

The productivity paradox of AI-assisted development emerges from a fundamental shift in where engineering effort is spent. Before AI coding tools, engineers spent significant time writing code. With AI coding tools, they spend less time writing and significantly more time reading, validating, debugging, and integrating code that they did not fully author. This is not inherently bad, but it is a different kind of cognitive work — and in many cases, it is more demanding, not less.

Consider the debugging problem. AI-generated code often compiles and passes basic tests while containing subtle logical errors, hallucinated API calls, or incorrect assumptions about external system behavior. A developer using an AI assistant to generate a data pipeline connecting an enterprise ERP to a downstream analytics platform may receive syntactically correct code that references an API endpoint that does not exist, applies incorrect pagination logic, or mishandles null values in ways that only manifest under production load. Catching these issues requires deeper knowledge of the external systems than the code itself reveals — knowledge that AI cannot always possess and developers may not always exercise.

The code review burden is a related issue that engineering managers are beginning to feel acutely. When AI-generated code enters a review queue, reviewers cannot assume the author has deeply reasoned through every decision. They must approach the review with heightened skepticism, checking not just for style and obvious errors but for architectural coherence, security assumptions, and behavioral correctness. In teams that have rapidly adopted AI coding tools without adjusting review processes, this has led to review bottlenecks and, more dangerously, review fatigue — a state where the volume of code overwhelms the capacity for careful scrutiny.

Integration challenges compound the problem at the system level. AI tools are generally effective at generating code that works in isolation but are frequently poor at reasoning about how that code will behave in the context of an existing enterprise architecture. They do not know about the proprietary message broker your platform uses, the specific retry semantics your orchestration layer expects, or the authentication token lifecycle enforced by your identity provider. The gap between generated code and integrated, production-ready code is often larger than it appears on first review.

Perhaps the most insidious form of hidden cost is technical debt generated by AI tools operating at speed. When code generation happens faster than architectural review, systems accumulate inconsistencies — duplicate abstractions, competing patterns for the same problem, modules with overlapping responsibilities. This is not a failure of AI; it is a structural consequence of velocity outpacing governance.

Hidden Cost #1: AI-Generated Technical Debt

Technical debt is not a new problem in enterprise software engineering. But AI coding tools have introduced a qualitatively different form of debt — one that accumulates faster, is less visible, and is harder to attribute than traditional handcrafted debt.

The mechanism is straightforward. When developers use AI assistants to generate code, they are implicitly accepting suggestions that are statistically plausible but not necessarily architecturally coherent with the system they are building. AI models trained on public code repositories have absorbed enormous quantities of patterns, anti-patterns, outdated idioms, and context-specific conventions that may be appropriate in some settings but wrong in others. When applied at high velocity across a codebase, these suggestions can create what engineers call architecture drift — a gradual divergence from the intended system design toward a fragmented collection of locally reasonable but globally inconsistent implementations.

A concrete example illustrates the pattern. Imagine an enterprise platform team building a suite of microservices for a healthcare data integration product. The team adopts AI-assisted development and, over three months, ships eight new services faster than projected. Each developer generates their service code with AI assistance, working independently to deliver their sprint commitments.

At first glance, the outcome looks like a success. Services are delivered on time. Tests pass. Demos work. But at the three-month mark, the platform team begins to notice problems. Three of the eight services have implemented their own bespoke retry logic with different exponential backoff parameters. Four use different logging libraries. Two have duplicated a data transformation utility that should be shared. The error response schemas are inconsistent across services in ways that complicate the API gateway configuration. None of this was deliberate; it emerged from AI-generated suggestions that were locally reasonable but globally uncoordinated.
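The divergent retry behavior in this example is the kind of drift a single shared helper can prevent. The following is a minimal sketch, not the platform team's actual code: the function names, the default parameters, and the choice of "full jitter" backoff are all assumptions made for illustration.

```python
from __future__ import annotations

import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 0.5, factor: float = 2.0,
                   cap: float = 30.0) -> list[float]:
    """Upper bound in seconds on the wait before each retry, capped at `cap`."""
    return [min(cap, base * factor ** i) for i in range(retries)]


def call_with_retry(
    fn: Callable[[], T],
    attempts: int = 4,
    retry_on: tuple[type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `fn`, retrying on the listed exceptions with jittered exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    delays = backoff_delays(attempts - 1)
    for attempt in range(attempts):
        try:
            return fn()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the original error
            # "Full jitter": wait a random time up to the exponential bound.
            sleep(random.uniform(0, delays[attempt]))
    raise AssertionError("unreachable")
```

If every service imports one helper like this, the backoff parameters live in one place and can be tuned once rather than reconciled across eight implementations.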

The long-term cost of this pattern is substantial. Onboarding new engineers becomes harder because the codebase does not behave consistently. Debugging distributed failures becomes more complex because logging and tracing are not uniform. Refactoring one service requires understanding four different abstraction patterns for what should be a single concept. What appeared as a velocity gain begins to manifest as an operational tax.

The technical debt problem is further compounded by undocumented dependencies. AI-generated code frequently introduces third-party libraries to solve specific problems — sometimes well-chosen, sometimes not. Over time, a codebase can accumulate a dependency graph that no one fully understands, with libraries at different versions, some unmaintained, some with known vulnerabilities, and some with licensing implications that only surface during a compliance audit.

Addressing AI-generated technical debt requires proactive architecture governance, mandatory code standards enforcement, and regular codebase audits. These investments are easy to defer, but the cost of remediation grows the longer they are postponed.

Hidden Cost #2: Security and Compliance Risks

The security implications of AI-assisted coding deserve serious attention from enterprise leaders, particularly in regulated industries where the consequences of a vulnerability extend well beyond a service outage. AI models generate code based on statistical patterns, not security analysis. They do not inherently understand the threat model of your specific application, the regulatory requirements of your industry, or the sensitivity of the data flowing through your systems.

Several categories of security risk are elevated in AI-assisted development environments. The first is insecure code patterns. AI models frequently suggest code that follows common patterns but skips security best practices: hardcoding credentials, using deprecated cryptographic functions, building SQL queries without proper parameterization, or implementing incomplete input validation. Trained security reviewers catch these patterns routinely in manual development, but they can slip through when review processes have not been adapted for AI-generated code volume.
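To make the SQL parameterization point concrete, the sketch below contrasts string-built and parameterized queries using Python's standard `sqlite3` module. The `users` table and both function names are hypothetical and exist only for this illustration.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Anti-pattern: user input is interpolated directly into the SQL text.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # Parameterized: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# A classic injection payload: it rewrites the WHERE clause in the unsafe version.
payload = "x' OR '1'='1"
leaked_rows = find_user_unsafe(conn, payload)  # every row in the table
safe_rows = find_user_safe(conn, payload)      # no rows: no user has that name
```

Both functions look equally plausible in a review queue, which is why this class of issue is easy to miss at high code volume.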

Dependency vulnerabilities are a second concern. When AI tools suggest third-party libraries to solve specific problems, they may recommend packages with known CVEs, abandoned packages with no active maintenance, or packages that have been compromised in supply chain attacks. In a high-velocity development environment, developers may accept these suggestions without the due diligence that would apply to a manually selected dependency.

Data leakage risk is particularly acute in healthcare and financial services. AI-generated code for data processing pipelines may handle sensitive data without the proper masking, encryption, or access controls required by HIPAA, PCI-DSS, or GDPR. An AI assistant helping a developer build a patient data export service may generate code that logs PHI fields in debug output, serializes sensitive data to a non-encrypted cache, or transmits data over an insecure channel — not because the AI is careless, but because it does not have the contextual understanding of data classification that a trained engineer in a regulated environment would apply.
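One defensive layer against the debug-logging scenario is a log filter that masks known sensitive fields before a record is written. The sketch below uses Python's standard `logging` module. The field names in `PHI_KEYS` and the `key=value` message convention are assumptions, and a filter like this is a last line of defense rather than a substitute for proper data classification.

```python
import logging
import re

# Hypothetical field names; a real list would come from the data classification policy.
PHI_KEYS = ("ssn", "mrn", "dob")
_PHI_PATTERN = re.compile(r"\b(" + "|".join(PHI_KEYS) + r")=(\S+)", re.IGNORECASE)


def redact(text: str) -> str:
    """Replace the value of any key=value pair whose key is a known PHI field."""
    return _PHI_PATTERN.sub(r"\1=[REDACTED]", text)


class RedactPHIFilter(logging.Filter):
    """Logging filter that masks PHI values in the fully formatted message."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format first so values passed as %-style arguments are also covered.
        record.msg = redact(record.getMessage())
        record.args = ()
        return True  # keep the record, now redacted
```

Attaching the filter to a handler with `handler.addFilter(RedactPHIFilter())` applies it to every record that handler emits. It only catches values logged in the assumed `key=value` form, so structured payloads would need their own handling.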

The rise of AI-powered applications introduces an additional attack surface that requires new security thinking: prompt injection. In systems where user input flows into AI model prompts — a growing category that includes enterprise chatbots, intelligent search interfaces, and AI-assisted workflow tools — AI-generated code may not implement the input sanitization and prompt boundary controls necessary to prevent malicious users from manipulating model behavior. This is a category of vulnerability for which most enterprise security scanning tools have limited coverage.

For media technology companies, the risk profile extends to content manipulation and rights management systems where AI-generated code may inadvertently create pathways for unauthorized content access or distribution. For fintech platforms, AI-generated trading or transaction logic that contains subtle errors can have immediate and material financial consequences. The security governance model must be calibrated to the specific risk profile of each environment.

Hidden Cost #3: The Erosion of Engineering Depth

Perhaps the most strategically significant hidden cost of AI-assisted development — and the one least discussed in vendor-sponsored productivity studies — is the potential erosion of deep engineering expertise within development teams.

Engineering depth is not measured by the ability to write code quickly. It is measured by the capacity to reason about systems under uncertainty, to diagnose non-obvious failures, to design architectures that remain coherent under evolution, and to make principled tradeoffs between competing constraints. These capabilities are built through deliberate practice — through the experience of having written algorithms, designed data structures, debugged distributed systems failures, and architected solutions from first principles.

When developers consistently rely on AI to generate code rather than writing it themselves, they are engaging in a fundamentally different cognitive activity. They are selecting, validating, and integrating code rather than constructing it. This is not without value, but it develops a different and shallower set of skills. Over time, in teams where AI-assisted development becomes the default mode of operation, engineering leadership may find that the bench of engineers capable of deep architectural work has thinned — not through attrition, but through underuse.

There is an analogy to navigation. Before GPS, drivers built a mental model of the geography around them. They understood road networks, developed spatial reasoning, and could navigate in the absence of technology. After a decade of GPS dependence, many drivers have lost this capability. The system works fine until it does not — until the signal fails in a critical moment and the underlying skill is no longer there.

For engineering organizations, the equivalent failure mode is the moment when a production system exhibits behavior that AI tools cannot explain, when an architectural decision must be made without a clear precedent in training data, or when a novel security vulnerability requires understanding the underlying cryptographic or network protocol at a level below the abstraction the AI operates at. At these moments, the depth of the engineering team is what determines outcomes.

Engineering leadership must therefore think carefully about how AI tools are integrated into talent development pathways. Using AI assistants as a complement to deep engineering education is valuable. Using them as a substitute produces an engineering organization that is fast but brittle — capable of high-velocity delivery in familiar territory but poorly equipped for the unexpected.

Hidden Cost #4: AI-Induced System Complexity

Complexity is the primary enemy of reliable software systems. Complexity increases the cognitive load required to understand system behavior, makes debugging harder, increases the blast radius of changes, and raises the barrier to entry for new team members. Traditional software development processes create complexity organically over time. AI-assisted development can create it at industrial scale, in compressed timeframes, before governance mechanisms have a chance to intervene.

The mechanism is code proliferation. When AI tools make code generation cheap, the natural response is to generate more code. Features that might previously have been deferred pending prioritization are now feasible because the implementation cost seems low. Utility functions get generated rather than discovered in the existing library. New modules get created rather than extending existing ones. The result is a codebase that grows faster than the team’s ability to reason about it.

Microservice architectures are particularly vulnerable to this pattern. The microservices model was designed to reduce complexity by decomposing systems into independently deployable units with clear boundaries. But AI-assisted development can invert this benefit by generating microservices that are too fine-grained, poorly bounded, and inconsistently designed. The result turns a manageable set of services into a distributed monolith that preserves all the operational costs of distribution while forfeiting the benefits of modularity.

We have observed this pattern in fast-scaling AI application companies that adopted AI coding assistants early. A startup that began with a clean, well-architected platform of eight services found itself, eighteen months later, operating forty-three services — many generated at speed during growth sprints, several with overlapping responsibilities, a significant number with undocumented behavioral dependencies on other services. The operational overhead of this complexity — in monitoring, deployment coordination, failure diagnosis, and onboarding — had grown to consume a material fraction of engineering capacity.

The undocumented module problem is a related symptom. AI-generated code is often underdocumented because the development workflow does not naturally include a pause for documentation. A developer accepts a generated function, tests that it works, commits it, and moves on. The function works correctly but exists without explanation of its design assumptions, its behavioral edge cases, or its intended lifecycle. Multiply this by hundreds of AI-assisted contributions and the result is a codebase that is functionally operational but epistemically opaque.

Reversing AI-induced complexity requires the same tools as reversing conventional complexity — disciplined refactoring, architectural review, and service consolidation — but at a scale that can be difficult to staff when the primary engineering investment is in new feature delivery.

Why Enterprises Need AI Governance for Software Development

The engineering risks described in the preceding sections are not arguments against AI coding tools. They are arguments for governance. The distinction matters. A governance framework for AI-assisted development is not a set of restrictions that reduce the velocity benefits of AI tools. It is the operational infrastructure that makes those benefits sustainable over time.

The first element of an effective governance framework is an AI coding policy — a clear organizational statement of where AI tools are permitted, what categories of code require human authorship or enhanced review, and what data handling obligations apply when code is submitted to AI services. This policy must be technically informed, practically implementable, and regularly reviewed as tool capabilities evolve.

Code validation pipelines represent the technical enforcement layer. Modern CI/CD systems can be extended with AI-specific validation steps: static analysis tuned for AI-generated code patterns, dependency scanning with license and vulnerability checks, architecture conformance tests that verify new code adheres to established patterns, and security scanning configured for the specific vulnerability categories elevated by AI-assisted development. These are not new categories of tooling; they are existing categories applied with heightened configuration and priority.
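As an illustration of an architecture conformance test, the sketch below parses a Python source file and flags imports that bypass the platform's shared libraries. The rule table is hypothetical: `platform_logging` and `platform_retry` stand in for whatever internal modules an organization has standardized on, and the banned names are examples only.

```python
from __future__ import annotations

import ast

# Hypothetical policy: banned third-party module -> guidance shown to the developer.
BANNED_IMPORTS = {
    "loguru": "use platform_logging",
    "backoff": "use platform_retry",
}


def conformance_violations(source: str, filename: str = "<unknown>") -> list[str]:
    """Return one message per import statement that breaks the policy."""
    violations = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            modules = [node.module]
        else:
            continue
        for module in modules:
            top = module.split(".")[0]  # "pkg.sub" is judged by "pkg"
            if top in BANNED_IMPORTS:
                violations.append(
                    f"{filename}:{node.lineno}: '{top}' is not allowed; "
                    f"{BANNED_IMPORTS[top]}"
                )
    return violations
```

Run as a CI step over changed files, a non-empty result can fail the build, which turns a written convention into an enforced one.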

AI testing frameworks deserve particular attention. One of the structural risks of AI-assisted development is that tests and the code they test may be generated by the same model, with the same blind spots. A test generated for an AI-generated function may pass consistently while failing to cover the behavioral edge cases that matter. Enterprise engineering organizations must establish testing standards that go beyond code coverage metrics to validate behavioral correctness against documented requirements, and where appropriate, use adversarial testing approaches that probe for the specific failure modes of AI-generated logic.

LLMOps integration is increasingly relevant as AI coding tools become more agentic. When AI systems are not just suggesting code but executing it, committing changes, and coordinating across repositories, the governance model must extend to model usage tracking, prompt audit trails, and behavioral guardrails. Platform engineering teams at leading AI-native organizations are beginning to build internal platforms that mediate between developers and AI coding tools — providing governance, observability, and cost management in a unified layer.
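A prompt audit trail can be as simple as an append-only log in which each record carries a hash of the previous one, so later tampering is detectable. The following is a sketch under assumptions: the record fields, the decision to store a hash of the prompt rather than the prompt text, and the function names are all illustrative, not a description of any particular product.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(payload: dict) -> str:
    """Stable SHA-256 over a record's fields."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(chain: list[dict], developer: str, model: str,
                  prompt: str, timestamp: str) -> dict:
    """Append an audit record that is linked to the previous record by hash."""
    payload = {
        "developer": developer,
        "model": model,
        # Store a hash so the trail does not itself retain sensitive prompt text.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "timestamp": timestamp,
        "prev_hash": chain[-1]["record_hash"] if chain else GENESIS,
    }
    record = {**payload, "record_hash": _digest(payload)}
    chain.append(record)
    return record


def chain_is_intact(chain: list[dict]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev = GENESIS
    for record in chain:
        payload = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev or record["record_hash"] != _digest(payload):
            return False
        prev = record["record_hash"]
    return True
```

In practice the chain would live in durable storage behind the mediating platform layer; the in-memory list here only demonstrates the linking idea.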

The organizational dimension of governance is equally important. Someone must own the AI coding governance function — either as a dedicated role within platform engineering or as a responsibility distributed across a senior engineering council. Without clear ownership, governance frameworks exist on paper but not in practice.

What We Are Seeing in Real Enterprise AI Engineering Projects

Across the enterprise AI engineering engagements we support at NStarX — spanning healthcare, financial services, media technology, and ISV platforms — we are observing a consistent set of patterns in how organizations are grappling with the realities of AI-assisted development at scale.

AI-assisted refactoring is one of the most promising practical applications we see. Organizations with legacy codebases — systems with hundreds of thousands or millions of lines of code, written across multiple technology generations, with documentation that is either incomplete or outdated — are finding genuine value in using AI tools to accelerate code comprehension and refactoring. One financial services client is using AI assistants to accelerate a multi-year modernization of a trading platform with over two million lines of legacy Java and C++ code. The AI tools are not making architectural decisions; they are handling the translation of well-understood patterns into modern equivalents, under close engineering supervision, within a structured Strangler Fig migration framework.

Platform modernization projects are revealing the importance of architectural guardrails. In several engagements, we have seen AI-assisted development produce high-velocity early results followed by architectural fragmentation that required significant rework. The lesson consistently reinforced is that AI coding tools perform best within a tightly governed architectural context — when the patterns, interfaces, and conventions are pre-established and the AI’s role is to implement within defined boundaries, not to invent structural patterns.

AI-native software architectures are creating new demands on testing infrastructure. Systems that incorporate AI model calls in their business logic cannot be tested with conventional deterministic testing alone. The non-determinism of model outputs, the latency variability of model inference, and the potential for model behavior to shift across versions all require testing frameworks that go beyond pass/fail assertions. We are building and deploying AI testing frameworks for enterprise clients that combine behavioral contract testing, regression testing against golden outputs, adversarial input testing, and production monitoring with anomaly detection.
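A behavioral contract test checks the shape and bounds of a model's output instead of asserting exact text, which is what makes it usable against non-deterministic responses. The sketch below assumes a hypothetical classification feature that returns JSON with a `label` and a `confidence`; the label set and field names are invented for the example.

```python
from __future__ import annotations

import json

ALLOWED_LABELS = {"approve", "review", "reject"}  # hypothetical label set


def contract_violations(raw_output: str) -> list[str]:
    """Check a model response against the structural contract; empty list means pass."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]
    problems = []
    if data.get("label") not in ALLOWED_LABELS:
        problems.append("label outside allowed set")
    confidence = data.get("confidence")
    if (isinstance(confidence, bool)
            or not isinstance(confidence, (int, float))
            or not 0 <= confidence <= 1):
        problems.append("confidence missing or outside [0, 1]")
    return problems


def matches_golden(raw_output: str, golden: dict) -> bool:
    """Golden-output regression: compare only the field expected to stay stable."""
    return json.loads(raw_output).get("label") == golden["label"]
```

The contract check runs on every response, while the golden comparison runs on a fixed set of inputs whenever the model version or prompt changes. Free-text fields are deliberately left out of both.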

The DevOps-to-LLMOps evolution is happening faster than most organizations anticipated. The operational model for managing software that incorporates AI components is fundamentally different from conventional software operations. Model versions, context window management, prompt versioning, inference cost governance, and output quality monitoring are categories of operational concern that do not have direct analogues in traditional DevOps tooling. Organizations that are ahead of this curve are those that have treated LLMOps as a first-class engineering discipline rather than an afterthought.

Code quality governance is the area where we see the widest variance in enterprise maturity. Some organizations have proactively extended their quality frameworks to account for AI-generated code — adding AI-specific linting rules, adjusting review checklists, and implementing training for engineers on how to critically evaluate AI suggestions. Others are operating with quality processes designed for a world where code is written slowly and carefully by humans, and the gap between process intent and reality is growing with every sprint.

Best Practices for Using AI Coding Tools Responsibly

For engineering leaders who are committed to capturing the productivity benefits of AI coding tools while managing the risks described in this article, the following framework provides a practical starting point.

1. Establish Architectural Guardrails Before Scaling AI Adoption

Before deploying AI coding tools broadly, document the architectural patterns, interface contracts, and coding conventions that must be followed. These guardrails give AI tools the context they need to generate coherent code and give reviewers the criteria they need to evaluate it. Without this foundation, AI-assisted development accelerates entropy.

2. Implement AI-Aware Code Review Processes

Adjust review checklists and reviewer training to account for the specific failure modes of AI-generated code: hallucinated dependencies, missing error handling, inconsistent patterns, and under-specified edge cases. Consider implementing AI code review tiers, where AI-generated code receives enhanced scrutiny relative to human-authored code.
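Hallucinated dependencies are one failure mode that can be screened mechanically before a human reviewer looks at the change. The sketch below lists imports that do not resolve in the current Python environment, using the standard `ast` and `importlib` modules. The function name is an assumption, and a resolvable import is not proof that a package is trustworthy, so this complements dependency scanning rather than replacing it.

```python
from __future__ import annotations

import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be found."""
    top_level = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            top_level.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # Relative imports (level > 0) are skipped: they refer to local code.
            top_level.add(node.module.split(".")[0])
    missing = []
    for name in sorted(top_level):
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            found = False
        if not found:
            missing.append(name)
    return missing
```

A pre-review hook could run this over each changed file in an environment that has only the approved dependencies installed, and attach any hits to the review as a comment.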

3. Deploy Mandatory Validation Pipelines

Extend CI/CD pipelines with AI-specific validation: static analysis for anti-patterns common in AI-generated code, dependency vulnerability scanning, architecture conformance testing, and security scanning tuned for AI-elevated risk categories. Make pipeline passage a non-negotiable gate before merge.

4. Adopt Behavioral Testing Frameworks

Move beyond code coverage metrics to behavioral correctness validation. Implement property-based testing, adversarial input testing, and contract testing to verify that AI-generated code behaves correctly under the full range of conditions it will encounter in production.
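To show what a property-based test looks like, the sketch below checks a small pagination helper against properties that must hold for any input, rather than against a few hand-picked cases. It uses only the standard `random` module as a stand-in for a dedicated property-testing library. The `paginate` function and the chosen properties are assumptions made for the example.

```python
import random


def paginate(items: list, page_size: int) -> list:
    """Split `items` into consecutive pages of at most `page_size` elements."""
    if page_size < 1:
        raise ValueError("page_size must be at least 1")
    return [items[i:i + page_size] for i in range(0, len(items), page_size)]


def check_pagination_properties(trials: int = 200, seed: int = 0) -> int:
    """Assert the properties on randomly generated inputs; return trials run."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    for _ in range(trials):
        items = [rng.randint(-1000, 1000) for _ in range(rng.randint(0, 50))]
        page_size = rng.randint(1, 10)
        pages = paginate(items, page_size)
        # Property 1: nothing is lost, duplicated, or reordered.
        assert [x for page in pages for x in page] == items
        # Property 2: no page is empty or larger than the page size.
        assert all(1 <= len(page) <= page_size for page in pages)
        # Property 3: every page except possibly the last is full.
        assert all(len(page) == page_size for page in pages[:-1])
    return trials
```

An off-by-one error in the slicing would pass many example-based tests but would be very unlikely to survive two hundred random inputs, which is the practical argument for this style of test on generated code.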

5. Maintain Human Authorship for Critical Paths

Define categories of code where AI assistance is supplementary and human authorship is primary: security-critical logic, core architectural components, data handling for sensitive categories, and algorithmic implementations with material correctness requirements. For these areas, AI tools can assist but should not lead.
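A policy like this is easier to enforce when the critical categories are expressed as path patterns that tooling can check. The sketch below is one possible shape, with hypothetical directory names, that flags changed files falling under a critical path so they can be routed to enhanced review.

```python
from __future__ import annotations

from fnmatch import fnmatchcase

# Hypothetical patterns; real ones would mirror the repository layout.
CRITICAL_PATTERNS = (
    "src/auth/*",
    "src/crypto/*",
    "src/phi/*",
)


def requires_enhanced_review(changed_files: list[str]) -> list[str]:
    """Return the changed files that fall under a critical path pattern."""
    return [
        path for path in changed_files
        # fnmatch's "*" also matches "/", so nested directories are covered.
        if any(fnmatchcase(path, pattern) for pattern in CRITICAL_PATTERNS)
    ]
```

For example, a change touching `src/auth/tokens/jwt.py` and `docs/readme.md` would return only the first path.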

6. Automate Documentation Generation

Use AI tools to generate documentation for AI-generated code, and require that every accepted AI code contribution be accompanied by documentation that explains its purpose, assumptions, and behavioral boundaries. This practice partially compensates for the documentation deficit that high-velocity AI development creates.
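The documentation requirement can be backed by a simple automated check. The sketch below uses Python's standard `ast` module to list public functions and classes that have no docstring. Treating a leading underscore as "private" and the function name itself are assumptions for the example.

```python
from __future__ import annotations

import ast


def undocumented_definitions(source: str) -> list[str]:
    """List public functions and classes in `source` that lack a docstring."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            # Names starting with "_" are treated as private and skipped.
            if not node.name.startswith("_") and ast.get_docstring(node) is None:
                missing.append((node.lineno, node.name))
    return [f"{name} (line {lineno})" for lineno, name in sorted(missing)]
```

A check like this confirms only that documentation exists, not that it is accurate, so it works best alongside a review step that reads the generated explanation against the code.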

7. Invest in Engineering Depth

Counterbalance AI tool adoption with deliberate investment in deep engineering skills. Create protected time for engineers to work on problems without AI assistance, implement internal knowledge-sharing programs that build algorithmic and systems knowledge, and ensure that AI tools supplement rather than substitute for engineering judgment.

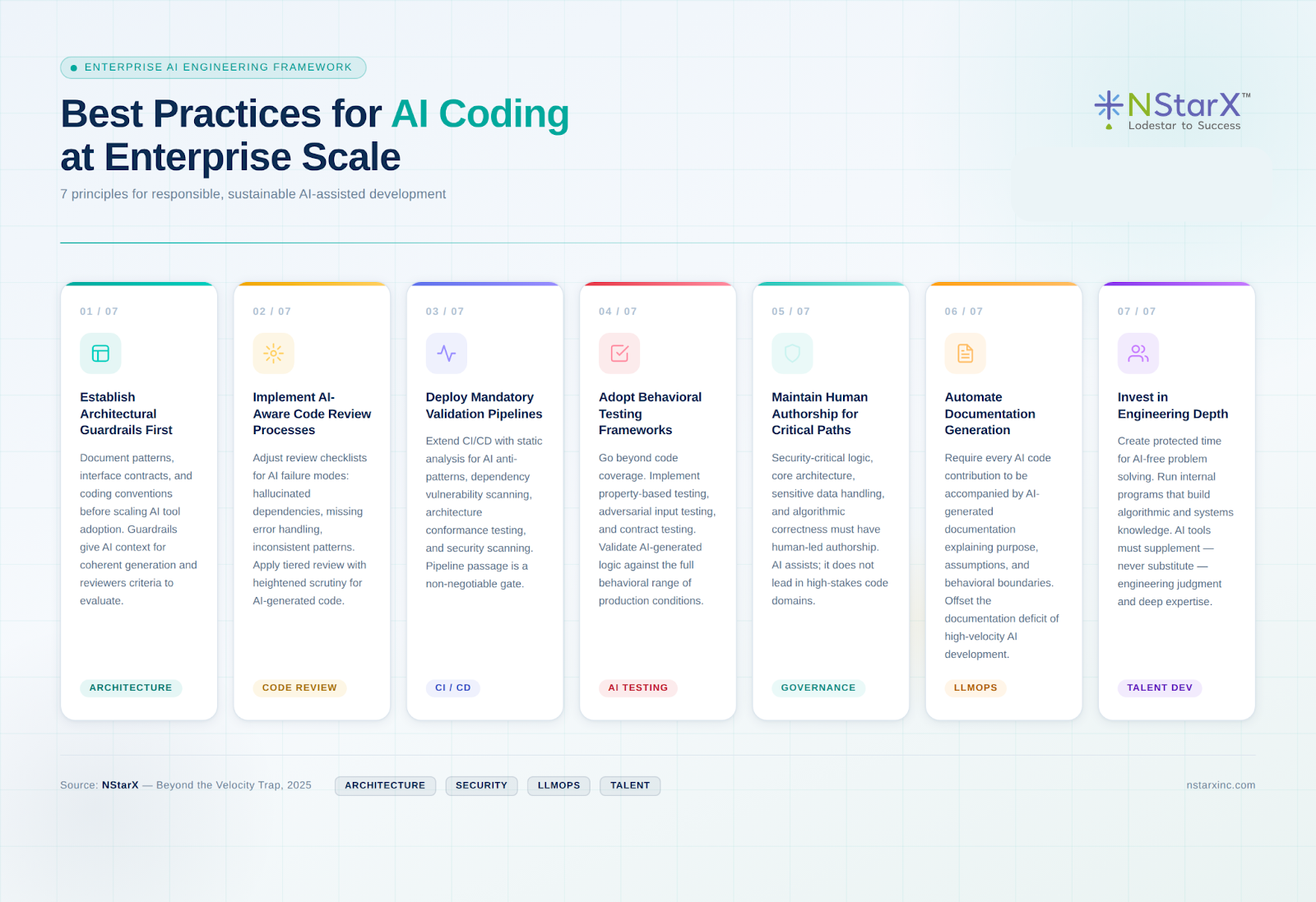

Below is the pictorial way to represent the best practices:

Figure 1: Best Practices for AI Coding at Enterprise Scale

The Future of AI-Augmented Software Engineering

The AI coding tools available today are, by any reasonable projection, early iterations. The trajectory is toward greater autonomy, broader context awareness, and more sophisticated reasoning about system behavior. GitHub Copilot Workspace, Devin, and the emerging category of agentic coding systems represent a near-term future in which AI moves from suggesting code to planning, implementing, testing, and refactoring features with limited human direction.

This evolution will amplify both the benefits and the risks described in this article. Agentic coding systems that can autonomously refactor large codebases will create new possibilities for technical debt management and platform modernization, tasks that are currently bottlenecked by human engineering capacity. But they will also introduce new risks: agentic systems that expand their own scope beyond the original task, make irreversible architectural changes, or introduce subtle behavioral regressions across large surface areas before humans have a chance to review.

AI-native development environments — IDEs that understand the full semantic context of an application, reason about distributed system behavior, and maintain architectural coherence across concurrent development streams — are an emerging category that will fundamentally reshape the engineering workflow. In this environment, the engineering organization’s competitive advantage will not be raw coding speed but the quality of the architectural vision, governance framework, and testing infrastructure it brings to AI-assisted development.

The engineering leaders who will navigate this transition successfully are those who treat AI coding tools not as a shortcut to the existing destination but as a new mode of travel requiring new maps, new navigation skills, and new safety equipment. The destination — reliable, secure, maintainable software that delivers business value — remains unchanged. The path to it is being rebuilt in real time.

Conclusion: Responsible Acceleration in the Age of AI-Native Engineering

The productivity case for AI coding tools in the enterprise is strong enough that the question is no longer whether to adopt them but how to adopt them wisely. The organizations that will extract the most durable value from AI-assisted development are not those that deploy these tools fastest but those that deploy them within a governance model sophisticated enough to preserve the engineering quality that enterprise software demands.

The hidden costs we have mapped in this article — AI-generated technical debt, elevated security and compliance risk, the erosion of engineering depth, and AI-induced system complexity — are not inevitable. They are the predictable consequences of velocity-first adoption without corresponding investment in validation, governance, and talent development. Organizations that invest in these countermeasures alongside their AI tool deployments will find that the tools deliver their promised benefits without the accompanying liabilities.

Engineering leadership in the AI era requires a different kind of judgment than the engineering leadership of previous decades. The challenge is no longer primarily about making development fast enough. It is about making AI-accelerated development coherent, safe, and sustainable at enterprise scale. That is a harder problem than it appears in vendor demos and analyst reports — and it is the problem that the best engineering organizations are now working seriously to solve.

The future of software engineering is AI-native. The engineering organizations that will define that future are the ones treating governance, architecture, and testing not as constraints on AI adoption but as the structural conditions that make AI adoption worth doing.

References

- Ross, J., Beath, C., & Mocker, M. (2023). “The Hidden Costs of Coding with Generative AI.” MIT Sloan Management Review. https://sloanreview.mit.edu/article/the-hidden-costs-of-coding-with-generative-ai/

- McKinsey & Company. (2023). “The Economic Potential of Generative AI: The Next Productivity Frontier.” https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/the-economic-potential-of-generative-ai

- GitHub. (2023). “GitHub Copilot: The World’s Most Widely Adopted AI Developer Tool.” GitHub Blog. https://github.blog/news-insights/research/survey-reveals-ais-impact-on-the-developer-experience/

- Dakhel, A. M., et al. (2023). “GitHub Copilot AI Pair Programmer: Asset or Liability?” Journal of Systems and Software. https://doi.org/10.1016/j.jss.2023.111734

- Pearce, H., et al. (2022). “Asleep at the Keyboard? Assessing the Security of GitHub Copilot’s Code Contributions.” IEEE Symposium on Security and Privacy. https://doi.org/10.1109/SP46214.2022.9833571

- Gartner. (2024). “Magic Quadrant for AI Code Assistants.” Gartner Research. https://www.gartner.com/en/documents/5223063

- Fowler, M. (2018). “Strangler Fig Application.” MartinFowler.com. https://martinfowler.com/bliki/StranglerFigApplication.html

- OWASP. (2023). “OWASP Top 10 for Large Language Model Applications.” https://owasp.org/www-project-top-10-for-large-language-model-applications/

- Wiggers, K. (2024). “Cognition Launches Devin, the First AI Software Engineer.” TechCrunch. https://techcrunch.com/2024/03/12/cognition-launches-devin-the-first-ai-software-engineer/

- Kleppmann, M. (2017). Designing Data-Intensive Applications. O’Reilly Media. https://dataintensive.net/

- National Institute of Standards and Technology (NIST). (2023). “AI Risk Management Framework (AI RMF 1.0).” https://www.nist.gov/system/files/documents/2023/01/26/AI%20RMF%201.0.pdf

- Google Cloud. (2024). “MLOps: Continuous Delivery and Automation Pipelines in Machine Learning.” https://cloud.google.com/architecture/mlops-continuous-delivery-and-automation-pipelines-in-machine-learning

- NStarX Inc. (2025). “Enterprise AI Platform Engineering: DLNP and LLMOps Reference Architecture.” https://nstarxinc.com
