A side-by-side analyst view of NVIDIA’s biggest strategic pivot — and what enterprise technology leaders must do before the window closes.

1. Introduction — The Big Shift

Every year, NVIDIA’s GTC conference functions less as a product launch and more as a state-of-the-union for the entire technology industry. Jensen Huang does not simply introduce chips. He tells us what computing will become — and in doing so, obliquely tells every CIO, CTO, and enterprise architect what they should have already started building.

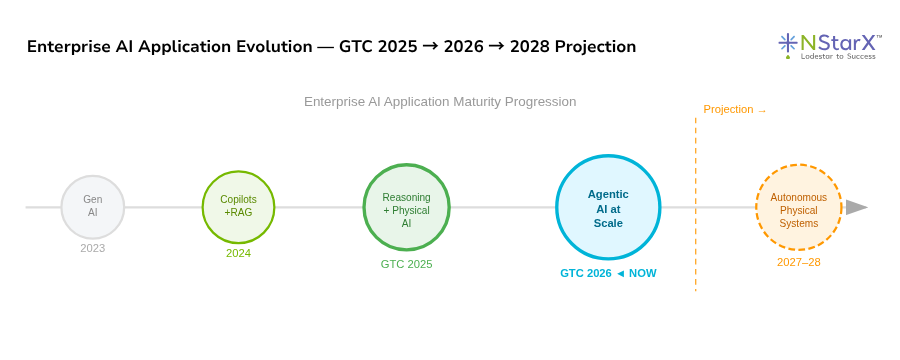

In March 2025, the dominant story was physical AI: the idea that intelligence was moving from digital screens into the physical world — into robots, factories, cars, and industrial systems. It was a compelling vision, but one still heavily dressed in the language of possibility. The infrastructure was being assembled. The models were being trained. The future was impressive, but distant.

In March 2026, the language changed fundamentally. The future arrived — and it arrived with a trillion-dollar price tag.

This is not hyperbole. At GTC 2026, Jensen Huang told a capacity crowd at the SAP Center in San Jose that NVIDIA now has high-confidence purchase orders and demand visibility of at least $1 trillion through 2027 for its Blackwell and Vera Rubin platforms. That figure is double what he projected at GTC 2025. More revealing than the number is what he said after it: “I believe that computing demand has increased by 1 million times in the last two years.”

The shift from 2025 to 2026 is the shift from building the track to running the train at full speed. This report breaks down exactly what changed, what it means for enterprise architecture, and what service companies like NStarX must do to stay relevant in a world where AI is no longer a pilot — it is industrial infrastructure.

- $1T — GTC 2026 demand visibility through 2027 (doubled from $500B in 2025)

- 1M× — Jensen’s estimate of compute demand growth in two years

- 28 — Cities where Uber deploys NVIDIA-powered robotaxis by 2028

- 20 — Years of CUDA — the moat behind NVIDIA’s platform dominance

2. What Changed Subtly

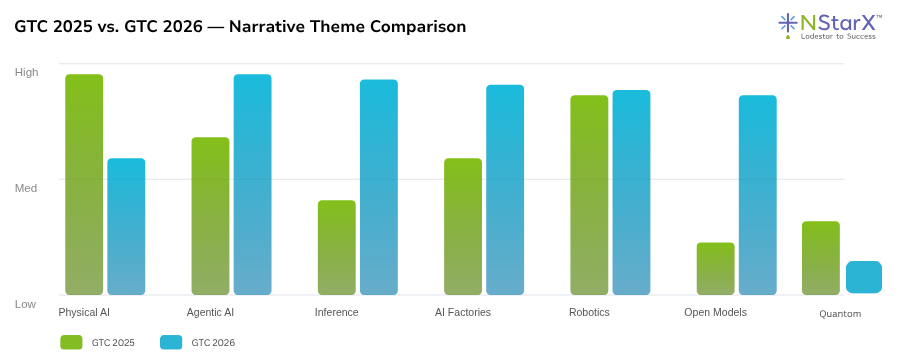

Some of the most important shifts between 2025 and 2026 were not in the hardware announcements or the headline numbers. They were in the vocabulary, the focus of the demos, and the implied audience of the keynote.

Language: From “Potential” to “Production”

At GTC 2025, Jensen spoke of the “$50 trillion physical AI opportunity.” That phrasing — an opportunity — signals a future promise. At GTC 2026, the language of opportunity largely disappeared. In its place came the language of execution: production, inflection point, enterprise-ready, now shipping. The word “inference” became the centerpiece of the entire architectural narrative. Huang declared 2026 “the inflection point of inference” — the moment when AI stops being about how many models you can train and starts being about how efficiently you can run them at scale, 24/7, for millions of users simultaneously.

The Target Buyer Shifted

GTC 2025 was still partly a developer conference — aimed at model builders, researchers, and forward-leaning cloud architects. GTC 2026 skewed toward enterprise operators and industrial buyers. The NemoClaw stack is explicitly designed to make OpenClaw agents “enterprise-ready,” with security, privacy, and control built in. The partnerships announced — Uber, Siemens, PTC, Procore, Jacobs, Dassault Systèmes — are not startups. They are Fortune 500 operators embedding AI into revenue-generating systems.

Cost Messaging Became Central

At GTC 2025, NVIDIA’s cost messaging was largely about the raw performance gains of Blackwell. At GTC 2026, Huang dedicated significant keynote time to tokens per watt as the defining metric of AI factories. The Groq LP30 chip acquisition — a $20 billion transaction for technology that specializes in ultra-low-latency token generation — is a direct response to enterprise customers who are now asking: “What does it cost me to run one million queries per day?” This is not a research question. It is an operations question. And NVIDIA is now explicitly in the business of answering it.
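That operations question can be roughed out with simple arithmetic. The sketch below is illustrative only and covers electricity cost alone, ignoring hardware amortization, cooling, and staffing. The function name and every input value (tokens per query, tokens per joule, electricity price) are hypothetical placeholders, not NVIDIA figures. It treats "tokens per watt" as tokens per second per watt, which is the same thing as tokens per joule.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def daily_energy_cost_usd(queries_per_day: float,
                          tokens_per_query: float,
                          tokens_per_joule: float,
                          usd_per_kwh: float) -> float:
    """Estimate the daily electricity cost of serving a query volume.

    All inputs are assumptions supplied by the caller; nothing here is
    a measured or vendor-published number.
    """
    if tokens_per_joule <= 0:
        raise ValueError("tokens_per_joule must be positive")
    total_tokens = queries_per_day * tokens_per_query
    energy_kwh = (total_tokens / tokens_per_joule) / JOULES_PER_KWH
    return energy_kwh * usd_per_kwh


# Hypothetical scenario: one million queries per day, 1,000 tokens each,
# 2 tokens per joule, electricity at $0.10 per kWh.
baseline = daily_energy_cost_usd(1_000_000, 1_000, 2.0, 0.10)
# Doubling energy efficiency halves the energy bill for the same workload.
improved = daily_energy_cost_usd(1_000_000, 1_000, 4.0, 0.10)
```

The point of the exercise is the shape of the model rather than the numbers: cost scales linearly with token volume and inversely with energy efficiency, which is why tokens per watt works as a planning metric.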

CUDA Turns 20 — And Jensen Makes It Personal

The 20th anniversary of CUDA was not just a nostalgic callback. It was a deliberate reminder of the depth of NVIDIA’s moat. No competitor — not AMD, not Google TPUs, not Intel Gaudi — has built a 20-year developer ecosystem. NVIDIA’s “CUDA flywheel” — where developers build on CUDA, which drives hardware adoption, which attracts more developers — is now so deeply embedded in every cloud and every major enterprise that it is functionally irreversible at any reasonable planning horizon. This was a message to hyperscalers, enterprise procurement teams, and investors alike: this platform is not going anywhere.

3. What Changed Dramatically

Three shifts between 2025 and 2026 are so significant that they deserve to be called structural, not incremental.

1. The Inference Revolution

Training large models has been the defining compute challenge of the past four years. At GTC 2025, the discussion was still anchored in training infrastructure: how many Blackwell GPUs you need to train a frontier model, how to optimize memory bandwidth, how to parallelize across thousands of chips. At GTC 2026, the conversation pivoted hard. Huang described the moment as the “inflection point of inference,” the point at which AI systems are doing real, continuous, economically valuable work at scale. Inference is the daily use of AI models: every query, every agent loop, every autonomous decision. The fact that this now warrants specialized hardware (NVIDIA added an entire new chip category, the Groq LP30 LPU, through its Groq acquisition, explicitly to optimize for low-latency token generation) tells you everything about where the compute bottleneck has shifted.

2. Agentic AI Went From Buzzword to Architecture

At GTC 2025, “agentic AI” appeared in the narrative as a future direction, something coming after large language models matured. By GTC 2026, it had become the organizing principle of the entire product line. Vera Rubin, NVIDIA’s flagship new platform, is explicitly “designed for agentic AI workloads.” NemoClaw is an enterprise-grade agent runtime. OpenClaw (an external agentic AI platform) received a full keynote segment, and Huang said the OpenClaw “event cannot be understated.” NVIDIA is now building the infrastructure layer for a world in which AI systems do not just respond to prompts: they reason, plan, use tools, execute multi-step tasks, and operate autonomously for extended periods. This is the architectural inflection that enterprises have been underestimating.

3. The AI Factory as the New Unit of Enterprise Infrastructure

At GTC 2025, NVIDIA introduced the term “AI Factory”: a data center optimized not for storing and retrieving data, but for continuously generating AI outputs (tokens, decisions, actions). At GTC 2026, it became the anchor of the entire keynote. Huang described AI factories as “the industrial infrastructure of the AI era,” detailed their hardware evolution from individual GPUs to rack-scale systems, and announced that NVIDIA’s supply chain is now producing multiple gigawatts of AI factory capacity per month. This is not a concept anymore. It is a thing you buy, configure, and operate.

4. Infrastructure Layer Shift

| Layer | GTC 2025 | GTC 2026 |
|---|---|---|
| GPU Platform | Blackwell (full production), Blackwell Ultra preview (H2 2025) | Vera Rubin NVLink 72 (full production), Rubin Ultra roadmap, Feynman (2028) |
| Inference | Blackwell inference improvements; Dynamo (open-source, early) | Groq LP30 LPU: dedicated low-latency inference chip; Dynamo 1.0 as AI infra OS |
| System Architecture | DGX systems, NVLink interconnects, AI factory concept introduced | Vera Rubin: 7 chips across 5 rack-scale computers as one unified supercomputer |
| Networking | Co-packaged optics, advanced NVLink, InfiniBand | Dual copper + optical strategy confirmed; NVLink scale-up, optical scale-out |
| Data Libraries | CUDA-X libraries (general) | cuDF (structured data), cuVS (vector/unstructured data), inference-optimized |
| Demand Visibility | ~$500B through 2026 (Blackwell + early Rubin) | ≥$1T through 2027 (Blackwell + Vera Rubin) |
| Supply Output | 6M Blackwell GPUs in first 3.5 quarters; 20M more ordered | Thousands of rack-scale systems per week; multi-gigawatt monthly AI factory capacity |

The Vera Rubin architecture deserves particular attention. This is not an incremental GPU upgrade. NVIDIA has re-architected its flagship system from the ground up for one specific workload class: agentic AI. Agentic workloads are fundamentally different from training or even standard inference. They require heavy memory (agents maintain long context), massive storage access (agents retrieve tools, documentation, and state), and extremely low-latency token generation (agents loop continuously). Vera Rubin integrates seven distinct chip types into five rack-scale computers that operate as a single unified supercomputer. This is computing infrastructure designed for systems that think, not systems that merely process.
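To see why long agent context translates into heavy memory, it helps to estimate the key-value cache that a transformer model keeps for every token in its context. The sketch below assumes a standard transformer with a per-layer KV cache. The model dimensions are hypothetical and do not describe any NVIDIA or partner model.

```python
def kv_cache_bytes(n_layers: int,
                   n_kv_heads: int,
                   head_dim: int,
                   context_tokens: int,
                   bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for one sequence.

    The leading factor of 2 counts one key vector and one value vector
    per token, per layer, per KV head. bytes_per_value=2 assumes 16-bit
    storage; other precisions would change this.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * context_tokens * bytes_per_value)


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context_tokens=1)
long_context = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context_tokens=100_000)
long_context_gib = long_context / 2**30
```

With these placeholder values each token of context costs 128 KiB, so a single 100,000-token agent session needs roughly 12 GiB of cache. Multiply by thousands of concurrent agents and memory, not raw compute, becomes the sizing constraint.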

The Groq LP30 is equally significant. NVIDIA spent $20 billion — its largest acquisition ever — to bring Groq’s Language Processing Unit technology in-house. The Groq LP30 contains 500 megabytes of on-chip SRAM and is designed as a “deterministic data flow processor” with static compilation for ultra-low-latency token generation. The practical implication: when an enterprise agent needs to generate a response in under 100 milliseconds to be useful in a real-time workflow, Vera Rubin handles the complex reasoning and Groq handles the output. NVIDIA’s Dynamo 1.0 software disaggregates inference across these two chips automatically. This is vertical integration at the system level.

“If most of your workload is high throughput, stick with 100% Vera Rubin. If a lot of your workload is coding and high-valued engineering token generation, I would add Groq to 25% of your data center.” — Jensen Huang, GTC 2026
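Huang’s rule of thumb can be read as a capacity-planning exercise: classify requests by latency need, then see what share lands in each pool. The sketch below is a toy illustration and does not represent how Dynamo 1.0 actually schedules work. The `Request` type, the pool names, and the 100 ms threshold (borrowed from the latency example above) are all assumptions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable

LOW_LATENCY_THRESHOLD_MS = 100.0  # assumed cut-off, not a vendor setting
POOLS = ("low_latency", "high_throughput")  # hypothetical pool names


@dataclass(frozen=True)
class Request:
    request_id: str
    latency_budget_ms: float  # how long the caller can wait for output


def choose_pool(req: Request) -> str:
    """Send tight-deadline requests to the low-latency pool."""
    if req.latency_budget_ms < LOW_LATENCY_THRESHOLD_MS:
        return "low_latency"
    return "high_throughput"


def pool_mix(requests: Iterable[Request]) -> Dict[str, float]:
    """Return the fraction of requests routed to each pool."""
    counts = Counter(choose_pool(r) for r in requests)
    total = sum(counts.values())
    if total == 0:
        return {pool: 0.0 for pool in POOLS}
    return {pool: counts[pool] / total for pool in POOLS}


sample = [
    Request("code-completion", 50.0),
    Request("batch-summary", 5_000.0),
    Request("report-generation", 30_000.0),
    Request("nightly-etl", 60_000.0),
]
mix = pool_mix(sample)
```

Running a real request log through a classifier like this gives a first estimate of how much low-latency capacity a deployment would need, which can then be compared against the 25% figure in the quote.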

NStarX Point of View — Infrastructure Strategy

The cloud-first default is not wrong — but it is increasingly insufficient as a complete strategy. Enterprises that assumed “rent GPU capacity from AWS/Azure/GCP indefinitely” are now facing a new reality: the cost of inference at production scale is becoming a primary operating cost, not a pilot budget line.

The emergence of AI factories as a deployable asset class means enterprises now need an AI infrastructure strategy that sits alongside — not subordinate to — their cloud strategy. This means:

- Modeling inference cost per workload type as a first-class financial metric

- Evaluating hybrid AI infrastructure architectures (cloud for burst, colocation or on-premise for steady-state agentic workloads)

- Building internal competency to configure and operate Dynamo-class AI infrastructure stacks, not just consume managed APIs
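The cloud-for-burst, owned-for-steady-state split in the list above comes down to utilization. A minimal sketch of the break-even logic follows, assuming cloud capacity is billed only for hours used while owned capacity costs the same every hour whether busy or idle. The function names and all prices are hypothetical.

```python
def breakeven_utilization(cloud_usd_per_gpu_hour: float,
                          owned_usd_per_gpu_hour: float) -> float:
    """Utilization at which owned and rented capacity cost the same.

    owned_usd_per_gpu_hour is the all-in cost per wall-clock hour
    (amortized hardware, power, operations), paid even when idle.
    A result above 1.0 means owning never breaks even at these prices.
    """
    if cloud_usd_per_gpu_hour <= 0:
        raise ValueError("cloud price must be positive")
    return owned_usd_per_gpu_hour / cloud_usd_per_gpu_hour


def cheaper_option(utilization: float,
                   cloud_usd_per_gpu_hour: float,
                   owned_usd_per_gpu_hour: float) -> str:
    """Compare cost per wall-clock hour at a given utilization (0 to 1)."""
    cloud_cost = cloud_usd_per_gpu_hour * utilization  # pay only when busy
    owned_cost = owned_usd_per_gpu_hour                # pay regardless
    return "owned" if owned_cost < cloud_cost else "cloud"


# Hypothetical prices: $4.00 per rented GPU-hour, $1.00 all-in per owned GPU-hour.
threshold = breakeven_utilization(4.0, 1.0)
steady_state = cheaper_option(0.50, 4.0, 1.0)   # busy half the time
bursty = cheaper_option(0.10, 4.0, 1.0)         # busy one hour in ten
```

Under these placeholder prices, workloads that keep hardware busy more than a quarter of the time favor owned capacity, while spiky workloads stay cheaper in the cloud. Real models would add data egress, staffing, and refresh cycles.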

NStarX’s DLNP platform is specifically architected for this hybrid reality — bringing converged data and model infrastructure to enterprises without the requirement of full hyperscale buildout.

5. Application Layer Shift

The application layer story of GTC 2026 is dominated by two themes that were barely present at GTC 2025: agentic AI platforms and autonomous physical systems. Understanding both is essential for enterprise strategy teams.

The OpenClaw Moment

The amount of keynote time Jensen Huang devoted to OpenClaw — an external, open agentic AI platform — was striking. Huang said the OpenClaw “event cannot be understated.” NVIDIA’s response was to build and release NemoClaw: an enterprise-grade reference stack that makes OpenClaw deployable in corporate environments with proper security, data privacy, and access control. This is NVIDIA explicitly acknowledging that agentic AI is now real enough that enterprises need hardened deployment infrastructure, not research sandboxes. The NemoClaw stack includes OpenShell for open models and a security-first sandbox.

What this signals: the era of building one-off AI chatbots and calling them agents is ending. The next wave is orchestrated multi-agent systems that can autonomously plan, use APIs, read and write documents, query databases, and execute tasks over extended periods without human intervention. This is not science fiction in 2026. It is what NVIDIA’s entire software stack is now optimized to support.

The ChatGPT Moment for Self-Driving Has Arrived

Huang’s declaration that “the ChatGPT moment of self-driving has arrived” is one of the most consequential statements of GTC 2026, and it is backed by concrete commercial evidence. Uber announced it will deploy NVIDIA Drive AV-powered autonomous fleets across 28 cities on four continents by 2028, starting in Los Angeles and San Francisco. Four major automotive OEMs (Nissan, BYD, Geely, and Hyundai) announced Level 4 autonomous vehicles on NVIDIA’s Drive Hyperion program. Isuzu and Japan’s Tier IV are building autonomous buses. These are not pilot programs. These are contractual production commitments.

From Digital Twins to Operational Simulation

At GTC 2025, Omniverse and digital twins were showcased as impressive technical demonstrations. At GTC 2026, NVIDIA’s industrial simulation partnerships — PTC Windchill, Siemens, Dassault Systèmes, Cadence, ETAP, Procore — indicate that digital twins are entering the operational layer of industrial enterprises. Huang suggested a “factor of two” efficiency gain is achievable through proper design simulation. At the scale of an industrial facility or infrastructure project, a factor of two is a billion-dollar number.

NStarX Point of View — Application Layer

Enterprises that are still in “AI pilot mode” in 2026 are not behind by one year. They are behind by one architectural generation. The question for enterprise technology leaders is no longer “should we adopt AI?” It is: “Are we architected to run AI agents in production?”

The answer for most enterprises is no — and the gap is widening. Agentic AI requires infrastructure that most enterprises have not built: persistent agent state management, tool registries, multi-agent orchestration layers, guardrails and security sandboxes, long-context memory systems, and inference pipelines that can serve thousands of concurrent agent loops at low latency.
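Three of those building blocks (a tool registry, persistent agent state, and a guardrail) can be sketched in a few lines to show how they fit together. This is a minimal illustration under stated assumptions, not a production design and not the NemoClaw or OpenClaw API. Every class and function name below is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, FrozenSet, List, Tuple


class ToolRegistry:
    """Central catalogue of callable tools that agents may request."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def call(self, name: str, allowed: FrozenSet[str], **kwargs: Any) -> Any:
        # Guardrail: check the agent's allowlist before touching the tool.
        if name not in allowed:
            raise PermissionError(f"tool {name!r} is not permitted for this agent")
        if name not in self._tools:
            raise KeyError(f"tool {name!r} is not registered")
        return self._tools[name](**kwargs)


@dataclass
class AgentState:
    """State that must survive across steps of a long-running agent."""

    agent_id: str
    allowed_tools: FrozenSet[str]
    history: List[Tuple[str, Dict[str, Any], Any]] = field(default_factory=list)


def run_step(state: AgentState, registry: ToolRegistry,
             tool_name: str, **kwargs: Any) -> Any:
    """Execute one tool call and record it in the agent's history."""
    result = registry.call(tool_name, state.allowed_tools, **kwargs)
    state.history.append((tool_name, kwargs, result))
    return result


registry = ToolRegistry()
registry.register("add", lambda a, b: a + b)
registry.register("delete_records", lambda table: f"deleted {table}")

agent = AgentState(agent_id="invoice-agent", allowed_tools=frozenset({"add"}))
total = run_step(agent, registry, "add", a=1, b=2)
```

In a real deployment the history would live in a durable store rather than in memory, and the allowlist would come from a policy service, but the separation of registry, state, and permission check is the part that carries over.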

This is precisely the service opportunity that NStarX’s Agentic Workflow Engineering practice is designed to address — helping enterprise clients move from isolated AI experiments to production-grade agentic architecture.

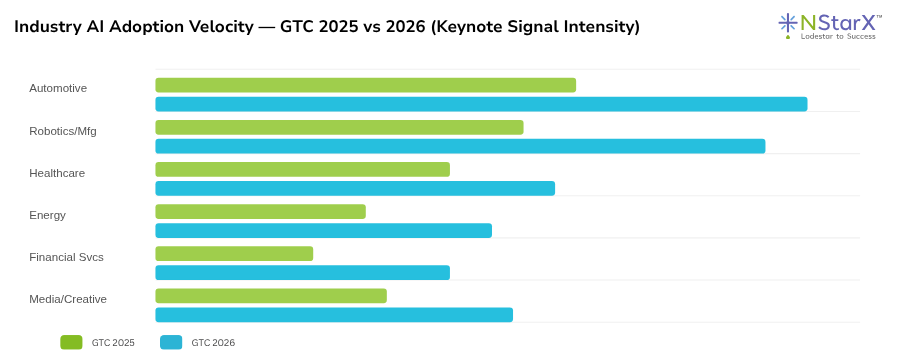

6. Industry Shift

The industry that moved fastest — and most visibly — between GTC 2025 and GTC 2026 is automotive. What was a forward-looking discussion at GTC 2025 (NVIDIA announcing a partnership with General Motors; Isaac GR00T as a foundation model for humanoid robots) became a full-scale commercial deployment narrative at GTC 2026. Five OEMs, one ride-hail giant, 28 cities, four continents, a production timeline anchored in 2027–2028. This is the most concrete industrial AI deployment announcement in GTC history.

Manufacturing and industrial robotics followed closely. ABB, Universal Robots, KUKA, Techman, and a dozen other partners demonstrated production-grade robotic systems trained in NVIDIA Isaac Sim and running on NVIDIA Jetson Thor. The “See, Think, Act” architecture — sensor fusion, generative AI inference, autonomous navigation — is now a standardized industrial stack, not a research framework.

Healthcare showed growing signal. NVIDIA’s BioNeMo model family and Isaac-based surgical and clinical robotics partners indicated that medical AI is moving from diagnostics (interpreting images) to operational AI (autonomous systems operating in clinical environments). The regulatory and deployment timelines for healthcare are longer than automotive, but the foundational technology investment at GTC 2026 suggests a 2028–2030 operational wave.

Financial services received lighter explicit coverage in the keynote, but the infrastructure story has direct implications: the same AI factories powering automotive simulation are the infrastructure that financial services firms need to run real-time risk models, fraud detection agents, and trading intelligence systems at scale. The NVIDIA + IBM + Dell confidential computing integration announced at GTC 2026 is specifically targeted at regulated industries that need to run AI on sensitive data without exposing it.

7. What Enterprises Must Do Now

The standard advice after an NVIDIA GTC is “invest more in GPUs.” That advice misses the point entirely in 2026. The question is not whether to invest in AI infrastructure. The question is whether your organization has the internal capabilities, architecture, and operating model to deploy and run AI at production scale — and specifically, whether you are prepared for the agentic AI wave that is now rolling in.

CIO — Shift from AI Programs to AI Operations

- Appoint an AI Infrastructure lead (distinct from a Data Science lead)

- Build an inference cost model for top-10 AI use cases

- Audit your data architecture for agent-readiness: vector stores, tool registries, state management

- Evaluate on-premise vs. hybrid AI factory economics by FY2027

CTO — Architect for Agentic, Not Just Generative

- Build or adopt a multi-agent orchestration layer (LangChain, LangGraph, custom)

- Evaluate NemoClaw / OpenClaw for enterprise-grade agent deployments

- Investigate Dynamo 1.0 as your AI infrastructure operating system

- Design for disaggregated inference: high-throughput (Vera Rubin class) + low-latency (Groq class)

Chief Data Officer — Build the Data Foundation for Agents

- Migrate from batch analytics pipelines to real-time, agent-queryable data surfaces

- Deploy cuDF / cuVS class GPU-accelerated data libraries for structured and vector workloads

- Implement data access governance for AI agents (not just human users)

- Prioritize federated learning architecture for multi-entity data scenarios

CFO — Model AI as a Capital Asset, Not Just OpEx

- Reframe AI infrastructure capex decisions using tokens-per-watt economics, not just seat licenses

- Establish an AI ROI tracking framework linked to operational outcomes

- Consider Jensen’s own suggestion: AI compute token budgets as a recruiting and productivity lever

- Model AI Factory scenarios at 2027 and 2029 planning horizons

Chief Digital Officer — Move Customer-Facing AI from Copilot to Agent

- Identify top-5 customer journeys suitable for autonomous AI agent handling

- Build internal agent engineering capability or partner with a Service-as-Software firm

- Pilot agentic workflows in 2026, plan production deployments in 2027

- Establish AI agent governance policies before agents go live in customer workflows

Business Unit Leaders — Compete on AI Speed, Not Just AI Presence

- Define “Copilot to Autopilot” roadmap for your function’s top 3 processes

- Request dedicated AI engineering resources (not just access to enterprise APIs)

- Benchmark your AI agent deployment timeline against your fastest competitor

- Understand that the advantage window for first-mover AI automation is 12–18 months

8. NStarX Point of View

NStarX was built for this exact moment. Our thesis — that software and services must converge into measurable, outcome-based delivery — was conceived with the understanding that AI would eventually transform not just what enterprises build, but how they buy capability. GTC 2026 is the clearest signal yet that this transformation has crossed from the theoretical to the operational.

The Service as Software Thesis Gets Validated

The rise of agentic AI is the structural confirmation of the Service as Software model. When AI agents can autonomously execute workflows (onboarding a customer, monitoring a pipeline, generating a regulatory report, optimizing a supply chain leg), the value of a service provider is no longer measured in billable hours. It is measured in outcomes per agent per day. This is what NStarX means when it talks about moving clients from Copilot to Autopilot. The Copilot phase was always a transition state. The destination was always autonomy.

Where NStarX Should Lead

Based on the GTC 2026 signal map, the five highest-value service areas for NStarX in the next 24 months are:

NStarX Strategic Positioning — Post-GTC 2026

- Agentic Workflow Engineering — Designing and deploying production-grade multi-agent systems for enterprise automation. This is the single highest-demand capability emerging from the GTC 2026 narrative.

- AI Factory Strategy & Design — Helping enterprise clients model, architect, and phase the buildout of AI factory infrastructure, including hybrid cloud + on-premise inference strategies anchored in tokens-per-watt economics.

- Enterprise AI Platform Engineering — Building the internal platforms — model registries, agent runtimes, tool orchestration layers, governance frameworks — that enterprises need to operationalize AI at scale.

- Federated Learning & Confidential AI — Serving regulated industries (healthcare, financial services, defense) that need to run AI on sensitive, multi-party data without centralizing it. NVIDIA’s confidential computing integrations with IBM and Dell at GTC 2026 validate this market directly.

- Industrial AI & Digital Twin Engineering — Helping manufacturing, energy, and logistics clients build simulation-backed AI systems using the NVIDIA Isaac / Omniverse ecosystem, particularly as the ETAP, Siemens, and Dassault Systèmes partnerships open new vertical entry points.

The Mistake to Avoid

The biggest mistake NStarX — and every other AI services firm — can make in 2026 is to stay in the AI experimentation economy. The experimentation economy rewards discovery, exploration, and proof-of-concept delivery. The industrialization economy — which GTC 2026 has now confirmed is here — rewards precision, repeatability, and measurable outcomes. The firms that will win the next five years are not the firms with the most impressive demo reel. They are the firms with the most repeatable production deployment playbooks. This is the moment to build those playbooks — or be left behind as the enterprises that hired you to explore AI start hiring others to run it.

9. Conclusion — The Next 5 Years of AI

The arc from GTC 2025 to GTC 2026 describes the transition from the Age of Models to the Age of Systems. In 2025, the prize was having the best model. In 2026, the prize is having the best operational AI system — the best AI factory, the most capable agent fleet, the most efficient inference infrastructure. By 2028, the prize will be having the most capable autonomous physical systems.

NVIDIA’s roadmap makes this trajectory explicit. Vera Rubin ships in 2026. Rubin Ultra follows in 2027. Feynman arrives in 2028. Each generation is not just more compute — it is more capable of supporting more autonomous, more continuous, more physically-grounded AI systems. The hardware roadmap is a capability ladder, and Jensen Huang has been telling us for two years where the top rung leads: a world in which every industry has AI factories, every enterprise workflow has agent coverage, and every physical system has embedded AI intelligence.

The enterprises that will win this decade are not the ones that attended the most GTC keynotes. They are the ones that translated the signal from those keynotes into structural investments in AI platform architecture, agent engineering capability, and outcome-based delivery models — before the window closed.

AI is moving from models → systems → industries. Enterprises are not ready for this shift. The winners will be those that build AI platforms, not AI pilots. The next decade belongs to the companies that build AI factories and agentic enterprises.

The token has become the new unit of economic value in AI. The factory that produces the most tokens — at the lowest cost, with the highest reliability, in service of the most valuable workflows — wins. That is the GTC 2026 thesis. And for the first time in the history of this conference, the factories are already running.
