By Sujay Kumar | Chief Revenue Officer, NStarX Inc. | February 2026

1. GPUs Are Just the Beginning — You Need a Software Stack

There is a persistent myth in enterprise technology circles: that buying the right GPU hardware is the hard part of building AI systems. Get a rack of H100s into your data center, the thinking goes, and you are most of the way there. Anyone who has actually tried to build a production AI workload from bare metal will tell you this view is dangerously wrong.

The raw compute power of modern GPUs — whether from NVIDIA, AMD, or Intel — is extraordinary. A single NVIDIA H100 can deliver nearly 4 petaflops of FP8 tensor core performance. AMD’s MI300X bundles 192GB of HBM3 memory. Intel’s Gaudi 3 is engineered with cost-conscious enterprise buyers in mind. But none of this hardware knows, out of the box, how to load a large language model efficiently, how to manage memory across concurrent requests, how to apply guardrails to agentic outputs, or how to expose a stable API that your applications can reliably call.

Think of it like this: a Formula 1 engine, on its own, is not a racing car. It needs suspension, aerodynamics, software-controlled systems, telemetry, and a pit crew. The engine provides the raw power. Everything else determines whether you actually win races. The same is true in enterprise AI. The GPU provides computational horsepower. The software stack is what actually gets you to production.

Here is what a proper AI inference software stack needs to do, and why each layer matters:

- Model loading and optimization: Different models need to be quantized, pruned, or compiled into formats that specific hardware can execute efficiently. Without this step, you might get a tenth of the actual throughput your GPU is capable of delivering.

- Memory management and KV caching: LLM inference is memory-bound. Managing the key-value cache that enables continuous batching of requests is one of the most technically complex aspects of production inference. Do it wrong and your system either runs out of GPU memory or wastes capacity.

- Concurrent request handling: Enterprise workloads are not sequential — dozens or hundreds of users might be hitting your inference endpoint simultaneously. The software stack must intelligently batch and schedule these requests.

- Containerization and portability: For operations teams to manage AI services the same way they manage everything else in their infrastructure, inference needs to be packaged into reproducible, portable containers with standardized lifecycle management.

- API stability: Developers building applications on top of AI services need stable API contracts. A production-grade stack ensures that model updates or infrastructure changes do not break downstream applications.

- Monitoring, logging, and observability: You cannot operate what you cannot see. Tracking token throughput, latency percentiles, error rates, and hardware utilization requires purpose-built instrumentation.

- Security and governance: In regulated industries — finance, healthcare, government — access control, audit logging, data residency compliance, and output guardrails are not optional extras. They are prerequisites.

Building all of this from scratch, on top of raw GPU drivers, is a months-long engineering project that requires specialized expertise most enterprises simply do not have in-house. That reality is exactly what software stacks like NVIDIA NIM, AMD’s ROCm ecosystem, and Intel’s oneAPI are designed to address — and it is why the software layer has become the decisive battleground in enterprise AI infrastructure.

2. The Developer’s Lament: Building AI Workloads Without the Right Stack

Talk to the engineers who were building enterprise AI pipelines in 2022 and 2023 — before mature inference stacks existed — and you will hear variations of the same story. Getting a model to run on a GPU was the easy part. Making it run reliably, efficiently, at scale, in production, with proper security controls — that was where teams went to die.

The frustration was not abstract. It showed up in sprint after sprint of technical debt, in missed project deadlines, in ML engineers burning out on infrastructure work that had nothing to do with their actual expertise. Here is how practitioners actually described the experience:

“We spent six weeks just trying to get our inference server to handle concurrent requests without running out of GPU memory. The model would work fine in testing with one user. Under real load, it just crashed. We ended up building our own batching logic from scratch, which then broke every time we updated the model version.” — Senior ML Engineer, Fortune 500 Financial Services Firm, shared on Reddit r/MachineLearning

“The hardest part was not the model. The hardest part was the operational stuff — the API gateway, versioning, rollback, monitoring. We basically had to build a mini MLOps platform just to serve one model. Our whole AI team of five people spent three months on infrastructure before we wrote a single line of application code.” — CTO, Mid-market Healthcare Technology Company, LinkedIn discussion thread

“Every time we updated the base model, something would break somewhere else in the stack. Dependencies would conflict. CUDA versions would be incompatible. It felt like we were building on sand. We needed something that just worked as a unit.” — Platform Architect, Manufacturing Enterprise, Hacker News

“Our biggest problem was that our data scientists could build great models, but none of them knew how to tune TensorRT or configure vLLM for our hardware. We were leaving 60-70% of our GPU performance on the table because we did not have the inference optimization expertise in-house.” — VP of Engineering, Retail Technology Company, shared in enterprise AI forum

These are not edge cases. They reflect a structural gap that existed — and in many organizations still exists — between raw hardware capability and production-ready AI services. Enterprise AI deployments consistently faced a cluster of interlocking problems that no single tool addressed in isolation.

The dependency hell problem was particularly insidious. An enterprise AI workload might depend on a specific CUDA version, a specific PyTorch release, a specific version of a model serving framework, and hardware driver versions — all of which needed to be perfectly aligned. A company running multiple models from different vendors quickly discovered that managing these dependencies across models was a full-time engineering problem.

Security and compliance teams posed a different kind of challenge. When something goes wrong in production — a model starts hallucinating, an agentic workflow takes an unintended action, a user extracts sensitive information through prompt injection — you need audit trails, rollback capabilities, and controls. Building those on top of raw inference frameworks meant reinventing security infrastructure that no AI team should have to build from scratch.

3. The Numbers Don’t Lie: The Scale of the AI Deployment Gap

The anecdotes from section two are backed by industry research that paints a stark picture of how poorly enterprises have fared when trying to operationalize AI without proper infrastructure.

According to a 2025 analysis of enterprise AI adoption, only about one in four AI initiatives delivers its expected ROI, and fewer than 20% have been fully scaled across an enterprise. An MIT study found that only 5% of custom AI projects ever reach production at all. For an enterprise sector that is collectively investing billions in AI infrastructure, these are deeply troubling numbers.

The specific failure modes are telling:

- 86% of enterprises require significant upgrades to their existing technology stack to successfully deploy AI agents, according to a Tray.ai survey of over 1,000 enterprise technology leaders published in January 2025.

- 62% of enterprise leaders cite data-related challenges, particularly around access and integration, as their primary obstacle to AI adoption, according to Deloitte’s 2024 State of AI in the Enterprise report.

- 40% of enterprises report inadequate internal AI expertise to meet their goals in 2025 — and this is after the major talent market expansion of the previous two years.

- Computing costs jumped 89% between 2023 and 2025, with 70% of executives citing generative AI as the primary driver. According to IBM research, every single executive surveyed canceled or postponed at least one AI initiative due to cost concerns during this period.

- Only 6% of companies with more than 500 employees globally have actually deployed enterprise AI tools to their workforce — even as 78% report using AI in at least one function.

The deployment-to-production gap is especially pronounced for the most advanced use cases. Gartner projects that 33% of enterprise applications will incorporate agentic AI by 2028, up from less than 1% in 2024. But as of Q1 2025, while 65% of enterprises have agentic AI pilots, only 11% have achieved any form of full production deployment. The gap between “we have a pilot” and “we have a production system” remains enormous.

On the infrastructure side, the performance gap between optimized and unoptimized inference is substantial. NVIDIA’s own benchmarks show that properly configured NIM deployments deliver 1.5x to 3.7x better performance than out-of-the-shelf inference engines across various scenarios, with the gap widening significantly at the higher concurrency levels typical of enterprise workloads. Cloudera has reported a 36x performance boost when shifting from ad-hoc inference setups to NIM-optimized deployments. Teams that are not using optimized inference stacks are not just paying more — they are delivering meaningfully worse user experiences.

The cost-per-token math makes this concrete. At enterprise scale, a 2x improvement in token throughput translates directly into a 50% reduction in GPU cost for the same workload. For an organization running inference at scale, this is the difference between a financially viable AI service and one that gets defunded after the first budget review.

4. The Industry Responds: Inference Stacks From NVIDIA, AMD, and Intel

The severity and breadth of the enterprise deployment problem created a clear market need, and all three major GPU vendors have responded — though with very different approaches, maturity levels, and market success.

NVIDIA NIM: The Production Standard

NVIDIA introduced NIM (NVIDIA Inference Microservices) at GTC in March 2024, and the market response was immediate. NIM packages everything needed to run production AI inference — optimized model weights, TensorRT-LLM inference engines, dependency management, standard APIs, enterprise monitoring, and support — into a single containerized unit that can be deployed in minutes.

The design philosophy is elegantly simple: instead of requiring every enterprise to solve the same hard inference engineering problems independently, NVIDIA solves them once and distributes the solution as enterprise software. NIM containers are pre-validated against specific GPU configurations, which means the performance tuning, memory optimization, and compatibility testing have already been done by NVIDIA’s engineers.

NVIDIA’s full AI software ecosystem around NIM — which includes the NeMo framework for model customization and fine-tuning, NeMo Guardrails for safety and compliance, the Triton Inference Server for multi-framework serving, and CUDA-X microservices for RAG, data processing, and more — provides a comprehensive platform that addresses the full AI application lifecycle. The entire stack runs on NVIDIA’s CUDA platform, which has spent over a decade accumulating library optimizations, framework integrations, and developer tooling that competitors cannot replicate overnight.

AMD ROCm: The Open Alternative

AMD’s answer to CUDA’s dominance is ROCm (Radeon Open Compute), an open-source software stack that emphasizes portability, flexibility, and freedom from vendor lock-in. ROCm integrates directly with PyTorch, TensorFlow, and JAX upstream, meaning teams can theoretically migrate from NVIDIA hardware to AMD hardware by swapping containers and drivers rather than rewriting application code.

ROCm 7, released in mid-2025, represented a major leap — delivering up to 3.5x performance over ROCm 6 across popular inference workloads through a re-engineered token generation pipeline. AMD’s Instinct MI series hardware, particularly the MI300X with its extraordinary 192GB of HBM3 memory, has genuine advantages for large model inference. Major production deployments at Meta, OpenAI, Fireworks AI, and Cohere demonstrate that ROCm is no longer just a research curiosity.

However, ROCm still carries the scars of its earlier versions. Developer forums continue to report significant friction with installation, particularly on Windows via WSL2, and with mid-range GPU support. Enterprises considering AMD face a more complex ecosystem with fewer pre-built, validated inference packages compared to what NVIDIA offers through NIM.

Intel oneAPI and Gaudi: The Standards-Based Approach

Intel’s strategy is built around standardization rather than optimization for any specific hardware. oneAPI is built on SYCL, a C++-based programming model rooted in open industry standards, designed to run across CPUs, GPUs, and other accelerators from multiple vendors. The appeal is clear: code that does not depend on any single vendor’s proprietary runtime.

In practice, oneAPI’s strength is also its challenge. The toolkit is comprehensive — some might say exhaustive — and installation footprint alone (over 25GB for a full installation) signals a platform engineered for industrial-scale deployments rather than the rapid iteration culture of modern AI teams. Intel’s Gaudi 3 accelerators offer competitive pricing and integrate with standard Ethernet networking (avoiding NVLink’s proprietary costs), which resonates with enterprises that prioritize total cost of ownership.

The honest assessment is that Intel’s enterprise AI software story is still maturing. Intel conceded ground in the consumer AI GPU market and is now focusing on cost-sensitive enterprise inference workloads where price-per-token matters more than peak throughput benchmarks.

5. Comparing the Stacks: A Market-Grounded Assessment

The following comparison is based on publicly available benchmark data, developer forum feedback, enterprise customer reviews, and market analysis published through early 2026. It is designed to help enterprise technology leaders understand where each stack excels and where gaps remain.

| Capability | NVIDIA NIM / CUDA-X | AMD ROCm 7 | Intel oneAPI / Gaudi |

|---|---|---|---|

| Time to Production | ★★★★★ Deploy in minutes via pre-built containers. Validated on certified hardware. | ★★★ Improved significantly with ROCm 7. Installation complexity remains on some configs. | ★★★ Large toolkit footprint. Longer setup but well-documented for enterprise scenarios. |

| Inference Throughput | ★★★★★ 2.6x vs off-the-shelf H100. 1.5–3.7x vs open-source engines. | ★★★★ MI355X projects 4.2x over MI300X. Competitive for large model inference. | ★★★ Gaudi 3 competitive on cost-normalized workloads, lags on peak throughput. |

| Ecosystem Maturity | ★★★★★ Deepest ecosystem: cuDNN, TensorRT, 140+ NIM models, 2 decades of CUDA. | ★★★★ ROCm 7 supports all major frameworks. Ecosystem 3–5 years behind CUDA. | ★★★ SYCL/oneAPI growing but nascent compared to CUDA. Strong in HPC segments. |

| Enterprise Support | ★★★★★ NVIDIA AI Enterprise with SLAs, dedicated experts, API stability guarantees. | ★★★★ AMD Instinct enterprise support improving. Less comprehensive than NVIDIA. | ★★★ Intel enterprise support solid for Gaudi deployments. oneAPI support mixed. |

| Hardware Flexibility | ★★★★ Runs on any NVIDIA GPU (cloud, DC, workstation). Limited to NVIDIA hardware. | ★★★★★ Open standard, hardware-agnostic philosophy. MI300X to RDNA 4 range. | ★★★★★ Designed for heterogeneous compute (CPU+GPU+FPGA). Broadest hardware range. |

| Cost Economics | ★★★ Premium pricing. Higher TCO offset by performance and productivity gains. | ★★★★ MI300X offers 20–30% lower cost than comparable NVIDIA configs per AMD. | ★★★★ Gaudi 3 positioned as cost-competitive inference tier. Strong TCO story. |

| Agentic AI Support | ★★★★★ NeMo Guardrails, NIM Agent Blueprints, multi-agent orchestration built-in. | ★★★ Framework-level support. Lacks NVIDIA’s pre-built agentic workflow components. | ★★★ Early-stage. Intel ecosystem partners providing agentic middleware. |

| AI Security / Guardrails | ★★★★★ NeMo Guardrails NIMs for enterprise accuracy, safety, and control of agents. | ★★★ Security at framework level. Relies on third-party for guardrails. | ★★★ Standards-based security. Guardrail tooling via third-party integration. |

| Developer Experience | ★★★★★ 28M CUDA developers. Richest tutorials, forums, documentation. | ★★★★ HIP enables CUDA code migration. Growing community. Forum support adequate. | ★★★ Comprehensive documentation but steep learning curve. Less community activity. |

| Cloud Integration | ★★★★★ AWS, Azure, GCP, Oracle, all major clouds support NIM natively. | ★★★★ Azure, OCI, AWS support MI300X. Cloud footprint growing but smaller. | ★★★ Azure and OCI primary. Gaudi cloud availability limited compared to NVIDIA. |

| RAG / Retrieval Support | ★★★★★ NeMo Retriever NIM, CUDA-X RAG microservices, validated pipeline blueprints. | ★★★ Framework-level. ROCm supports RAG tools but no pre-built validated stack. | ★★★ oneAPI supports RAG frameworks. No purpose-built retrieval microservice tier. |

The table above reflects the market reality as of early 2026: NVIDIA leads decisively on ecosystem maturity, enterprise support, and production readiness. AMD has emerged as a credible alternative — particularly for organizations where memory capacity, open standards, or hardware cost is the primary driver. Intel is competing on price and standards compliance, with an eye on the cost-sensitive inference tier.

For most enterprises building AI applications today, especially those involving complex agentic workflows or requiring production-grade security and governance, NVIDIA’s NIM stack represents the lowest-risk path to reliable production deployments.

6. Inside NVIDIA NIM: Architecture That Makes It Work

To understand why NIM works as well as it does, it helps to understand what is actually inside the container and how the pieces fit together.

The Core NIM Container

A NIM container is not just a packaged model. It contains the model weights (in optimized formats like FP8 or INT4), an inference engine selected and configured for the target GPU, an OpenAI-compatible REST API layer, dependency-managed runtime components, and health check and observability endpoints — all pre-tested and validated together.

The inference engine selection is intelligent rather than fixed. NIM supports TensorRT-LLM, vLLM, SGLang, and Triton backends, automatically choosing the optimal engine for each combination of model architecture and GPU type. This means a Llama-3.1-8B model on an RTX A6000 workstation will be served differently than the same model on a DGX H100 cluster, with each configuration optimized for its target hardware without requiring the operator to understand inference engine internals.

The NVIDIA AI Enterprise Layer

Production NIM deployments operate within NVIDIA AI Enterprise, which wraps the technical stack with enterprise software guarantees: SLA-backed API stability (meaning NVIDIA commits to backward compatibility on a versioned basis), security advisories and patching, access to NVIDIA AI specialists, and validated reference architectures for common deployment topologies.

This enterprise layer matters enormously for regulated industries. A healthcare provider deploying AI in clinical decision support workflows needs documented evidence that the inference software has been validated against specific configurations. A financial services firm needs audit-ready documentation of what models are running, what versions they are, and what controls are in place. NVIDIA AI Enterprise provides this paper trail in a way that open-source inference frameworks — however technically capable — simply do not.

The Customization Pipeline

NIM is not just about deploying foundation models as-is. NVIDIA NeMo provides the fine-tuning infrastructure to adapt base models to specific enterprise domains using proprietary data, with LoRA adapter support that allows efficient tuning without retraining the full model. Fine-tuned models deploy through the same NIM container abstraction, meaning customized models benefit from the same operational infrastructure as foundation model deployments.

Multi-NIM Orchestration and Agentic Patterns

Modern enterprise AI is increasingly multi-modal and multi-agent. NVIDIA’s NIM architecture supports this through composability: individual NIM microservices (an LLM for reasoning, a vision model for document understanding, a speech NIM for voice interfaces, a retrieval NIM for RAG) can be composed into complex pipelines using standard API calls.

NIM Agent Blueprints provide pre-built, customizable reference architectures for common agentic patterns — customer service agents, PDF extraction pipelines, multimodal document processing workflows, drug discovery assistants. These blueprints include the NIM configurations, Helm charts for Kubernetes deployment, and example integration code, dramatically reducing the time from concept to running prototype.

NeMo Guardrails — now available as dedicated NIM microservices since early 2025 — add a programmable safety and accuracy layer specifically designed for agentic AI. Guardrails can enforce topic boundaries, detect and prevent prompt injection, validate factual claims against enterprise knowledge bases, and ensure that agentic actions stay within defined scopes. This is the infrastructure layer that separates experimental AI agents from enterprise-grade ones.

7. How NStarX Helps Enterprises Leverage the NIM Stack

Having the right software stack is necessary but not sufficient. The gap between knowing that NIM exists and successfully deploying a production AI system that delivers measurable business value is where most enterprise AI initiatives still get stuck. This is precisely where NStarX enters the picture.

NStarX is an AI-first enterprise transformation company that operates at the intersection of advanced AI infrastructure and business outcomes. Through the DLNP (Data Lake and Neural Platform), NStarX provides a unified environment that integrates NVIDIA’s NIM stack with enterprise data pipelines, governance frameworks, and application layers — designed specifically for the Russell 2000 and mid-market enterprise that cannot afford the hyperscaler-scale AI engineering teams that companies like Google or Amazon deploy internally.

Meeting Customers Where Their GPUs Live

One of the most common enterprise AI scenarios NStarX encounters: a company has already made capital investments in GPU infrastructure — NVIDIA-powered data center nodes, edge GPUs, or cloud GPU instances — and now needs to turn that hardware investment into actual AI capability. NIM’s portability across cloud, data center, and workstation environments makes it the natural infrastructure layer, but deploying NIM well requires expertise in Kubernetes orchestration, GPU driver management, network configuration, and AI-specific observability.

NStarX’s implementation services cover this full stack: infrastructure readiness assessment, NIM deployment and configuration, integration with existing enterprise data systems, and ongoing operational support. Whether a client is running on-premises DGX infrastructure, NVIDIA-certified systems from Dell, HPE, or Cisco, or managed GPU instances on AWS, Azure, or Oracle Cloud, NStarX delivers NIM-powered AI services that are appropriately tuned for the target environment.

Generative AI Patterns NStarX Addresses Using NIM

The NIM ecosystem enables a range of generative AI patterns that NStarX implements for enterprise clients:

- Enterprise RAG Systems: Retrieval-Augmented Generation using NeMo Retriever NIMs combined with vector databases and enterprise document repositories. This pattern grounds AI responses in authoritative enterprise knowledge, addressing the hallucination problem that makes generic LLMs unsuitable for compliance-sensitive use cases.

- Multimodal Document Intelligence: Combining vision NIMs with language NIMs for document processing pipelines — extracting structured data from contracts, medical records, financial statements, and engineering drawings. NStarX has built production deployments of this pattern for healthcare and financial services clients.

- Domain-Adapted Customer Engagement: Fine-tuned models deployed through NIM, customized on client-specific interaction data, serving as the inference backbone for customer service automation, internal helpdesk systems, and product advisory tools.

- Structured Data Analytics Agents: Connecting LLM NIMs to enterprise databases through NIM-compatible tool-calling interfaces, enabling natural language querying of operational systems without compromising data access controls.

Agentic AI Patterns

Agentic AI — systems that plan, reason, and execute multi-step tasks with varying degrees of autonomy — represents the next major frontier in enterprise AI. NStarX implements agentic AI patterns built on the NIM stack that are designed for enterprise trust requirements:

- Supervised Automation Agents: AI agents that execute defined workflows — data collection, report generation, exception handling — with human-in-the-loop checkpoints at high-stakes decision points. NIM’s API stability and NeMo Guardrails provide the reliability guarantees that supervised automation requires.

- Multi-Agent Orchestration: Coordinating specialized agents — a research agent, a writing agent, a validation agent — into a cohesive pipeline that can tackle complex knowledge work tasks. NStarX implements these patterns using NIM’s composable microservice architecture.

- Federated Learning Agents: NStarX’s federated learning capabilities extend NIM deployments across organizational boundaries, enabling AI agents to learn from distributed enterprise data without centralizing sensitive information. This is particularly valuable for healthcare consortia and financial services networks where data sharing is legally constrained.

AI Security and Governance Layers

Deploying NIM is not the end of the story — it is the beginning. Enterprises in regulated industries need governance and security layers that wrap every AI system, regardless of how well the underlying inference stack is built. NStarX implements a comprehensive governance architecture on top of NIM deployments that addresses:

- Access Control and Identity: Integrating NIM API endpoints with enterprise identity providers (Azure AD, Okta, etc.) through zero-trust authentication, ensuring that AI capabilities are accessible only to authorized users with appropriate data access rights.

- Prompt Injection Defense: Systematic detection and mitigation of prompt injection attacks, using NeMo Guardrails NIMs combined with NStarX’s custom rule sets built from real enterprise threat patterns.

- Output Auditing and Lineage: Every AI-generated output logged with full provenance — which model, which version, which input prompt, which retrieved context. This audit trail satisfies compliance requirements in healthcare (HIPAA audit controls), financial services (SOC 2, model risk management), and government contexts.

- Model Risk Management: Continuous monitoring of model behavior against defined performance benchmarks, with automated alerts when drift or degradation is detected. NStarX integrates this monitoring layer with enterprise incident management systems.

- Data Residency Controls: For clients with data sovereignty requirements, NStarX configures NIM deployments to ensure that all inference occurs on infrastructure within the required geographic boundaries, with explicit controls preventing data egress.

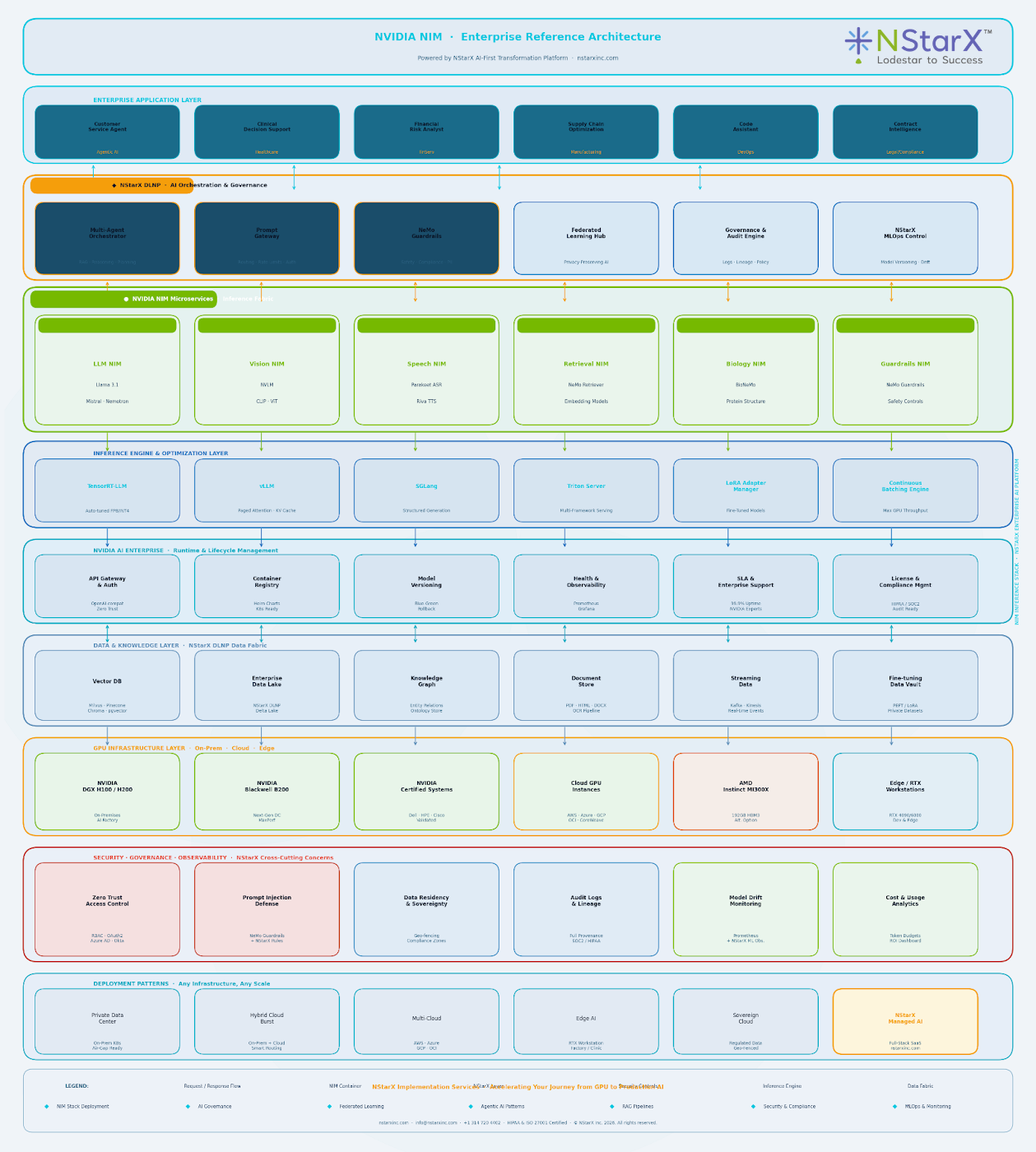

Below is our (NStarX) generic NIMs architecture for enterprise reference as shown in Figure 1:

Figure 1: NStarX NIMs reference architecture

This governance layer is not a bolt-on afterthought in NStarX’s implementation methodology — it is designed alongside the inference infrastructure from day one, because retrofitting governance onto an already-deployed AI system is significantly more expensive and disruptive than building it in from the start.

8. Where This Is All Going: The Next Three to Five Years

The inference stack landscape is moving fast, and enterprises making infrastructure decisions today need to think beyond current capabilities to where the market is heading.

NVIDIA’s NIM Evolution

NVIDIA’s trajectory is clear: expand NIM coverage across more model types, deepen integration with enterprise systems, and build out the agentic AI infrastructure. The January 2025 addition of guardrails NIMs and the ongoing expansion of NIM Agent Blueprints signal a deliberate move from inference infrastructure to full AI application platform. Expect NIM to become the de facto API layer for enterprise AI agents across verticals.

The Blackwell architecture’s support for FP4 inference and the projected performance curves suggest that the cost-per-token economics will continue improving significantly, making AI services that are currently financially marginal at scale much more viable. By 2027, the performance-per-watt improvements from Blackwell and its successors will likely make on-premises inference competitive with cloud inference for many enterprise workloads, reversing some of the momentum that has been pushing workloads to managed cloud APIs.

AMD’s Growing Relevance

AMD is not standing still. ROCm 7’s 3.5x performance improvement over ROCm 6 in a single release cycle demonstrates the pace of development. The MI350 and MI400 series represent aggressive hardware ambitions, and AMD’s 2030 goal of 20x rack-scale energy efficiency improvement targets the total cost of ownership argument that will matter more and more as AI inference scales across organizations.

The enterprise case for AMD hardware will grow as ROCm matures and the memory capacity advantage of the MI series (288GB HBM3e on MI350) becomes increasingly important for running frontier model sizes without model parallelism complexity. Organizations that diversify their GPU estate should expect AMD tooling to be significantly more enterprise-ready in 2027 than it is today.

Intel’s Bet on the Cost-Sensitive Tier

Intel’s play is for the enterprises that will deploy AI inference at massive scale but cannot justify the premium GPU pricing required to run everything on H100-class hardware. As AI inference moves from experimental to operational across industries, a two-tier hardware strategy — expensive hardware for latency-sensitive or high-complexity workloads, cost-optimized hardware for high-volume routine inference — will become common. Intel’s Gaudi series and oneAPI are positioned for that lower tier.

The Open Source Reckoning

The most interesting wildcard in this landscape is the open source ecosystem. Tools like vLLM, SGLang, and Ollama have improved dramatically, and the community around open inference infrastructure is one of the most active in software. The gap between open-source inference tools and proprietary stacks like NIM is real but narrowing.

For enterprises that prioritize avoiding vendor lock-in above all else, the emerging strategy is to build on open-source inference frameworks while using commercial software for the higher-level layers — governance, guardrails, orchestration — where open-source tooling remains less mature. By 2028, expect several open-source inference stacks to achieve functional parity with NIM on core inference performance, forcing NVIDIA to differentiate on the enterprise experience, ecosystem breadth, and upper-stack capabilities rather than inference optimization alone.

The UXL Foundation (backed by Intel, ARM, Samsung, and others) is working toward a genuinely hardware-agnostic AI programming model. If this initiative achieves critical mass — and it has the institutional backing to do so — it could meaningfully disrupt CUDA’s dominance in the 2027-2030 timeframe, creating a world where enterprises can mix and match inference hardware without the switching costs that currently anchor organizations to NVIDIA.

The Rise of AI Factories

NVIDIA’s own framing has shifted — Jensen Huang now talks not about data centers but about “AI factories,” facilities whose primary purpose is generating AI inference at scale. This framing captures something real: as AI services become as fundamental to enterprise operations as databases and communication platforms, the infrastructure to run them will need to be purpose-built, always-on, and industrially managed. The inference software stack — NIM or its successors — will be as fundamental to these AI factories as an operating system is to a general-purpose computing environment.

9. The Future of Inference Stacks and NStarX’s Role

As the inference stack market matures and competition intensifies, the value that systems integrators and AI platform companies provide will shift from basic deployment to strategic architecture and ongoing optimization. This is exactly where NStarX’s positioning is strongest.

The coming years will require enterprises to navigate several decisions simultaneously: which models to run on which hardware, when to use cloud versus on-premises inference, how to manage the economics of AI at scale, how to maintain governance as model capabilities expand, and how to build organizational processes that can actually extract value from increasingly autonomous AI systems.

NStarX is building toward a vision where its DLNP platform serves as the enterprise control plane for all of this — a system that can optimize AI workload placement across different hardware (NVIDIA, AMD, eventually Intel Gaudi), manage the full governance and compliance lifecycle, and provide the business intelligence layer that helps organizations understand whether their AI investment is actually generating the returns it promised.

For NStarX clients, the key advantage is not just technical expertise with NIM or ROCm or oneAPI — it is a methodology that keeps business outcomes at the center of every infrastructure decision. The question is never “which inference stack is technically superior?” It is always “which infrastructure choices will help this specific organization achieve its specific AI objectives reliably, securely, and at an economics that makes sense?”

That framing changes the conversation from technology selection to business transformation — which is where the real value of enterprise AI has always lived.

10. Conclusion

The story of enterprise AI infrastructure over the last three years is fundamentally a story about the gap between what GPUs can do and what enterprises have been able to actually deploy. That gap is not a hardware problem. It is a software problem — specifically, a problem of fragmentation, operational complexity, and the absence of production-grade tooling that enterprises could trust to run mission-critical AI services.

NVIDIA NIM did not invent inference optimization. It packaged two decades of GPU software expertise into a form that enterprises could actually use. By reducing the time from infrastructure to production from weeks or months to minutes, and by wrapping the whole thing in enterprise software guarantees — SLAs, security support, API stability — NIM addressed the structural barrier that had been keeping AI pilots from becoming AI products.

AMD and Intel are building real alternatives. ROCm 7 is meaningfully better than its predecessors, and AMD’s hardware economics and open-source philosophy will attract an increasingly significant slice of enterprise deployments. Intel’s standards-based approach positions Gaudi well for the cost-sensitive inference tier that will grow as AI scales operationally across industries. Healthy competition across these three stacks will benefit enterprises through better performance, lower costs, and reduced vendor dependency over time.

Open source is not standing still either. By the end of this decade, the performance gap between commercial inference stacks and their open-source counterparts will have narrowed significantly. The lasting competitive advantage for commercial vendors like NVIDIA will not be inference performance per se — it will be the surrounding ecosystem: governance, compliance, enterprise support, agentic orchestration, and the network effects of a platform that thousands of enterprises are building on together.

For enterprises navigating this landscape — particularly the mid-market organizations that cannot afford dedicated AI infrastructure teams — the practical advice is straightforward: start on the most mature, lowest-friction stack available, which today means NVIDIA NIM. Build your governance and security architecture alongside your inference infrastructure from day one, not as an afterthought. Choose implementation partners who understand both the technology and the business context well enough to make decisions that will age well as the landscape continues to evolve.

The AI factory era is beginning. The enterprises that build their inference infrastructure on solid foundations now will be the ones positioned to take full advantage of the agentic AI capabilities that are already in early production and will become mainstream within the next two to three years. The software stack you choose today is more consequential than it might appear.

About the Author: Sujay Kumar is Chief Revenue Officer at NStarX Inc., an AI-first enterprise transformation company. NStarX helps mid-market and Russell 2000 enterprises become AI-native through its DLNP platform. Learn more at dev-wp.nstarxinc.com/

11. References

1. NVIDIA NIM Microservices – Official Product Page

https://www.nvidia.com/en-us/ai-data-science/products/nim-microservices/

2. NVIDIA Launches Generative AI Microservices for Developers (GTC 2024) – NVIDIA Newsroom

https://nvidianews.nvidia.com/news/generative-ai-microservices-for-developers

3. NVIDIA NIM and Inference Microservices: Deploying AI at Enterprise Scale – Introl Blog (2025)

https://introl.com/blog/nvidia-nim-inference-microservices-enterprise-deployment-guide-2025

4. NIM for Developers – NVIDIA Developer Portal

https://developer.nvidia.com/nim

5. NVIDIA NIM Offers Optimized Inference Microservices for Deploying AI Models at Scale – NVIDIA Technical Blog

https://developer.nvidia.com/blog/nvidia-nim-offers-optimized-inference-microservices-for-deploying-ai-models-at-scale/

6. Why Do 80% of AI Pilots Fail to Scale? – EPAM Insights (2025)

https://www.epam.com/insights/ai/blogs/enterprise-ai-deployment-challenges

7. The 7 Biggest AI Adoption Challenges for 2025 – Stack AI Blog

https://www.stack-ai.com/blog/the-biggest-ai-adoption-challenges

8. Enterprise AI is at a Tipping Point – World Economic Forum (2025)

https://www.weforum.org/stories/2025/07/enterprise-ai-tipping-point-what-comes-next/

9. New Research Uncovers Top Challenges in Enterprise AI Agent Adoption – Architecture & Governance Magazine (January 2025)

https://www.architectureandgovernance.com/artificial-intelligence/new-research-uncovers-top-challenges-in-enterprise-ai-agent-adoption/

10. 65% of Enterprise AI Deployments Are Stalling — The Real Bottleneck – Jay Cadmus on Medium (November 2025)

https://medium.com/@jay.cadmus/65-of-enterprise-ai-deployments-are-stalling-the-real-bottleneck-3d29d6e85f0a

11. Why Your Enterprise Isn’t Ready for Agentic AI Workflows – Gigster (May 2025)

https://gigster.com/blog/why-your-enterprise-isnt-ready-for-agentic-ai-workflows/

12. Beyond CUDA: Inside the Push to Loosen NVIDIA’s Grip on AI Computing – SDxCentral (January 2026)

https://www.sdxcentral.com/analysis/beyond-cuda-inside-the-push-to-loosen-nvidias-grip-on-ai-computing/

13. The Fragmentation Crisis: AMD ROCm vs. Intel oneAPI vs. NVIDIA – ConcreteUX (December 2025)

https://www.concreteux.com/post/the-fragmentation-crisis

14. AMD Unveils Vision for an Open AI Ecosystem at Advancing AI 2025 – AMD Newsroom (June 2025)

https://ir.amd.com/news-events/press-releases/detail/1255/amd-unveils-vision-for-an-open-ai-ecosystem-detailing-new-silicon-software-and-systems-at-advancing-ai-2025

15. AMD’s AI Surge Challenges NVIDIA’s Dominance – TechNewsWorld (June 2025)

https://www.technewsworld.com/story/amds-ai-surge-challenges-nvidias-dominance-179781.html

16. NVIDIA AI Strategy: Analysis of Sustained Dominance – Klover.ai (July 2025)

https://www.klover.ai/nvidia-ai-strategy-analysis-sustained-dominance-ai/

17. GPU Software for AI: CUDA vs. ROCm in 2026 – AIM Research

https://research.aimultiple.com/cuda-vs-rocm/

18. LLM Inference Hardware: An Enterprise Guide to Key Players – IntuitionLabs (October 2025)

https://intuitionlabs.ai/articles/llm-inference-hardware-enterprise-guide

19. Enterprise AI and Data Architecture in 2025 – Cloudera Blog

https://www.cloudera.com/blog/business/enterprise-ai-and-data-architecture-in-2025-from-experimentation-to-integration.html

20. Deloitte 2024 State of AI in the Enterprise Report – via World Economic Forum

https://www.weforum.org/stories/2025/07/enterprise-ai-tipping-point-what-comes-next/

21. How to Make Enterprise AI Work Through Integration Not Silos – World Economic Forum (2026)

https://www.weforum.org/stories/2026/01/how-to-make-ai-work-in-your-enterprise-through-integration-and-not-silos/

22. NVIDIA: Latest News and Insights – Network World

https://www.networkworld.com/article/3562856/nvidia-latest-news-and-insights.html

23. NStarX Inc. – AI-First Enterprise Transformation

https://nstarxinc.com/