The next landmark company in enterprise technology won’t just sell software. It will deliver outcomes — and NStarX is building exactly that.

Author: NStarX Engineering Leaders

1. Introduction: The Quiet Revolution Nobody Is Talking About Loudly Enough

There is a thunderclap coming for the enterprise IT services industry — and most incumbents are still holding umbrellas.

In March 2026, Sequoia Capital published a deceptively simple thesis: “The next $1T company will be a software company masquerading as a services firm.” The argument is elegant. For every dollar spent on software tools, six dollars are spent on the services that surround them — the analysts, engineers, consultants, and managed services providers who do the actual work. AI is now capable enough to close that gap. Not with tools that assist workers. With systems that do the work.

Sequoia calls it the shift from Copilot to Autopilot — from selling the hammer to delivering the building.

For the global IT engineering services industry, this shift isn’t theoretical. It is underway right now. And the companies that recognize it earliest will capture enormous value. Those that don’t will find their service margins hollowed out by AI-native competitors who deliver outcomes faster, at a fraction of the cost, with compounding improvements baked in.

NStarX was built for this moment.

We are an engineering services company — but increasingly, we are something more. With the NStarX Converged Platform, we are transforming how enterprises consume engineering capability: not as staff augmentation or project-based engagements, but as software-driven, outcome-oriented services. Service as Software. Outcomes on demand. Engineering as a product.

This blog unpacks why this disruption is real, why it matters to you as an enterprise leader, and how NStarX’s platform positions your organization to benefit from — rather than be disrupted by — this shift.

2. Real-World Proof: Companies Already Disrupting the Services Market

The Services-as-Software thesis is not a prediction. It is a pattern already playing out across multiple verticals. Here are five examples where AI-native companies are turning billable hours into automated outcomes:

Legal: Harvey and Crosby

Transactional legal work — NDAs, contract review, regulatory filings — is being automated by Harvey (targeting law firms) and Crosby (targeting the enterprise buyer directly). Crosby doesn’t sell to outside counsel. It sells to the company that needs the NDA drafted, capturing the work budget, not just a productivity tool license.

Accounting: Rillet and Basis

The US has lost 340,000 accountants in five years while demand has grown, with 75% of CPAs nearing retirement. Rillet is building an AI-native ERP that closes the books autonomously. Basis started as a copilot for accountants and is evolving toward a full autopilot. The task being automated — financial close — is already outsourced by most companies and follows rules, making it prime for AI.

Healthcare Revenue Cycle: Anterior

Medical billing and coding means translating clinical notes into standardized codes drawn from the roughly 70,000 entries in ICD-10. It sounds complex, but it follows rules. The outsourcing market here alone is $50–80B. Anterior is building the autopilot that replaces the offshore billing department with an AI-native workflow.

Insurance Brokerage: WithCoverage and Harper

Commercial insurance procurement — gathering requirements, shopping carriers, filling applications — is essentially intelligence work performed by tens of thousands of fragmented brokers. WithCoverage sells directly to the CFO buying the outcome, not the broker wielding the tool.

IT Managed Services: Edra and Serval

Every SMB and mid-market enterprise outsources IT management: patching, monitoring, user provisioning, alert triage. This is pure intelligence work running on repeat. Nobody has yet sold “your IT just runs” as a clean outcome-based product — but Edra and Serval are building exactly that.

The Common Thread

In every case, the disruption follows the same logic: identify a service that is already outsourced, that is intelligence-driven rather than judgment-driven, and that the buyer purchases as an outcome. Replace the human-staffed delivery model with an AI-native one that is faster, cheaper, and continuously improving. The services firm’s margin becomes the AI company’s moat.

3. Autonomous AI and Agentic Systems: How They Will Reshape IT Services

The IT services industry is particularly vulnerable to this disruption because it sits at the intersection of three compounding forces.

The Intelligence-Judgment Shift in Software Engineering

Sequoia’s framework draws a sharp line between intelligence work (tasks with complex but rule-based patterns) and judgment work (decisions requiring accumulated experience and contextual instinct). Software engineering — writing code, testing, debugging, deploying, monitoring — is primarily intelligence work. This is precisely why AI tool adoption in software development is over 50% across all professional categories, while every other profession remains in single digits.

Autonomous agents are now crossing the threshold where they can complete full software engineering tasks end-to-end. As Sequoia notes, “more tasks are started by agents than by humans” in leading development environments today.

What Agentic AI Changes About IT Delivery

Traditional IT services depend on a pyramid: architects at the top, senior engineers in the middle, a wide base of junior engineers and QA staff executing repetitive tasks. Agentic AI inverts this pyramid. Autonomous agents handle the repetitive execution layer — unit testing, code generation from specs, CI/CD pipeline management, infrastructure provisioning, monitoring triage — while human engineers focus on architecture, design decisions, and business alignment.

The implication for IT services is profound: the cost structure of delivery collapses, while the quality floor rises. A ten-person team augmented by agentic AI can deliver what previously required thirty. An AI-native services firm with the right platform can undercut traditional IT services pricing by 40–60% while maintaining or exceeding quality benchmarks — and still be profitable.
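The arithmetic behind that claim can be sketched in a few lines. Only the thirty-versus-ten team sizes and the 40–60% discount range come from the text above; the cost per engineer, the traditional margin, and the platform cost are illustrative assumptions, not NStarX or Sequoia figures.

```python
# Illustrative delivery economics. Every dollar figure and margin below is an
# assumption chosen for the example, not measured data.
COST_PER_ENGINEER = 150_000   # assumed blended annual cost per engineer (USD)
TRADITIONAL_MARGIN = 0.30     # assumed gross margin of a traditional services firm
PLATFORM_COST = 500_000       # assumed annual AI platform and compute cost (USD)


def traditional_price(team_size: int) -> float:
    """Price at which a traditional firm earns TRADITIONAL_MARGIN on labor cost."""
    cost = team_size * COST_PER_ENGINEER
    return cost / (1 - TRADITIONAL_MARGIN)


def ai_native_margin(team_size: int, price: float) -> float:
    """Gross margin of an AI-native firm (labor plus platform cost) at a given price."""
    cost = team_size * COST_PER_ENGINEER + PLATFORM_COST
    return (price - cost) / price


# Baseline: the thirty-person traditional engagement from the text.
baseline = traditional_price(30)

# Price the ten-person, AI-augmented team at each discount in the quoted range.
for discount in (0.40, 0.50, 0.60):
    price = baseline * (1 - discount)
    margin = ai_native_margin(10, price)
    print(f"{discount:.0%} discount: price ${price:,.0f}, gross margin {margin:.0%}")
```

Under these assumed inputs the AI-native margin stays positive across the whole discount range. Different cost assumptions will move the break-even point, so the sketch is best read as a template for plugging in real engagement numbers.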

The Data Moat Compounds Over Time

Every autopilot that Sequoia describes accumulates proprietary data about what good judgment looks like in its domain. The same applies to AI-augmented IT services. Every project NStarX delivers generates data: what worked, what failed, which architectural patterns solved which problems, which integration approaches performed best at scale. That data — embedded in the platform — becomes a compounding moat. Each engagement makes the next one faster, cheaper, and more precise.

4. Which Areas in the Enterprise Are Ripe for Disruption?

Not all IT services are equally vulnerable to disruption. Using Sequoia’s intelligence-vs-judgment and outsourced-vs-insourced framework, here is where we see the highest disruption potential:

1. Application Modernization (High Intelligence Ratio, Heavily Outsourced)

Legacy application modernization — decomposing monoliths, refactoring codebases, migrating to cloud-native architectures — follows well-defined patterns. It is heavily outsourced to system integrators and consulting firms. Most of the work is intelligence-heavy: code analysis, dependency mapping, API design, data migration. AI agents can handle 60–70% of execution tasks autonomously while architects manage design decisions. This is the highest-priority disruption target.

2. Cloud Infrastructure Management (Pure Intelligence, Already Commodity-Outsourced)

Infrastructure provisioning, Kubernetes cluster management, cost optimization, security patching, and monitoring configuration are nearly pure intelligence work. MSPs and cloud consulting firms charge premium rates for work that is increasingly automatable. The buyer wants “my cloud runs well” as an outcome, not a monthly retainer for a team to do it manually.

3. AI/ML Engineering and MLOps (Intelligence-Heavy, Fast-Growing)

Building and deploying AI pipelines — RAG systems, LLM integrations, model fine-tuning, ML monitoring — requires specialized skills that are scarce and expensive. But the engineering patterns are rapidly standardizing. AI-native platforms can accelerate delivery from months to weeks, creating enormous value for enterprises that need AI capabilities but lack in-house expertise.

4. QA and Testing Automation (Rule-Based, High Volume, Easily Outsourced)

Test case generation, regression suite maintenance, performance benchmarking, and security scanning are almost entirely intelligence work. They are widely outsourced to offshore QA firms at scale. AI agents are already replacing entire QA delivery functions with continuous, automated pipelines.

5. Data Engineering and Analytics (Pattern-Heavy, Outsourced at Scale)

ETL pipeline development, data warehouse design, reporting layer construction, and analytics engineering follow repeatable patterns. Data integration — connecting disparate enterprise systems — is a high-cost, high-friction activity that AI agents can accelerate dramatically.

6. DevSecOps and Compliance Automation (Rule-Based, High Stakes)

Security hardening, compliance posture management, audit trail generation, and DevSecOps pipeline configuration follow regulatory rules and industry frameworks. This work is frequently outsourced and is precisely the kind of intelligence-heavy, outcome-oriented service that AI-native delivery can transform.
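The two-axis screen used in this section can be expressed as a small classifier. This is a minimal sketch: the `ServiceArea` type, the `disruption_priority` function, and the three priority labels are hypothetical names introduced for illustration. The flags for the first two areas follow the headings above, and the third entry is an assumed contrast case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceArea:
    name: str
    intelligence_heavy: bool  # mostly rule-based execution rather than judgment
    outsourced: bool          # already bought from an external provider


def disruption_priority(area: ServiceArea) -> str:
    """Place a service area on the intelligence/outsourcing grid."""
    if area.intelligence_heavy and area.outsourced:
        return "high"
    if area.intelligence_heavy or area.outsourced:
        return "medium"
    return "low"


areas = [
    ServiceArea("Application modernization", intelligence_heavy=True, outsourced=True),
    ServiceArea("QA and testing automation", intelligence_heavy=True, outsourced=True),
    # Assumed contrast case: strategy work is judgment-heavy and kept in-house.
    ServiceArea("Enterprise architecture strategy", intelligence_heavy=False, outsourced=False),
]

for area in areas:
    print(f"{area.name}: {disruption_priority(area)}")
```

A real assessment would likely replace the two booleans with graded scores per workload, but the shape of the decision stays the same.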

5. From Naysayers to Adopters: How Enterprises Will Embrace the Shift

History rhymes. Cloud computing faced the same skepticism in 2008 that AI-native services face today. The progression follows a predictable arc:

Phase 1 — Skepticism (“Our data is too sensitive / Our use cases are too unique”)

The first objection is always security and uniqueness. Enterprises claim their requirements are different from everyone else’s. This is rarely true at the infrastructure and execution layer, though it is often true at the strategy and design layer. The AI-native approach doesn’t eliminate human judgment at the design phase — it amplifies it by freeing human experts from execution overhead.

Phase 2 — Experimentation (“Let’s pilot this on a non-critical workload”)

Forward-thinking enterprises run bounded pilots. A single application modernization. A proof-of-concept RAG pipeline. A contained infrastructure optimization exercise. The results consistently surprise them: faster delivery, fewer defects, and measurable cost reduction. The pilot becomes the proof point.

Phase 3 — Internal Advocacy (“Our team saw 40% faster delivery on the AI-assisted sprint”)

Engineering leads become internal champions. The numbers are compelling and visible. Budget holders start asking why the rest of the portfolio isn’t being delivered the same way.

Phase 4 — Institutionalization (“This is how we deliver software now”)

AI-augmented delivery becomes the standard, not the exception. The question shifts from “should we try this?” to “why would we ever do it the old way?”

The accelerant across all phases is the entry-point engagement. NStarX’s Discovery & Architecture Sprint is engineered precisely for this: a low-risk, time-boxed, high-value engagement that demonstrates the platform’s impact on a real problem, generating the internal proof point that triggers Phase 3 and 4 adoption.

6. Defining Success: What Does “Service as Software” Actually Deliver?

Success in a Service-as-Software model is not measured by utilization rates, headcount deployed, or hours billed. It is measured by outcomes. Here is how the success criteria shift:

| Traditional IT Services | Service as Software (NStarX Model) |
|---|---|
| Time & Materials billed | Outcomes delivered against milestones |
| Ramp-up time: 4–8 weeks | Productive from Day 1 (platform-bootstrapped) |
| Knowledge locked in consultants’ heads | Institutional knowledge embedded in platform |
| Quality dependent on individual skill | Quality floor set by platform standards |
| Improvement requires headcount growth | Improvement driven by AI capability compounding |
| Scale requires proportional cost increase | Scale decoupled from cost (AI handles execution) |
| Engagement ends, knowledge walks out | Platform artifacts, ADRs, pipelines persist |

The most critical success metric is Time-to-Value (TTV): how quickly an enterprise sees measurable improvement from an engagement. NStarX’s platform architecture is explicitly designed to compress TTV by delivering pre-configured environments, pre-built integration connectors, and AI-accelerated development pipelines from day one.

Secondary metrics include cost efficiency relative to an equivalent traditional engagement, defect rates, deployment frequency, and architecture decision quality (measured through formalized ADR reviews).
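One way to make these metrics concrete is to compute them from a simple engagement record. The sketch below is an illustration only: the `Engagement` fields, the function names, and the sample values are assumptions, and defect rate is taken here to mean defects per production deployment, which is one of several reasonable definitions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Engagement:
    start: date            # engagement kickoff
    first_value: date      # first measurable improvement delivered
    cost: float            # total engagement cost
    baseline_cost: float   # estimated cost of an equivalent traditional engagement
    defects: int           # defects found during the measurement window
    deployments: int       # production deployments during the measurement window
    window_days: int       # length of the measurement window, in days


def time_to_value_days(e: Engagement) -> int:
    """Days from kickoff to the first measurable improvement."""
    return (e.first_value - e.start).days


def cost_efficiency(e: Engagement) -> float:
    """Fraction saved relative to the traditional baseline (0.25 means 25% cheaper)."""
    return 1 - e.cost / e.baseline_cost


def defects_per_deployment(e: Engagement) -> float:
    """Defect rate, defined here as defects per production deployment."""
    return e.defects / e.deployments


def deployments_per_week(e: Engagement) -> float:
    """Deployment frequency normalized to a seven-day week."""
    return e.deployments / (e.window_days / 7)


# Hypothetical sample values, for illustration only.
sample = Engagement(
    start=date(2026, 1, 5),
    first_value=date(2026, 1, 26),
    cost=400_000,
    baseline_cost=650_000,
    defects=6,
    deployments=48,
    window_days=84,
)

print(time_to_value_days(sample), "days to first value")
print(f"{cost_efficiency(sample):.1%} cheaper than baseline")
print(defects_per_deployment(sample), "defects per deployment")
print(deployments_per_week(sample), "deployments per week")
```

The functions assume at least one deployment and a non-zero baseline cost; a production version would guard those divisions.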

7. The NStarX Service-as-Software Landscape: Platform Meets Delivery

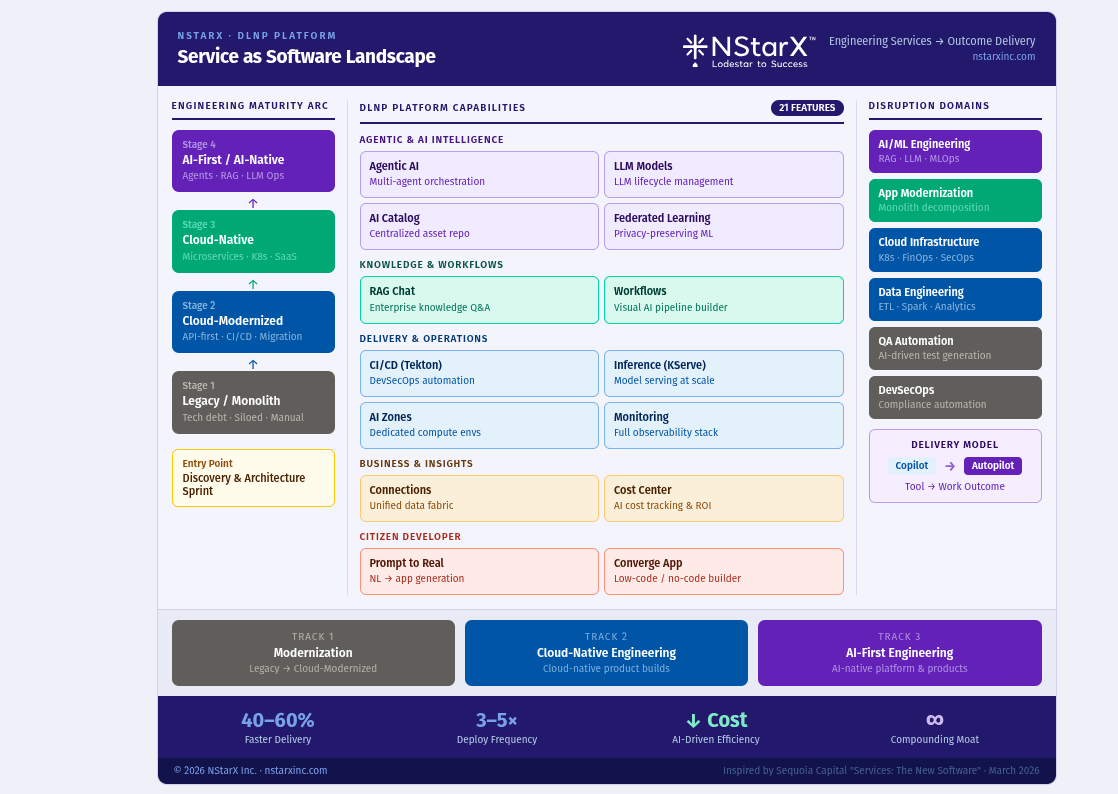

NStarX is uniquely positioned at the intersection of engineering services expertise and an enterprise AI platform. The NStarX Converged (DLNP) Platform is not a standalone product — it is the engine that powers how NStarX delivers services, and increasingly, how enterprises can run their own engineering operations autonomously. Please see the high level reference architecture:

Figure 1: NStarX Service as Software Delivery Architecture

Here is how the platform’s core capabilities map to the Service-as-Software vision:

Agentic AI — The Execution Engine

The platform’s Agentic AI module enables autonomous AI agents and multi-agent systems to handle complex engineering tasks end-to-end. Rather than AI assisting a developer, agents execute tasks — writing code, testing, deploying, monitoring — with human oversight at decision points. This is the core of NStarX’s autopilot capability.

AI Catalog & Artifact Management — Institutional Knowledge as a Product

NStarX’s AI Catalog is a centralized repository of AI assets — models, pipelines, prompt configurations, validated architecture patterns — that accumulates institutional knowledge across every engagement. Each project doesn’t start from zero. It starts from a curated, battle-tested library of reusable AI assets. This is the compounding data moat in practice.

RAG Chat — Conversational Access to Enterprise Knowledge

The RAG Chat system enables Retrieval-Augmented Generation interfaces over enterprise knowledge bases. Rather than a developer spending hours searching documentation or tribal knowledge, they query it conversationally and get precise, contextually grounded answers. This capability alone eliminates an enormous amount of intelligence work that currently occupies expensive engineering hours.
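For readers new to the pattern, the retrieve-then-prompt flow behind any RAG interface can be sketched in plain Python. This is not the NStarX implementation. Production systems typically rank passages with learned embeddings and a vector store, then send the assembled prompt to an LLM; this sketch swaps in word-overlap cosine similarity and stops at prompt assembly. All function names and the three knowledge-base entries are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Ground the question in retrieved context before it is sent to a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge-base entries, for illustration only.
KNOWLEDGE_BASE = [
    "Deployments to production require an approved change ticket.",
    "The payments service exposes a REST API on port 8443.",
    "On-call engineers rotate every Monday at 09:00 UTC.",
]

print(build_prompt("Which port does the payments service use?", KNOWLEDGE_BASE, k=1))
```

The design point is that the model only sees passages selected for the question, which is what keeps answers grounded in the enterprise's own documentation.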

Visual Workflows — AI Pipeline Orchestration Without Code

The Workflows module provides visual AI pipeline orchestration, enabling business and technical teams to design, test, and deploy AI workflows without deep ML engineering expertise. This democratizes AI capability across the enterprise and reduces the specialist bottleneck.

CI/CD with Tekton — DevSecOps as Automated Infrastructure

NStarX’s CI/CD module — built on Tekton — delivers automated pipeline management for every deployment. Security scanning, compliance checks, and deployment automation are baked in. The result: DevSecOps not as a manual process, but as a continuously running automated system.

Cost Center & Monitoring — Outcome Accountability Built In

The Cost Center and Monitoring modules provide real-time visibility into AI infrastructure costs and platform performance. Enterprises can track exactly what their AI platform is spending, where the value is being generated, and where optimization is needed. This is outcome accountability made operational.

Connections — Universal Data Fabric

The Connections module provides unified data source connectivity across enterprise data stores, cloud services, and third-party APIs. This eliminates the integration tax that typically consumes 30–40% of data engineering project timelines.

Prompt to Real & Converge App — Citizen Developer Empowerment

Prompt to Real and the Converge App low-code/no-code builder represent the frontier of NStarX’s platform vision: enabling non-engineering stakeholders to generate functional applications from natural language prompts. This is the furthest evolution of the Service-as-Software model — the service being so embedded in the platform that business users can self-serve.

Federated Learning — Enterprise-Grade Privacy Preservation

NStarX’s Federated Learning capability enables privacy-preserving distributed ML across organizational boundaries, which is critical for regulated industries (financial services, healthcare, government) where data cannot leave controlled environments.

How Enterprises Reap the Benefits

The NStarX platform operationalizes the Service-as-Software model across three tracks aligned to our Engineering Maturity Arc:

Track 1: Modernization — Enterprises on the Legacy-to-Cloud-Modernized arc use the platform’s AI Catalog, Workflows, and CI/CD to accelerate monolith decomposition, cloud migration, and API modernization. Delivery timelines compress by 40–60%. Architecture decisions are documented in platform-embedded ADRs that persist after the engagement.

Track 2: Cloud-Native Product Engineering — Enterprises building net-new cloud-native products use the Agentic AI, RAG, and Connections capabilities to ship faster with smaller teams. The platform handles the execution layer. NStarX architects and engineers handle design and strategy.

Track 3: AI-First Engineering — Enterprises on the AI-Native frontier use the full platform stack — Agentic AI, Federated Learning, LLM lifecycle management, RAG, Workflows — to build AI-native products and internal capabilities. NStarX becomes the engineering partner that builds the capability and the knowledge base simultaneously.

8. The Future: How Real Is This Disruption? Why Enterprises Must Get Ready Now

This disruption is not a ten-year horizon scenario. It is a two-to-three-year window of competitive advantage that is open right now and closing fast.

The Evidence Is Already Compelling

Sequoia’s opportunity map puts IT managed services at $100B+, with supply chain and procurement at $200B+ and recruitment and staffing at $200B+. Software engineering AI adoption is already at 50%+ across professions — ten times higher than any other category. The productivity gains are documented: companies using AI-augmented development are shipping 30–50% faster with smaller teams.

The Compounding Advantage Is Non-Linear

The enterprises that start now don’t just get six months of productivity gains. They get six months of proprietary data, six months of refined AI models, six months of tested and hardened pipelines — all of which compound into a widening capability gap versus those who wait. Early movers in cloud infrastructure (2010–2013) didn’t just save money; they built organizational muscle that late movers spent years trying to replicate.

The Cost of Inaction Is Asymmetric

If you adopt AI-native engineering now and progress turns out to be slower than expected, you have lost a few months of investment. If a competitor adopts it and you have not, you face a structural cost and speed disadvantage that is very difficult to close. The asymmetry strongly favors early adoption.

The Talent Math Doesn’t Work Without AI

The global shortage of senior software engineers, cloud architects, and AI specialists is structural. Universities cannot produce enough graduates. Visa policies are tightening. Competition for talent from hyperscalers and AI labs is intensifying. The only viable path for enterprises that need to build and modernize at scale is to amplify the output of their existing talent with AI — or partner with a firm that has built that amplification into its delivery model.

What the Next Three Years Look Like

By 2027–2028, enterprises that have invested in AI-native engineering platforms will be operating with fundamentally different economics. Their software delivery cost per feature will be 40–60% lower. Their deployment frequency will be 3–5x higher. Their architectural quality will be more consistent and better documented. They will be better positioned to absorb and integrate the next wave of AI capability.

Enterprises that have not made the shift will face pressure from investors, boards, and customers who can see the operational gap. The question will no longer be “should we adopt AI-native engineering?” It will be “why haven’t you?”

9. Conclusion: The Autopilot Era for Enterprise IT Is Here

Sequoia’s thesis is both a market observation and a call to action: the next legendary companies will not sell tools that help services firms do their work more efficiently. They will sell the work itself — delivered by AI-native systems, continuously improving, and captured in platforms that compound over time.

For the enterprise IT services industry, this creates a clear fork in the road. One path leads toward continued reliance on human-staffed delivery models that are constrained by talent scarcity, geographic arbitrage, and utilization economics. The other path leads toward AI-native delivery — where platforms replace execution overhead, human experts focus on judgment and strategy, and every engagement generates data that makes the next one better.

NStarX has chosen the second path — and we have built the platform to prove it.

The Converged Platform is our expression of Service as Software: a system that embeds institutional knowledge, accelerates engineering delivery through agentic AI, automates the intelligence-heavy execution layer, and gives enterprise leaders real-time visibility into outcomes and costs. It is the infrastructure of an AI-native engineering services firm — available to enterprises as a partnership, not just a license.

The question for every enterprise technology leader is simple: Will you be a beneficiary of this disruption, or a casualty of it?

The window to get ahead of it is open. NStarX is ready to help you walk through it.

Ready to start? Engage NStarX for a Discovery & Architecture Sprint — a time-boxed, low-risk entry point that delivers a concrete architecture roadmap, an AI readiness assessment, and a working proof-of-concept on your real environment. It’s the fastest path from curiosity to conviction.

10. References and Further Reading

- Services: The New Software — Sequoia Capital (Julien Bek, March 2026)

- 2026: This Is AGI — Sequoia Capital (Pat Grady & Sonya Huang)

- NStarX Inc. Website

- Generative AI’s Act o1 — Sequoia Capital

- Harvey AI — Legal AI Platform (Transactional Legal Work Autopilot)

- Crosby — AI-Native Legal Services (NDA and Contract Autopilot)

- Rillet — AI-Native ERP and Accounting Close Automation

- Anterior — AI Platform for Healthcare Revenue Cycle Automation

- WithCoverage — AI-Native Commercial Insurance Brokerage

- Edra — Agentic IT Process Automation for MSPs

- AICPA Talent Pipeline Report — CPA Shortage and Workforce Trends

- GitHub Copilot Productivity Research — AI-Augmented Developer Studies

- McKinsey Global Institute: The Economic Potential of Generative AI

- KServe Model Serving Documentation (referenced in NStarX Inference Model)

- Tekton CI/CD Documentation (referenced in NStarX CI/CD module)

- The Opening, Midgame and Endgame in Startups — Sequoia Capital (David Cahn)