01 · Introduction: The Shift That Changes Everything

There’s a moment in the evolution of every transformative technology when it stops being a productivity aid and starts becoming infrastructure. The personal computer didn’t matter when it was a faster typewriter. It mattered when it became the operating environment for the modern enterprise. The cloud didn’t matter when it was cheaper hosting. It mattered when it became the default architecture for most new software systems built since around 2010.

AI is approaching that same inflection point — not because of a single model breakthrough, but because of a structural change in how AI is deployed. We are moving from AI as a responder to AI as an operator. The implications for how enterprises are structured, staffed, and run are profound.

To understand where we are headed, it helps to trace the lineage honestly.

The Four Phases of Enterprise AI

- Phase 01: Chatbots (2016–2021) — Rule-based conversational interfaces that handled narrow use cases — FAQ deflection, basic service routing, scripted interactions. They were useful at the margins but brittle at the core. One edge case and the conversation fell apart.

- Phase 02: Copilots (2022–2024) — Large language models unlocked a new category: AI that could assist a human in real-time, drafting documents, summarizing data, generating code, offering analysis. But copilots were fundamentally reactive. They waited for a human to ask. They produced suggestions, not outcomes.

- Phase 03 (Now): Agents — Agents don’t wait to be asked. They perceive a goal, decompose it into steps, take actions against real systems, observe the results, adapt, and complete the task — with or without a human in the loop at each step.

- Phase 04 (Coming): Autonomous Systems — The full realization of AI as enterprise operating infrastructure.

The question is no longer whether AI can help your people work better. The question is whether AI can do the work itself — securely, compliantly, and at enterprise scale. — NStarX Enterprise AI Strategy Framework, 2026

This is where platforms like NemoClaw — the enterprise-grade agent orchestration layer built on NVIDIA’s NeMo ecosystem — enter the picture. NemoClaw is not a chatbot. It is not a copilot wrapper. It is an enterprise agentic runtime: a platform that connects LLMs to enterprise systems, orchestrates multi-agent workflows, enforces governance, operates securely inside your infrastructure boundary, and executes the kind of knowledge work that currently consumes thousands of hours of human labor every month.

The leaders who understand this shift early will have a genuine structural advantage. Those who treat it as another software procurement decision will find themselves explaining, eighteen months from now, why their operating costs haven’t moved.

02 · Problem Statement: The Broken Reality of Enterprise AI Today

Here is a scenario most enterprise technology leaders will recognize: Your organization has approved fifteen AI initiatives over the past two years. You have copilot deployments, vendor-managed LLM integrations, internal prompt engineering experiments, and a data science team building custom models. You have invested millions. And yet, your operations are running on roughly the same workflows they used three years ago.

This is not a technology failure. It is a structural failure, and it is nearly universal. Industry surveys consistently suggest that fewer than one in five enterprise AI pilots reach production. Understanding why requires looking honestly at the actual barriers enterprises face.

The Nine Core Barriers

- Pilots That Never Scale: AI proof-of-concepts succeed in controlled environments and fail when they meet real enterprise data complexity, integration requirements, and security policies.

- Tool Sprawl: The average large enterprise now has dozens of AI tools — most of which don’t communicate with each other, creating a new layer of operational chaos on top of existing complexity.

- Data Silos: Enterprise knowledge is fragmented across CRM, ERP, legacy databases, document stores, emails, and SharePoint instances. AI that can’t see the full picture gives dangerously incomplete answers.

- Manual Workflows: High-value knowledge workers spend 30–40% of their time on repeatable digital tasks — data entry, report generation, ticket routing, status updates, and approval chasing.

- Knowledge Trapped in Documents: Institutional expertise lives in PDFs, contracts, SOPs, and emails that are never surfaced at the moment they are needed — and disappear when the author leaves.

- Swivel-Chair Operations: Employees routinely copy data between systems because integration was never built. This is expensive human labor doing machine work.

- Integration Complexity: Enterprise systems — SAP, Salesforce, ServiceNow, Workday, and custom legacy platforms — each have their own API paradigms, authentication models, and data schemas.

- Security and Privacy Risk: Most commercial AI tools require sending data to third-party clouds. For regulated industries, this is a non-starter without significant architectural investment.

- No Orchestration Layer: There is no enterprise-grade system for coordinating multiple AI tools toward a common workflow. Each tool operates in isolation, and humans are the connective tissue between them.

Taken individually, each of these problems is solvable. Taken together, they form a reinforcing system that prevents enterprise AI from moving beyond the innovation theater stage. The real problem isn’t AI capability. The real problem is the missing infrastructure layer between LLM capability and enterprise operational reality.

03 · Platform Value: What NemoClaw Actually Solves

Enterprise agent platforms like NemoClaw represent a fundamentally different architectural approach to deploying AI in production environments. Rather than wrapping an LLM in a chat interface and calling it enterprise-ready, NemoClaw is designed from the ground up for the operational, security, and integration requirements that separate real enterprise deployments from sophisticated demos.

Autonomous Agents That Execute, Not Just Advise

NemoClaw agents are not suggestion generators. They are configured with a goal, given access to defined enterprise systems and tools, and tasked with completing multi-step workflows autonomously. A procurement agent doesn’t just tell a buyer what to do — it searches the vendor catalog, compares pricing against contract terms, generates the purchase request, routes it through the approval chain, and updates the ERP once approved.
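The execute-not-advise loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not NemoClaw’s API. The step functions (`search_catalog`, `check_contract`, `create_request`, `route_for_approval`, `update_erp`), the vendor quotes, and the contract ceiling are all hypothetical stubs standing in for real catalog, contract, and ERP calls. The approval step is simulated rather than waiting on a person, and "adapt" is simplified to stopping and escalating when an observation fails a check.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StepResult:
    ok: bool
    data: dict = field(default_factory=dict)
    note: str = ""


# Hypothetical tools standing in for real catalog, contract, and ERP calls.
def search_catalog(state: dict) -> StepResult:
    offers = {"acme": 980.0, "globex": 1040.0}  # invented vendor quotes
    vendor = min(offers, key=offers.get)
    return StepResult(True, {"vendor": vendor, "price": offers[vendor]})


def check_contract(state: dict) -> StepResult:
    ceiling = 1000.0  # assumed contract price ceiling
    if state["price"] > ceiling:
        return StepResult(False, note=f"price {state['price']} exceeds ceiling {ceiling}")
    return StepResult(True)


def create_request(state: dict) -> StepResult:
    return StepResult(True, {"request_id": "PR-0001"})


def route_for_approval(state: dict) -> StepResult:
    # Simulated approval; a real deployment would pause here for a person.
    return StepResult(True, {"approved": True})


def update_erp(state: dict) -> StepResult:
    if not state.get("approved"):
        return StepResult(False, note="ERP update attempted before approval")
    return StepResult(True, {"erp_updated": True})


def run_agent(goal: str, plan: list[Callable[[dict], StepResult]]) -> dict:
    state: dict = {"goal": goal, "log": []}
    for step in plan:
        result = step(state)  # act against a (stubbed) system
        state["log"].append((step.__name__, result.ok, result.note))
        if not result.ok:  # observe: stop and escalate rather than guess
            state["status"] = "escalated"
            return state
        state.update(result.data)  # carry observations into later steps
    state["status"] = "completed"
    return state


outcome = run_agent(
    "buy one server rack",
    [search_catalog, check_contract, create_request, route_for_approval, update_erp],
)
print(outcome["status"], outcome["vendor"], outcome["request_id"])  # completed acme PR-0001
```

Returning an `escalated` status instead of improvising is a deliberately conservative choice. A production agent might re-plan or try an alternative vendor before handing off.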

Deep Integration with Enterprise Systems

Enterprise environments run on SAP, Oracle, Salesforce, ServiceNow, Workday, SharePoint, and dozens of industry-specific platforms. NemoClaw’s integration architecture treats these as first-class tool endpoints, not afterthoughts accessed through fragile API patches. A NemoClaw agent connecting to your CRM can be given the same kind of contextual understanding of that system that an experienced operations analyst brings to it.

Secure Deployment Inside Your Infrastructure Boundary

NemoClaw is architected for deployment inside enterprise VPCs, private clouds, and on-premises environments — meaning your data, your queries, and your agent behaviors never traverse a third-party cloud. This isn’t a configuration option. It’s a first-class deployment model that allows organizations in financial services, healthcare, and defense to adopt agentic AI without compromising their data governance posture.

Multi-Agent Orchestration

The most powerful enterprise workflows involve multiple specialized agents working in parallel or sequence: a research agent gathering market data, an analysis agent synthesizing insights, a writing agent drafting the deliverable, and a routing agent sending it to the right distribution list. NemoClaw’s orchestration layer manages agent-to-agent communication, task delegation, state management, and conflict resolution — without requiring human coordination at every handoff.
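A toy orchestrator makes the handoff mechanics concrete. This sketch rests on assumptions and does not represent NemoClaw’s orchestration layer. Each hypothetical agent is a plain function that reads a copy of shared state and returns new fields. Agents in the same stage run in parallel via `concurrent.futures.ThreadPoolExecutor`, and stages run in sequence. The only "conflict resolution" is a refusal to let one agent overwrite a field that another agent has already written.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical specialist agents: each reads shared state and returns new fields.
def research_market(state: dict) -> dict:
    return {"market": {"region": state["region"], "growth_pct": 4.2}}


def research_competitors(state: dict) -> dict:
    return {"competitors": ["Globex", "Initech"]}


def analyze(state: dict) -> dict:
    m = state["market"]
    n = len(state["competitors"])
    return {"insight": f"{m['region']} grew {m['growth_pct']}% with {n} key competitors"}


def write_brief(state: dict) -> dict:
    return {"brief": f"Briefing: {state['insight']}."}


def route(state: dict) -> dict:
    return {"recipients": ["strategy-team@example.com"]}


def orchestrate(state: dict, stages: list[list]) -> tuple[dict, list[str]]:
    """Run stages in order; agents inside one stage run in parallel."""
    handoffs = []
    for stage in stages:
        with ThreadPoolExecutor() as pool:
            # Each agent gets its own copy, so parallel agents cannot see each other's writes.
            outputs = list(pool.map(lambda agent: agent(dict(state)), stage))
        for agent, out in zip(stage, outputs):
            clash = set(out) & set(state)
            if clash:  # naive conflict rule: never overwrite a field already written
                raise ValueError(f"{agent.__name__} tried to overwrite {sorted(clash)}")
            state.update(out)
            handoffs.append(agent.__name__)
    return state, handoffs


final, trail = orchestrate(
    {"region": "EMEA"},
    [[research_market, research_competitors], [analyze], [write_brief], [route]],
)
print(final["brief"])  # Briefing: EMEA grew 4.2% with 2 key competitors.
```

The `handoffs` list doubles as a simple record of which agent contributed what, in order. A real orchestrator would also persist state between steps so a failed stage can be retried.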

Knowledge Work Automation at Scale

Enterprise knowledge work — report generation, compliance documentation, customer communications, internal briefings, technical analysis — represents an enormous proportion of white-collar labor cost. NemoClaw agents handle high-volume, repeatable knowledge work at a fraction of the cost and in a fraction of the time, while routing genuinely novel or high-stakes work back to human experts.

The shift from copilot to agent is the shift from AI that answers questions to AI that completes objectives. That is a fundamentally different operating model — and a fundamentally different business case.

The Digital Workforce Layer

NemoClaw creates a digital workforce layer that sits between your enterprise systems and your human workforce. It handles the high-volume, rule-intensive, data-heavy work that currently occupies a disproportionate share of your most expensive employees’ time. It doesn’t replace your people. It frees them to do the work that actually requires human judgment, relationship management, and creative problem-solving.

04 · Market Disruption: Why This Disrupts Traditional Enterprise Software

Enterprise software has been built on a consistent architectural assumption for thirty years: humans interact with interfaces, interfaces present data, humans make decisions, and humans execute actions. The entire SaaS business model is predicated on this assumption. You pay per seat because each seat represents a human user who needs access to your system.

AI agents break this assumption at the foundation. When an agent can interact with a system through its API with the same sophistication as a trained human operator, the interface becomes secondary. The seat-based pricing model becomes anachronistic. The entire category logic of enterprise software shifts.

SaaS Interfaces Replaced by Conversational and Agentic Interfaces

Consider what a finance team currently does with its ERP system: navigate menus, pull reports, enter data, reconcile figures, export to Excel. A NemoClaw-powered finance agent takes a natural language objective, such as ‘Produce the Q1 variance analysis, flag any line item more than 15% over budget, and draft the CFO briefing document’, and executes the entire workflow without a single UI interaction.
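The threshold step in that objective is simple arithmetic: variance = (actual - budget) / budget, and a line item is flagged when the variance exceeds 0.15. The sketch below assumes the budget and actual figures have already been pulled from the ERP into dictionaries. The line items and amounts are invented for illustration.

```python
def flag_variances(budget: dict[str, float], actual: dict[str, float],
                   threshold: float = 0.15) -> list[tuple[str, float]]:
    """Return (line item, % over budget) for items more than `threshold` over."""
    flagged = []
    for item, planned in budget.items():
        if planned <= 0:
            continue  # no positive budget to compare against; leave for human review
        spent = actual.get(item, 0.0)
        variance = (spent - planned) / planned
        if variance > threshold:
            flagged.append((item, round(variance * 100, 1)))
    # Largest overruns first, which is the order a CFO briefing would list them.
    return sorted(flagged, key=lambda x: x[1], reverse=True)


q1_budget = {"travel": 120_000, "cloud": 300_000, "contractors": 250_000, "software": 80_000}
q1_actual = {"travel": 150_000, "cloud": 330_000, "contractors": 240_000, "software": 96_000}
print(flag_variances(q1_budget, q1_actual))  # [('travel', 25.0), ('software', 20.0)]
```

Cloud spend is 10% over and is not flagged. An item exactly 15% over is also not flagged, matching "more than 15%".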

Automation Replacing Swivel-Chair Operations

One of the most expensive hidden costs in enterprise operations is the manual movement of data between systems that don’t talk to each other. An agent executes these multi-system data workflows in seconds, never forgets a step, never mis-keys a record, and never gets tired at 4:45 PM on a Friday.

AI Becoming an Operator, Not Just an Assistant

The strategic inflection point arrives when AI stops assisting the operator and becomes the operator. In shared services organizations (the finance operations, HR operations, IT help desk, and procurement teams that execute high-volume transactional work), agents can handle tier-one and tier-two workloads autonomously. Tier-three escalations reach humans faster because agents have already triaged, documented, and contextualized the issue.

Enterprise Knowledge Becoming Executable

Your company’s pricing policies, compliance rules, escalation procedures, and contractual terms currently exist as documents that humans must read, interpret, and apply. An agent grounded in that knowledge base does not just read the policy; it operationalizes it. Because the policies are part of its operating context, the agent applies them at the moment of each decision instead of depending on someone remembering them.

Impact on Shared Services and Core Functions

In IT support, agents handle password resets, access provisioning, incident triage, and knowledge base maintenance, reducing tier-one ticket volume by 40–60% in mature deployments. In finance, agents automate accounts payable, reconciliation, and financial reporting preparation. In HR, agents handle onboarding coordination, benefits queries, and policy lookups. In sales, agents automate CRM hygiene, pipeline updates, and proposal generation. These are structural reductions in operational cost with simultaneous improvements in throughput and accuracy.

05 · Persona Adoption: A Platform for Every Persona

Enterprise technology adoption lives or dies on whether the people it affects can see themselves in the use case. Abstract benefits don’t drive change management. Specific, role-relevant value does.

CIO — Technology Strategy

The Problem: Managing a portfolio of disconnected AI tools that don’t integrate, don’t scale, and don’t have consistent governance. Every team has a different AI vendor and a different security posture.

NemoClaw Value: A single enterprise agentic platform that runs securely inside the corporate infrastructure boundary, integrates with existing IAM and data governance frameworks, and provides a consistent governance layer across all AI deployments. The CIO moves from managing AI chaos to managing an AI platform.

CTO — Engineering & Architecture

The Problem: Engineering teams spend disproportionate time on scaffolding, documentation, code review, and incident response — work that has high volume but low differentiation.

NemoClaw Value: Engineering agents that handle PR review triage, automated test generation, runbook-based incident diagnosis, release note drafting, and API documentation. Engineers focus on architecture and complex problem-solving; agents handle operational volume. Sprint velocity increases without headcount increases.

Head of Operations — Operations & Service Delivery

The Problem: Operations teams run on manual processes that are high-touch, error-prone, and expensive to scale. Every process improvement requires headcount or a multi-year IT project.

NemoClaw Value: Agents that execute defined operational workflows end-to-end — data gathering, processing, routing, escalation, and documentation — without human coordination at each step. Teams shift from doing work to supervising agents doing work.

Finance Team — FP&A · Accounting · Treasury

The Problem: Month-end close, variance analysis, board reporting prep, and audit preparation consume enormous analyst time on work that is largely procedural but highly sensitive.

NemoClaw Value: Finance agents that pull data from ERP, run configured analyses, generate draft reports and commentary, flag anomalies against thresholds, and prepare CFO briefing packages. The close process accelerates from days to hours.

Sales Team — Revenue · GTM · Account Management

The Problem: Sales reps spend fewer than 35% of their time selling. The rest goes to CRM updates, proposal preparation, internal coordination, research, and administrative tasks.

NemoClaw Value: Sales agents that maintain CRM hygiene automatically after calls, research target accounts and generate personalized outreach sequences, prepare meeting briefs, draft proposal sections, and update pipeline status based on email signals.

Engineers — Software · Platform · Data

The Problem: Talented engineers are frequently blocked by context-switching between coding, documentation, testing, and operational responsibilities that fragment deep work.

NemoClaw Value: Development agents that generate unit tests from code context, maintain and update technical documentation, respond to routine support escalations using runbooks, draft release notes, and surface relevant internal knowledge during investigation.

Analysts — Strategy · BI · Research

The Problem: Analysts spend 60–70% of their time on data gathering, cleaning, and formatting — the unglamorous upstream work that consumes capacity before any real analysis begins.

NemoClaw Value: Research and analysis agents that aggregate data from multiple enterprise and external sources, perform configured analyses, generate structured insights in defined formats, and surface relevant historical context from document repositories.

Customer Support — CX · Service · Success

The Problem: Support teams handle high volumes of repetitive tier-one queries while also managing complex escalations that require deep product and account context.

NemoClaw Value: Support agents that handle tier-one queries fully autonomously, perform account lookups, process routine requests, and escalate complex issues to humans with full context already documented. Resolution times drop and CSAT improves.

HR — People Operations · Talent

The Problem: HR teams manage high volumes of policy queries, onboarding workflows, benefits administration, and compliance documentation — work that is essential but resource-intensive.

NemoClaw Value: HR agents that handle employee policy queries instantly, coordinate onboarding task sequences across IT and facilities, process benefits change requests, and maintain compliance documentation. HR professionals focus on talent strategy and culture.

06 · Data Security & Architecture: Your Data Is Your IP — Protect It

The single most common reason enterprise AI deployments stall or fail security review is this: the default architecture for most AI tools requires sending enterprise data to a vendor-managed cloud environment. For organizations whose competitive advantage lives in their data, this is not an acceptable trade-off.

Private Deployment — Data Never Leaves Your Boundary

Enterprise-grade platforms like NemoClaw support deployment inside corporate VPCs, private cloud environments, and on-premises data centers. The LLM runtime, the agent orchestration layer, and all data processing occur within the enterprise network boundary. No query content, no retrieved documents, and no agent outputs traverse a third-party network. This is the foundational requirement for regulated industries.

Retrieval-Augmented Generation (RAG) — Ground AI in Your Knowledge

RAG architecture keeps the LLM’s reasoning capability separate from the enterprise knowledge base. At query time, relevant documents and data snippets are retrieved from a secure, access-controlled vector store and injected into the model’s context. The LLM reasons over your data without absorbing it into model weights. The knowledge base remains auditable, updatable, and governed independently of the model.
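The retrieve-then-inject pattern can be sketched using only the standard library. To keep the example self-contained, a bag-of-words cosine similarity stands in for an embedding model and vector store. Each hypothetical `Doc` carries the set of groups allowed to see it. Filtering on those groups happens before ranking, so documents a user cannot access never become candidates for the prompt. The documents, group names, and prompt wording are illustrative assumptions.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset


def vectorize(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list, user_groups: set, k: int = 2) -> list:
    q = vectorize(query)
    visible = [d for d in docs if d.allowed_groups & user_groups]  # access control first
    scored = [(d.doc_id, cosine(q, vectorize(d.text))) for d in visible]
    return sorted((s for s in scored if s[1] > 0), key=lambda s: s[1], reverse=True)[:k]


def build_prompt(query: str, hits: list, docs: list) -> str:
    by_id = {d.doc_id: d for d in docs}
    # Source ids travel with the text so the answer can cite them.
    context = "\n".join(f"[{doc_id}] {by_id[doc_id].text}" for doc_id, _ in hits)
    return f"Answer using only the sources below and cite them by id.\n{context}\n\nQuestion: {query}"


docs = [
    Doc("POL-7", "Travel expenses above 5000 require CFO approval", frozenset({"finance", "all-staff"})),
    Doc("HR-2", "Salary bands for engineering roles", frozenset({"hr"})),
    Doc("IT-4", "Password resets are handled by the service desk", frozenset({"all-staff"})),
]
query = "who approves travel expenses"
hits = retrieve(query, docs, {"all-staff"})
print(build_prompt(query, hits, docs))
```

The documents enter only the prompt text. Updating or revoking a document therefore changes what the model sees on the next query without any retraining.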

Role-Based Access Control (RBAC) and Data Entitlement

An agent operating on behalf of a user should have access to exactly the systems and data that the user is authorized to access — and nothing more. NemoClaw’s RBAC architecture propagates user-level entitlements into every agent action. Access governance is not a post-hoc addition — it is native to the agent execution model.
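One simple way to model entitlement propagation is to compute an agent’s effective rights as the intersection of its configured scope and the invoking user’s entitlements. This approach is assumed here for illustration and is not taken from NemoClaw’s documentation. The `Permission` type and the system names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Permission:
    system: str
    operation: str  # e.g. "read" or "write"


def effective_permissions(agent_scope: set, user_entitlements: set) -> set:
    # An agent acting for a user never exceeds its own scope or the user's rights.
    return agent_scope & user_entitlements


def invoke(system: str, operation: str, agent_scope: set, user_entitlements: set) -> str:
    if Permission(system, operation) not in effective_permissions(agent_scope, user_entitlements):
        raise PermissionError(f"{operation} on {system} denied for this user/agent pair")
    return f"{operation} on {system} executed"  # placeholder for the real system call


agent_scope = {Permission("crm", "read"), Permission("crm", "write"), Permission("erp", "read")}
analyst = {Permission("crm", "read"), Permission("erp", "read"), Permission("erp", "write")}

print(invoke("crm", "read", agent_scope, analyst))  # read on crm executed
```

A CRM write is refused because this user lacks it, and an ERP write is refused because the agent lacks it. Neither party can lend the other rights it does not hold.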

Federated Learning — Intelligence Without Data Centralization

For enterprises with distributed data environments — multi-region, multi-entity, or involving external partner data — federated learning allows model improvement to occur locally, with only model parameter updates (not raw data) shared across nodes. Organizations in healthcare and financial services can build increasingly capable AI models without ever centralizing sensitive records.
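Federated averaging (FedAvg) is one widely used aggregation scheme and is shown here only as an example. The text above does not specify which scheme a given deployment would use. In this minimal sketch, two hypothetical sites fit a one-parameter linear model y ≈ w·x on their own data. Only the updated parameter `w` and a sample count are sent to the aggregator, which takes a sample-weighted average.

```python
def local_update(w: float, data: list[tuple[float, float]], lr: float = 0.1) -> float:
    # Plain SGD on squared error for y ≈ w * x; this runs where the data lives.
    for x, y in data:
        w -= lr * 2 * (w * x - y) * x
    return w


def fed_avg(updates: list[tuple[float, int]]) -> float:
    # Aggregator: average the returned parameters, weighted by each site's sample count.
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


# Raw records stay inside each site; only (w, sample_count) pairs leave.
sites = {
    "hospital_a": [(1.0, 2.0), (1.5, 3.0)],
    "hospital_b": [(0.5, 1.0)],
}
w_global = 0.0
for _ in range(20):
    updates = [(local_update(w_global, data), len(data)) for data in sites.values()]
    w_global = fed_avg(updates)
print(round(w_global, 3))  # 2.0, the slope underlying every site's data
```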

Confidential Computing and Encryption

Confidential computing environments (hardware-enforced trusted execution environments) protect data in use, not just at rest and in transit. Sensitive queries and document retrievals can be processed in memory environments that are cryptographically isolated even from the infrastructure operator. Combined with end-to-end encryption of all agent communication channels, this narrows the primary attack surfaces for data exfiltration.

Audit Logging and Model Governance

Every agent action — every system call, every data retrieval, every output generated — should produce an immutable audit log entry. Beyond compliance, audit logs are the operational intelligence layer that allows governance teams to understand what agents are doing, identify anomalies, and demonstrate regulatory due diligence.
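Immutability is usually enforced by the storage layer (for example, write-once storage). Hash chaining is a common way to make tampering detectable. In the sketch below, which illustrates the technique and does not reproduce any product’s log format, each entry stores the SHA-256 hash of the previous entry. Editing any past record therefore breaks verification of the chain. Field names such as `agent` and `action` are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64


def _digest(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: editing any past entry breaks verify()."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent: str, action: str, detail: dict) -> dict:
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        record["hash"] = _digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("finance-agent", "erp.read", {"report": "Q1 variance"})
log.append("finance-agent", "email.draft", {"to": "cfo"})
print(log.verify())  # True
log.entries[0]["detail"]["report"] = "edited after the fact"
print(log.verify())  # False
```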

07 · Deployment Safety: Guardrails Before You Let Agents Run

Autonomous agents are powerful precisely because they can take real actions in real systems. That capability is also exactly why deploying them without a comprehensive guardrail architecture is professionally reckless. The question is not whether to implement guardrails — it is how to implement them without eliminating the operational value that makes agents worth deploying in the first place.

Human-in-the-Loop Approvals

The deployment architecture must define, for each agent capability, whether actions are: fully autonomous (execute without human review), supervised (execute but log for human review), or approval-gated (pause for explicit human authorization). High-value financial transactions, external communications, personnel actions, and irreversible system changes should default to approval-gated. Routine data retrieval and internal report generation can be fully autonomous.
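The three autonomy modes map naturally onto a small policy table. The category names and the `execute` helper below are hypothetical. The mapping follows the defaults described above. Treating any unclassified action as approval-gated is an added assumption in the spirit of deny-by-default.

```python
from enum import Enum
from typing import Callable, Optional


class Autonomy(Enum):
    AUTONOMOUS = "execute without human review"
    SUPERVISED = "execute, then log for human review"
    APPROVAL_GATED = "pause for explicit human authorization"


# Hypothetical action categories mapped to the defaults described in the text.
POLICY = {
    "financial_transaction": Autonomy.APPROVAL_GATED,
    "external_communication": Autonomy.APPROVAL_GATED,
    "personnel_action": Autonomy.APPROVAL_GATED,
    "irreversible_change": Autonomy.APPROVAL_GATED,
    "internal_record_update": Autonomy.SUPERVISED,
    "data_retrieval": Autonomy.AUTONOMOUS,
    "internal_report": Autonomy.AUTONOMOUS,
}


def autonomy_for(category: str) -> Autonomy:
    # Unclassified actions fall back to the most restrictive mode.
    return POLICY.get(category, Autonomy.APPROVAL_GATED)


def execute(category: str, action: Callable[[], object],
            approver: Optional[Callable[[str], bool]] = None) -> dict:
    mode = autonomy_for(category)
    if mode is Autonomy.APPROVAL_GATED and (approver is None or not approver(category)):
        return {"status": "awaiting_approval", "mode": mode.name}
    output = action()
    return {
        "status": "done",
        "mode": mode.name,
        "needs_review": mode is Autonomy.SUPERVISED,  # supervised runs go to a review queue
        "output": output,
    }


print(execute("internal_report", lambda: "report.pdf")["status"])       # done
print(execute("financial_transaction", lambda: "wire sent")["status"])  # awaiting_approval
```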

Action Authorization Frameworks

Agents should have a clearly defined permission scope covering: which systems they can access, which operations (read vs. write vs. delete) they can perform on each system, under what conditions they can escalate their own permissions, and who can modify their permission scope. This applies the principle of least privilege to agent capabilities.
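A permission scope of this kind can be expressed as an explicit grant table with deny-by-default checks. In this sketch, all names are hypothetical. An agent’s scope lists the allowed operations per system, and only a designated owner can widen it, so an agent cannot escalate its own permissions.

```python
from dataclasses import dataclass, field


@dataclass
class AgentScope:
    agent: str
    owners: frozenset  # principals allowed to modify this scope
    grants: dict = field(default_factory=dict)  # system -> set of allowed operations

    def allows(self, system: str, operation: str) -> bool:
        # Deny by default: anything not explicitly granted is refused.
        return operation in self.grants.get(system, set())

    def grant(self, requested_by: str, system: str, operation: str) -> None:
        # Agents cannot widen their own scope; only a designated owner can.
        if requested_by not in self.owners:
            raise PermissionError(f"{requested_by} may not modify the scope of {self.agent}")
        self.grants.setdefault(system, set()).add(operation)


scope = AgentScope(
    "ap-agent",
    owners=frozenset({"finance-platform-owner"}),
    grants={"erp": {"read"}, "email": {"read", "write"}},
)
print(scope.allows("erp", "read"), scope.allows("erp", "delete"))  # True False
```

In practice, each `grant` call would itself be routed through the change-control process and written to the audit log.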

Prompt Injection and Adversarial Input Protection

Prompt injection, where malicious content in external data sources attempts to override the agent’s instructions, is a real and underappreciated attack surface in agentic systems. NVIDIA NeMo Guardrails provides a programmable policy framework, including input and retrieval rails, for screening this class of risk.

Output Validation and Hallucination Mitigation

For agents operating in contexts where accuracy has operational or legal consequences — financial analysis, compliance documentation, customer-facing communications — output validation pipelines are essential. This includes grounding checks, consistency checks, and human review triggers for outputs above defined risk thresholds.
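A grounding check can be as simple as confirming that every figure stated in an output appears in the retrieved sources. The sketch below is a deliberately narrow heuristic, assumed for illustration. It extracts numbers with a regular expression and reports any that no source contains. It routes the output to a human if there are unsupported figures or if a hypothetical `risk_score` crosses a threshold. Derived figures such as computed percentages would also be flagged, which errs toward human review.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def numbers_in(text: str) -> set:
    # Drop thousands separators so "150,000" and "150000" compare equal.
    return set(NUMBER.findall(text.replace(",", "")))


def grounding_check(output: str, sources: list) -> set:
    """Numbers stated in the output that appear in none of the sources."""
    supported = set().union(*(numbers_in(s) for s in sources))
    return numbers_in(output) - supported


def review_decision(output: str, sources: list, risk_score: float,
                    risk_threshold: float = 0.7) -> tuple:
    unsupported = grounding_check(output, sources)
    route_to_human = bool(unsupported) or risk_score >= risk_threshold
    return route_to_human, unsupported


sources = ["Q1 travel spend was 150,000 against a budget of 120,000."]
print(review_decision("Travel spend reached 175,000 in Q1.", sources, risk_score=0.2))
# (True, {'175000'})
```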

Monitoring, Incident Response, and AI Safety Policies

Agents in production require observability infrastructure covering: task completion rates, anomaly detection in agent behavior patterns, latency and cost per agent execution, and output quality metrics. An incident response plan specific to AI agents should define the escalation path, rollback procedures, and communication protocols for scenarios where agent behavior produces unintended outcomes.
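The metrics listed above can be rolled up per agent from execution records. In this sketch, the `Run` record, the thresholds, and the agent names are assumptions. Fixed thresholds stand in for more sophisticated anomaly detection. The p95 latency uses `statistics.quantiles` with the inclusive method so that the estimate stays within the observed range.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Run:
    agent: str
    completed: bool
    latency_s: float
    cost_usd: float


def summarize(runs: list) -> dict:
    by_agent = defaultdict(list)
    for r in runs:
        by_agent[r.agent].append(r)
    report = {}
    for agent, rs in by_agent.items():
        latencies = [r.latency_s for r in rs]
        # quantiles() needs at least two points on older Pythons.
        p95 = quantiles(latencies, n=20, method="inclusive")[-1] if len(rs) > 1 else latencies[0]
        report[agent] = {
            "runs": len(rs),
            "completion_rate": sum(r.completed for r in rs) / len(rs),
            "p95_latency_s": p95,
            "avg_cost_usd": sum(r.cost_usd for r in rs) / len(rs),
        }
    return report


def alerts(report: dict, min_completion: float = 0.9, max_p95_s: float = 30.0) -> list:
    found = []
    for agent, m in report.items():
        if m["completion_rate"] < min_completion:
            found.append(f"{agent}: completion rate {m['completion_rate']:.0%} below {min_completion:.0%}")
        if m["p95_latency_s"] > max_p95_s:
            found.append(f"{agent}: p95 latency {m['p95_latency_s']:.1f}s above {max_p95_s:.1f}s")
    return found


runs = [Run("support", i < 8, float(i + 1), 0.02) for i in range(10)]
runs += [Run("reporting", True, 40.0, 0.15), Run("reporting", True, 50.0, 0.15)]
for line in alerts(summarize(runs)):
    print(line)
```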

Compliance and Model Risk Management

For financial services, healthcare, and other regulated industries, AI agents that make or support consequential decisions may be subject to model risk management (MRM) requirements. Implementing MRM-compatible governance from the start — rather than retrofitting it after a regulatory inquiry — is the operationally intelligent approach.

08 · Enterprise Governance: An Enterprise Governance Framework for AI Agents

The deployment of autonomous AI agents creates governance questions that most organizations have not yet formally answered. Who is accountable when an agent makes an error? Who approves the deployment of new agents? Who has the authority to shut down an agent that is behaving unexpectedly? The absence of clear answers to these questions is a material organizational risk.

Ownership and Accountability

Every production agent deployment should have a designated Business Owner — a senior business leader accountable for the agent’s purpose, scope, and performance — and a designated Technical Owner responsible for configuration, integration integrity, and operational health. Shared ownership without clear accountability produces governance vacuums.

Agent Approval and Change Control

New agent deployments and material changes should follow a defined approval process involving: technology review (integration architecture, security, performance), compliance review (regulatory implications, data handling), risk review (potential failure modes, impact assessment), and business owner sign-off.

Monitoring and Ongoing Oversight

A designated AI Operations function should own ongoing monitoring of deployed agents: reviewing audit logs for anomalies, tracking performance metrics against defined thresholds, managing the escalation process when agents produce unexpected behavior, and maintaining the agent inventory and capability registry.

Responsible AI Framework

The governance model should be grounded in a formal Responsible AI framework addressing: fairness and bias monitoring, transparency (stakeholders should understand what agents are doing and why), accountability (clear chains of responsibility), and safety (priority protocols for scenarios where safety considerations override operational objectives).

Legal, Compliance, and Data Policy Involvement

Legal and compliance teams should be involved from the design stage. Key areas requiring their input include: data residency and cross-border transfer compliance, contractual implications of AI-generated communications and documents, IP considerations for AI-generated work product, and liability frameworks for agent errors.

Model Update and Deprecation Governance

Governance should define: testing requirements before model version updates are deployed to production agents, rollback procedures if a model update produces performance regression, and communication protocols to business owners when model updates are pending.

09 · Adoption Barriers: Entry Barriers — An Honest Assessment

Adoption enthusiasm without adoption realism produces failed implementations. Enterprise leaders deserve an honest analysis of what makes deploying agent platforms like NemoClaw genuinely difficult, and which organizations face the highest structural barriers.

| Industry / Category | Barrier Level | Primary Drivers |
|---|---|---|
| Financial Services (Banking, Insurance) | HIGH | Model risk management requirements, data sovereignty, regulatory scrutiny (OCC, FINRA, SR 11-7), legacy core systems integration |
| Healthcare & Life Sciences | HIGH | HIPAA data governance, FDA software validation requirements, EHR integration complexity, liability sensitivity in clinical contexts |
| Defense & Government | HIGH | FedRAMP, ITAR, classification boundary requirements, procurement cycles, multi-year infrastructure transitions |
| Manufacturing & Industrial | MEDIUM | OT/IT integration complexity, data quality in operational systems, workforce change management, safety-critical process risk aversion |
| Professional Services | MEDIUM | Client data confidentiality, AI ethics concerns in advisory contexts, partner/professional resistance, IP boundary questions |
| Retail & E-commerce | MEDIUM | Real-time data integration requirements, customer data privacy (CCPA/GDPR), thin margin tolerance for error |
| Media & Entertainment | LOW | IP licensing complexity, but generally cloud-native, data-rich, and innovation-tolerant with high digital content volume |
| Technology & SaaS Companies | LOW | Technically sophisticated, cloud-native, AI-familiar workforces. Governance and integration complexity still exist but are manageable |
| PE/VC-backed ISVs & Growth Companies | LOW | High motivation to achieve operational leverage, modern tech stacks, investor pressure to demonstrate AI ROI. Data maturity is the primary variable |

The barriers are real but they are not uniformly prohibitive. Organizations in high-barrier industries are not being advised to delay — they are being advised to invest appropriately in the governance and infrastructure foundations that make production deployment sustainable.

10 · Path to Adoption: How to Cross the Barriers

The most common mistake enterprises make when confronting AI adoption barriers is treating them as binary: either we are ready to deploy agents or we are not. Agent adoption is a journey with a well-defined progression, and the first steps are achievable for nearly every enterprise that has made a genuine commitment to the program.

Start with Internal Copilots Before Autonomous Agents

The path to autonomous agents runs through copilot deployments that build organizational familiarity, surface integration challenges, and develop governance reflexes without the operational risk of full autonomy. Internal knowledge assistants — agents that answer employee questions using the company’s document repositories — are a natural starting point.

Begin with Read-Only Agents

The governance and risk profile of an agent that can only read data from enterprise systems is dramatically lower than an agent that can read and write. Starting with read-only agents — research agents, analytics agents, document intelligence agents — allows organizations to develop operational confidence and governance processes before introducing the additional complexity of agentic action-taking.

Non-Critical Workflows First

Early agent deployments should target workflows where errors are recoverable, financial impact is bounded, and customer visibility is limited. Internal report generation, meeting summarization, knowledge base maintenance, and first-draft documentation are appropriate entry points.

Build an AI Center of Excellence Early

The organizations that deploy AI most successfully build a dedicated AI Center of Excellence (CoE) before they need it. The CoE owns: the enterprise AI platform architecture, the governance framework, vendor relationship management, enablement and training programs, and the performance measurement framework.

Hybrid Deployment — Cloud Flexibility, On-Prem Control

For enterprises with mixed data sensitivity profiles, a hybrid deployment model allows cloud-based agents for low-sensitivity workflows alongside private deployment for sensitive contexts. This provides cost efficiency at scale while maintaining the data control posture required for regulated information.

Partner Ecosystem Leverage

No enterprise should build enterprise agent infrastructure from scratch. The partner ecosystem — NVIDIA for the AI infrastructure and model stack, hyperscalers (AWS, Azure, GCP) for cloud components, and system integrators with enterprise integration expertise — provides the implementation capability and operational knowledge that accelerates deployment and reduces risk. NStarX’s Service-as-Software delivery model is specifically designed to bridge this gap.

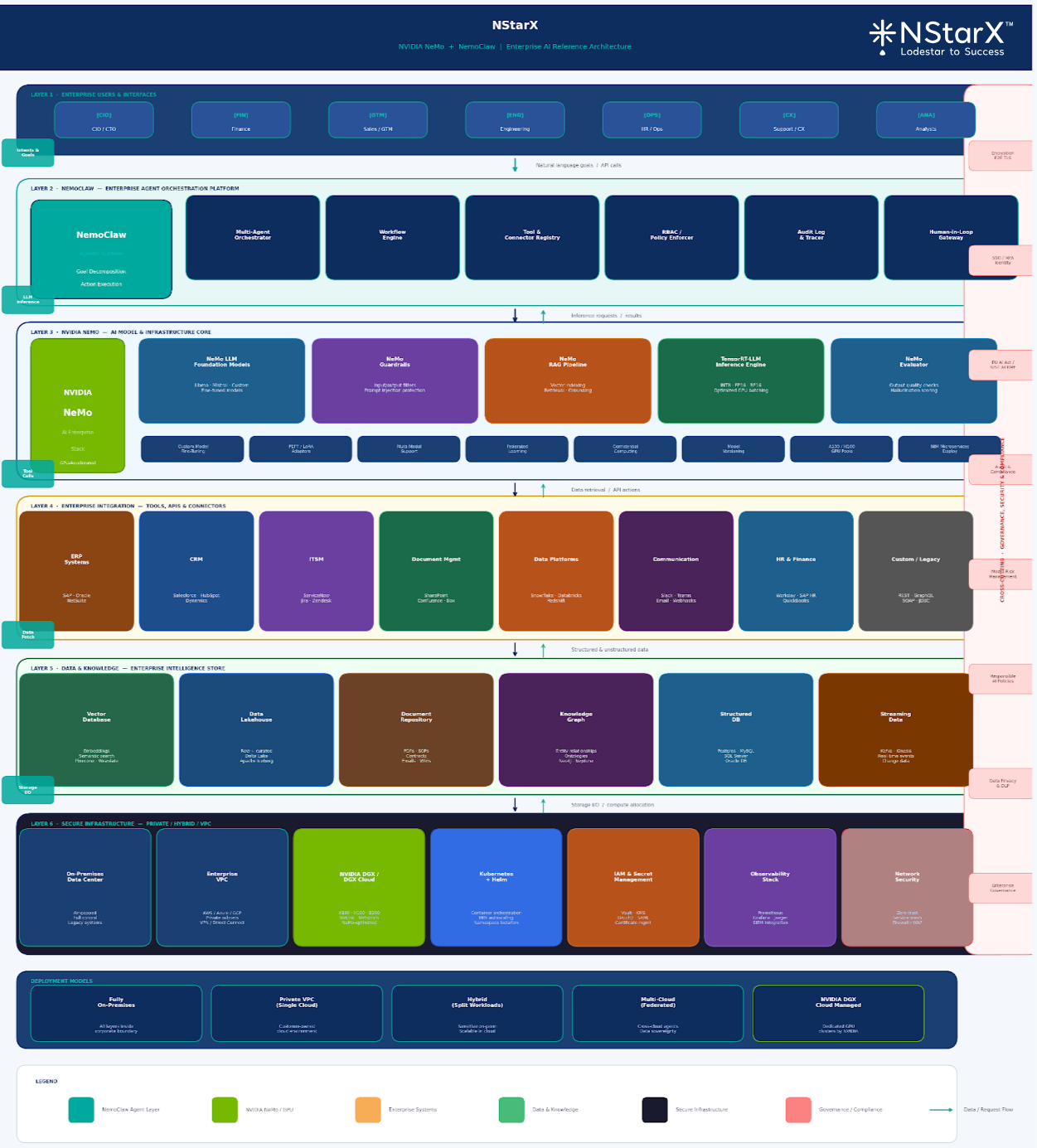

11 · Technology Architecture: NVIDIA NeMo + NemoClaw — The Architecture That Changes the Equation

For enterprise technology leaders who need to make build-vs-buy decisions about AI infrastructure, the combination of NVIDIA’s NeMo ecosystem and NemoClaw’s enterprise agent layer represents a compelling architectural case. Figure 1 shows NStarX’s definition of the NVIDIA NeMo + NemoClaw ecosystem.

NVIDIA NeMo: Enterprise AI Infrastructure

NVIDIA NeMo is not a model — it is an end-to-end enterprise AI development and deployment framework. The NeMo LLM stack provides the model training, fine-tuning, and inference infrastructure required to operate large language models at enterprise scale. NeMo is designed for deployment on enterprise-grade NVIDIA GPU infrastructure, providing the performance characteristics that production agentic workloads require.

NeMo Guardrails: Programmable Safety

NVIDIA NeMo Guardrails provides a programmable policy layer that controls LLM behavior at inference time — preventing off-topic responses, enforcing factual grounding requirements, implementing topic restrictions, and providing prompt injection protection. Rather than relying on model training to produce safe behavior, Guardrails enforces behavioral constraints programmatically — an approach that is both more reliable and more auditable for enterprise governance purposes.

NeMo RAG Pipeline: Grounded Enterprise Knowledge

The NeMo retrieval-augmented generation pipeline connects LLM reasoning to enterprise knowledge stores — document repositories, databases, vector indexes — in a way that is both architecturally sound and operationally manageable. Enterprise knowledge is indexed, access-controlled, and surfaced to agents at query time, with source attribution that makes agent outputs auditable and traceable.

NemoClaw: The Agent Orchestration Layer

Sitting above the NeMo infrastructure stack, NemoClaw provides the enterprise-grade agent orchestration layer: the multi-agent coordination framework, enterprise system integration connectors, the workflow execution engine, RBAC enforcement, and audit logging infrastructure. NemoClaw translates the raw capability of NeMo LLMs into configured enterprise agents that operate within defined business workflows with defined permissions and governance controls.
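A stripped-down illustration of what a workflow execution engine with audit logging does is shown below. It is a hypothetical sketch, not NemoClaw's API: `Orchestrator`, the step names, and the lambda "agents" are placeholders for LLM-backed agents and real enterprise connectors.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# Hypothetical: an "agent" is a named callable that reads and extends the task state.
Agent = Callable[[dict], dict]


@dataclass
class Orchestrator:
    agents: dict = field(default_factory=dict)     # step name -> agent
    audit_log: list = field(default_factory=list)  # append-only record of every step

    def register(self, name: str, agent: Agent) -> None:
        self.agents[name] = agent

    def run(self, workflow: list, state: dict) -> dict:
        """Run workflow steps in order, passing each agent the accumulated state."""
        for step in workflow:
            agent = self.agents.get(step)
            if agent is None:
                self._audit(step, "failed", "no agent registered")
                raise KeyError(f"no agent registered for step {step!r}")
            # Shallow copy so an agent cannot modify the previous state in place.
            state = agent(dict(state))
            self._audit(step, "completed", sorted(state))
        return state

    def _audit(self, step: str, status: str, detail) -> None:
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "step": step,
            "status": status,
            "detail": detail,
        })


orch = Orchestrator()
orch.register("extract", lambda s: {**s, "amount": 1200})
orch.register("validate", lambda s: {**s, "valid": s["amount"] > 0})
orch.register("route", lambda s: {**s, "queue": "manager" if s["amount"] > 1000 else "auto"})

result = orch.run(["extract", "validate", "route"], {"invoice_id": "INV-1"})
print(result["queue"])                              # → manager
print([entry["step"] for entry in orch.audit_log])  # → ['extract', 'validate', 'route']
```

Every step, including a failed one, leaves an audit entry, so the log records what the workflow attempted as well as what it completed.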

GPU Acceleration and Inference at Scale

Enterprise agentic workflows — particularly multi-agent workflows involving multiple LLM calls per task — are computationally intensive. NVIDIA’s GPU acceleration and inference optimization (TensorRT-LLM) provides the throughput and latency characteristics required for production agentic workflows at enterprise scale. This is particularly relevant for time-sensitive operational workflows where agent response time affects business outcomes.
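Why inference latency matters becomes clearer with a back-of-the-envelope calculation. In the hypothetical task below, a planner call is followed by three specialist calls that run in parallel and then a final synthesis call; the latencies are illustrative, not measured. Sequential stages add up, so per-call inference speed compounds across the whole chain.

```python
def critical_path_ms(stages: list) -> float:
    """Stages run one after another; calls inside a stage run in parallel,
    so each stage costs as much as its slowest call."""
    return sum(max(stage) for stage in stages if stage)


def call_count(stages: list) -> int:
    return sum(len(stage) for stage in stages)


# Hypothetical per-call latencies (ms): planner, three parallel specialists, synthesizer.
task = [[800], [1200, 900, 1500], [700]]
print(call_count(task), critical_path_ms(task))  # → 5 3000

# Halving per-call latency halves end-to-end time for the whole chain.
faster = [[ms / 2 for ms in stage] for stage in task]
print(critical_path_ms(faster))  # → 1500.0
```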

From an industry analyst perspective, the NeMo + NemoClaw stack represents the closest thing currently available to a coherent, production-grade enterprise AI platform architecture — addressing the infrastructure layer (NeMo), the safety and policy layer (Guardrails), the knowledge retrieval layer (RAG), and the agent orchestration layer (NemoClaw) in a way that is architecturally integrated rather than assembled from incompatible vendor components.

12 · Best Practices for Enterprise Agent Adoption

Technology

- Design for private deployment from the start — retrofitting security architecture is always more expensive than building it in.

- Build a unified data layer before deploying agents — agents that can’t access clean, governed data will produce unreliable outputs.

- Implement RAG architecture for enterprise knowledge retrieval rather than attempting to fine-tune proprietary data into model weights.

- Use NVIDIA NeMo Guardrails as a mandatory component, not an optional enhancement.

- Design the integration layer for enterprise systems before agent development begins.

- Build for multi-agent orchestration from the start, even if initial deployments are single-agent — the architecture should support the roadmap.

Security

- Apply RBAC at the agent action level — agents should not be able to perform actions their authorized users couldn’t perform.

- Implement comprehensive audit logging covering all agent actions, data retrievals, and outputs from day one.

- Conduct red-team exercises specifically targeting prompt injection vulnerabilities in production agents.

- Establish data classification policies and ensure agents respect classification boundaries.
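The RBAC and classification rules above can be expressed as a small authorization check. The sketch assumes, hypothetically, that permissions are plain action strings and that data carries one of four classification levels; a real deployment would pull both from the enterprise identity provider and data catalog. The agent's effective permissions are the intersection of its own grants and those of the user it acts for, so a broadly configured agent is always capped by the invoking user.

```python
from enum import IntEnum


class Classification(IntEnum):
    # Hypothetical four-level scheme; ordering lets levels be compared with <=.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


def effective_permissions(agent_perms: set, user_perms: set) -> set:
    """An agent acting for a user may only do what both are allowed to do."""
    return agent_perms & user_perms


def authorize(action: str, data_level: Classification, agent_perms: set,
              user_perms: set, user_clearance: Classification) -> bool:
    # Action-level RBAC: the action must be granted to the agent AND the user.
    if action not in effective_permissions(agent_perms, user_perms):
        return False
    # Classification boundary: the agent never handles data above the user's clearance.
    return data_level <= user_clearance


agent = {"read_invoice", "approve_invoice", "issue_refund"}
clerk = {"read_invoice", "approve_invoice"}

print(authorize("issue_refund", Classification.INTERNAL, agent, clerk, Classification.CONFIDENTIAL))     # → False
print(authorize("approve_invoice", Classification.INTERNAL, agent, clerk, Classification.CONFIDENTIAL))  # → True
print(authorize("read_invoice", Classification.RESTRICTED, agent, clerk, Classification.CONFIDENTIAL))   # → False
```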

Governance

- Establish the governance framework before the first production deployment — ownership model, approval process, monitoring responsibilities.

- Maintain an agent inventory and capability registry that is kept current as the agent portfolio grows.

- Include legal and compliance in governance design from the start, particularly for regulated industries.

- Align the governance model with the EU AI Act’s risk-tiering framework (unacceptable, high, limited, and minimal risk), which reflects current best-practice governance thinking.
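An agent inventory becomes far more useful when it is machine-checkable. The sketch below assumes a hypothetical `AgentRecord` schema tagged with the EU AI Act's four risk tiers; the review rules in `registry_findings` (human oversight for high-risk agents, a 90-day review cycle, a named owner) are illustrative internal policy, not a statement of the Act's legal requirements.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class RiskTier(Enum):
    # The four tiers of the EU AI Act's risk-based approach.
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AgentRecord:
    name: str
    owner: str            # accountable business owner
    capabilities: tuple   # actions the agent is configured to perform
    risk_tier: RiskTier
    human_in_loop: bool
    last_reviewed: date


def registry_findings(registry: list, today: date, max_age_days: int = 90) -> list:
    """Return (agent name, issue) pairs that governance should act on."""
    findings = []
    for rec in registry:
        if rec.risk_tier is RiskTier.UNACCEPTABLE:
            findings.append((rec.name, "prohibited use case: decommission"))
        if rec.risk_tier is RiskTier.HIGH and not rec.human_in_loop:
            findings.append((rec.name, "high-risk agent without human oversight"))
        if (today - rec.last_reviewed).days > max_age_days:
            findings.append((rec.name, "review overdue"))
        if not rec.owner:
            findings.append((rec.name, "no accountable owner"))
    return findings


registry = [
    AgentRecord("invoice-triage", "AP Operations", ("read_invoice", "route_invoice"),
                RiskTier.MINIMAL, False, date(2026, 1, 10)),
    AgentRecord("credit-screening", "Lending", ("score_applicant",),
                RiskTier.HIGH, False, date(2025, 9, 1)),
]

for finding in registry_findings(registry, today=date(2026, 2, 1)):
    print(finding)
```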

Change Management and User Adoption

- Co-design agent use cases with the business users who will work alongside them — adoption follows ownership.

- Communicate the purpose and limitations of agents to affected teams before deployment, not after the first error.

- Create internal AI champions in each business unit who understand the platform and can support adoption within their teams.

- Frame AI agents as capability amplifiers for existing teams, not headcount reduction mechanisms — the framing determines the adoption culture.

Pilot to Production Strategy

- Define production success criteria before the pilot begins — not when the pilot concludes and budget decisions are pending.

- Include integration testing, security review, and governance sign-off as formal pilot exit criteria.

- Budget for the infrastructure and operational overhead of production agent management from program inception.

- Measure ROI at the workflow level — time saved, error rate reduction, throughput increase — not at the technology investment level.
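Workflow-level ROI and pre-agreed success criteria fit naturally together. In this sketch the baseline and pilot figures, the `WorkflowMetrics` fields, and the thresholds in `SUCCESS_CRITERIA` are all hypothetical; the point is that the thresholds are fixed before the pilot begins and the pilot is judged against them, not against the technology spend.

```python
from dataclasses import dataclass


@dataclass
class WorkflowMetrics:
    cases: int          # cases completed during the measurement period
    human_hours: float  # total human hours spent on those cases
    errors: int         # cases that needed rework
    days: int           # length of the measurement period


def workflow_roi(before: WorkflowMetrics, after: WorkflowMetrics) -> dict:
    """Compare a baseline period with an agent-assisted period for one workflow."""
    err_before = before.errors / before.cases
    err_after = after.errors / after.cases
    tput_before = before.cases / before.days
    tput_after = after.cases / after.days
    return {
        "hours_saved_per_case": before.human_hours / before.cases - after.human_hours / after.cases,
        # Guard against a zero-error baseline, where a percentage reduction is undefined.
        "error_rate_reduction_pct": 100 * (err_before - err_after) / err_before if err_before else 0.0,
        "throughput_increase_pct": 100 * (tput_after - tput_before) / tput_before,
    }


# Thresholds agreed before the pilot begins (hypothetical values).
SUCCESS_CRITERIA = {
    "hours_saved_per_case": 1.0,
    "error_rate_reduction_pct": 25.0,
    "throughput_increase_pct": 20.0,
}


def meets_criteria(roi: dict, criteria: dict) -> bool:
    return all(roi[metric] >= threshold for metric, threshold in criteria.items())


baseline = WorkflowMetrics(cases=400, human_hours=800, errors=40, days=20)
pilot = WorkflowMetrics(cases=600, human_hours=300, errors=18, days=20)
roi = workflow_roi(baseline, pilot)
print(roi)
print(meets_criteria(roi, SUCCESS_CRITERIA))  # → True
```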

13 · Future Vision: What the Autonomous Enterprise Looks Like

The Five-Year Horizon

Within five years, the enterprises that have successfully deployed agent platforms will operate with a fundamentally different cost and capability structure than those that have not. The differential will not be a matter of degree — it will be structural. Agentic enterprises will execute operational work that currently requires hundreds of FTEs with a fraction of that headcount, redirecting human capital to genuinely differentiating activities.

The Ten-Year Horizon

Within ten years, the concept of a knowledge worker spending significant time on procedural, repeatable digital tasks will seem as anachronistic as manual bookkeeping seems today. Enterprise software will not be navigated — it will be instructed. Workflows will not be managed — they will be supervised. The enterprise operating model will be built around humans managing outcomes and agents managing operations.

The Digital Workforce as Organizational Infrastructure

The most significant structural shift will be the emergence of the digital workforce as a recognized organizational layer — not a technology initiative, but a component of the operating model with its own management structure, governance processes, and performance metrics. Enterprise org charts will include digital workforce capacity alongside human headcount in operational planning.

Autonomous Business Processes

Many current business processes that involve humans primarily as information conduits — approving standard requests, routing documented exceptions, generating periodic reports — will be fully automated. Human involvement will be reserved for processes where judgment, relationship, or accountability requirements exceed what autonomous systems can satisfy.

Multi-Agent Enterprises

Complex enterprise operations will be executed by coordinated multi-agent systems: specialized agents handling defined workflow segments, communicating through structured interfaces, and supervised by human operators who define objectives and review outcomes. The enterprise of 2035 will have more agents in operation than human employees, not because humans are displaced but because agent capacity can be scaled elastically in ways that human capacity cannot.

Humans Managing Agents

The emerging job category of AI agent manager — professionals who configure, monitor, govern, and optimize AI agent deployments — will be a significant professional category within a decade. The skills required are a hybrid of business process knowledge, technical understanding of AI systems, governance and risk management, and systems thinking.

The enterprises that build genuine human-AI collaboration models — where humans focus on judgment, creativity, and relationship while agents handle operational volume — will build operating models that are simply not replicable by organizations that do not make this transition. — NStarX Enterprise AI Strategy Framework, 2026

This is not a prediction about technology. It is a prediction about competitive structure. The window for building the foundational infrastructure — the data layer, the governance model, the integration architecture, the organizational capability — is open now. It will not remain open indefinitely.

14 · References

Reference Categories

The following categories of sources should be cited in the final version of this report. Source-specific citations should be validated against current editions prior to publication.

- McKinsey Global Institute — Annual reports on generative AI economic impact, enterprise AI adoption rates, and productivity multipliers for knowledge workers

- Gartner — Hype Cycle for AI, Magic Quadrant for AI Platforms, research on AI governance maturity frameworks

- Deloitte Insights — State of AI in the Enterprise survey; AI governance and risk management frameworks for regulated industries

- NVIDIA — NeMo Guardrails documentation; NeMo LLM framework technical documentation; NVIDIA AI Enterprise deployment guides; GTC keynote materials

- IDC — Enterprise AI spending forecasts; AI platform market sizing; agentic AI adoption benchmarks

- Stanford HAI — AI Index Report (annual); research on LLM capability benchmarks and enterprise deployment

- EU AI Act — Official text and implementing regulations; European AI Office guidance on high-risk AI systems

- NIST — AI Risk Management Framework (AI RMF 1.0, published as NIST AI 100-1), including its characteristics of trustworthy AI

- US Executive Order on AI (October 2023) and subsequent agency guidance — Federal AI governance requirements

- Lewis et al. (2020) — “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks” (foundational RAG architecture paper)

- Yao et al. (2022) — “ReAct: Synergizing Reasoning and Acting in Language Models” (agentic AI reasoning framework)

- Federal Reserve SR 11-7 — Guidance on Model Risk Management (applicable to financial services AI deployments)

- HIPAA Technical Safeguards documentation — Data privacy requirements for healthcare AI deployments

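- McMahan et al. (2017) — “Communication-Efficient Learning of Deep Networks from Decentralized Data” (foundational federated learning paper) and subsequent applied research on federated learning in regulated industries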

- Intel / AMD / NVIDIA confidential computing documentation — Trusted Execution Environment (TEE) architectures

- Anthropic, OpenAI, Google DeepMind — Responsible AI and AI safety policy frameworks

- Forrester Research — Enterprise AI platform adoption; total economic impact studies for AI agent deployments

About NStarX

NStarX is an AI-native product and platform engineering services company operating under a Service-as-Software delivery model. We partner with PE/VC-backed ISVs and Russell 2000 enterprises to move AI from pilot to production — providing the domain expertise, integration capability, and ongoing operational management that enterprise AI initiatives require. Learn more at nstarx.com.