An NStarX Enterprise Perspective · 2026

Authors: Adrian Paleacu and Sujay Kumar

The moat is no longer what you know. It is what you can prove works in the real world.

1. The Ground Is Shifting — and Most Organizations Are Still Standing Still

Let us be blunt about something that tends to get buried under the optimistic language of analyst reports and vendor keynotes: most software organizations are not changing fast enough. They are adopting AI tools, yes. They are running pilots. They are putting “AI-native” somewhere in their strategy decks. But the underlying machinery of how they actually build and ship software — the teams, the handoffs, the approval chains, the specialist silos — remains almost entirely intact.

That is the quiet crisis that nobody wants to acknowledge, because acknowledging it means confronting some uncomfortable organizational truths.

We are living through the most disruptive period in software engineering since the internet changed everything in the 1990s. Not because AI writes better code than humans — sometimes it does, often it does not. But because AI is fundamentally changing the economics and logistics of who can build software, how quickly loops close between idea and deployment, and what kinds of human skills actually generate value in a delivery chain. That is a structural shift, not a tooling upgrade.

The concept we keep coming back to is what we at NStarX call the Coordination Tax. Every time a piece of work crosses a boundary between people — from a business analyst to an architect, from a developer to a QA engineer, from a product manager to a DevOps team — something invisible happens. Context degrades. Misunderstandings accumulate. Delays compound. None of this shows up on a project plan. It just silently erodes delivery speed and product quality. And for decades, we have accepted it as the natural cost of building complex software.

AI is not just automating tasks. It is collapsing the rationale for that fragmentation. When a single capable person, properly equipped with the right AI toolchain, can genuinely own a feature from customer conversation to deployed, monitored production code, the old model starts to look less like a system and more like a legacy habit.

This is not a distant future scenario. It is already happening, selectively, in pockets of enterprises and widely among startups. The question for every CTO and CIO reading this is: are you building the organizational and technical infrastructure to thrive in this new model, or are you layering new tools on top of old structures and wondering why the ROI is disappointing?

This piece works through the full picture: where the market actually is (not where the hype says it is), where the real gaps lie, what skills are becoming scarce versus obsolete, how the software development lifecycle itself is being redesigned, and what concrete moves technology leaders should be making right now before the window closes.

2. What This Actually Looks Like in Practice — Beyond the Press Releases

Reading about AI in software development and seeing it in practice are two entirely different experiences. The press releases tend to feature dramatic before-and-after stories with clean metrics. The reality we encounter with enterprises is messier, more interesting, and more instructive than that. Let us share what a few notable examples actually reveal.

Goldman Sachs: Making AI Work With What the Bank Already Knows

Goldman Sachs did something most banks have not been willing to do: they took their internal development platform and rebuilt it around AI in a serious way. Not by adding a general-purpose AI assistant on top of existing tools, but by fine-tuning models on the bank’s own codebase, internal documentation, and project history.

The insight here is underappreciated. The reason generic AI coding assistants underperform for large enterprises is not that the models are weak — it is that they have no knowledge of your system. They do not know your naming conventions, your architectural constraints, your security policies, or the reason that particular function was built the way it was five years ago. Goldman addressed this by giving the model institutional memory. That is a fundamentally different proposition than deploying GitHub Copilot off the shelf.

Amazon: Measuring the Right Things

Amazon built a metric called Cost to Serve Software, which attempts to capture the total organizational cost of delivering and maintaining a unit of software capability. It is a more honest measure than lines of code or story points because it includes the overhead, not just the output.

What makes this story worth telling is not the metric itself but what Amazon found when they actually optimized against it. AI tools alone did not move the number much. What moved it was AI tools combined with systematic process re-engineering — reexamining every step of delivery for places where the old human-to-human handoff could be shortened or eliminated. The year-over-year cost reduction they achieved did not come from telling developers to use Copilot. It came from rebuilding how work flowed through the organization.

Most organizations are only doing the first part and then wondering why the second does not follow.

The Rise of the Agentic IDE and What Cursor Tells Us

Cursor, an AI-powered development environment that understands your entire codebase rather than just the file you have open, went from a niche product to capturing a dominant share of the AI-assisted developer market in roughly two years. That growth rate is not primarily a story about marketing. It is a story about a genuine capability gap that existing tools were not filling.

Developers were not frustrated that AI could not autocomplete a line of code. They were frustrated that AI had no idea what their codebase was actually trying to do. Cursor addressed that. The lesson for enterprises is that the tooling market is moving fast, and the tools that win are not the ones with the best AI model — they are the ones that best preserve context across the full development workflow. Context is the scarce resource.

Devin and the Autonomous Agent Question

Cognition’s Devin attracted enormous attention when it launched in 2024 because it represented something genuinely new: an AI that could be given a description of a software task in plain language and would attempt to complete it end-to-end, including writing, testing, debugging, and deploying code. The benchmark improvements since then have been remarkable — the ability of leading models to solve real open-source engineering problems has more than doubled in eighteen months.

We want to be careful here, though. The gap between “solving a well-scoped benchmark task” and “maintaining the architectural integrity of a production system over three years” is vast. Devin and its successors are impressive proof points that autonomous software development is not a fantasy. They are not yet a substitute for experienced engineering judgment in complex, ambiguous, production environments. The organizations learning to use these tools as amplifiers rather than replacements are going to win. The ones waiting for the tools to become complete replacements will be waiting a long time.

AI-Native from Day Zero: The Startup Baseline Has Changed

Perhaps the most structurally significant shift is happening not in large enterprises but in startups that have never known any other way to build software. Companies founded in 2024 and 2025 are designing their entire engineering workflow around AI from the beginning. They are not retrofitting AI onto a Jira board and a two-week sprint cycle. AI is the delivery system, and humans provide direction, judgment, and quality assurance.

These companies are setting a new competitive baseline. They ship with smaller teams, faster feedback loops, and lower unit costs than their predecessors. Every enterprise that built its engineering organization the old way is now competing against that baseline.

The most dangerous competitive threat is not a large competitor with more resources. It is a small team that has eliminated the coordination tax entirely and can ship in hours what takes your organization weeks.

3. How People, Process, Technology, and Tooling Are All Changing at Once

What makes this moment genuinely difficult to manage is that the change is not happening in one dimension. It is not just a tooling upgrade. It is not just a skills issue. It is all four pillars of the software delivery system changing simultaneously, at different rates, and in ways that interact with each other.

People: The Role Redefinition Nobody Is Having Honestly

We talk to engineering leaders regularly who are navigating a real tension: they know that AI is changing what their developers need to do, but they are not sure how to have the conversation without it sounding like a threat. So the conversation often does not happen. Developers are given AI tools and told to “be more productive,” and the deeper question of what that productivity should be redirected toward goes unanswered.

Here is the honest version of that conversation: the tasks that defined a junior developer’s first two years — writing boilerplate, implementing straightforward features, debugging common errors, writing basic tests — are being automated faster than the profession has absorbed. A Stanford study tracking employment among early-career developers found a meaningful decline in that cohort between 2022 and 2025, coinciding precisely with the expansion of AI coding tools. That is not a coincidence.

At the same time, the demand for people who can do what AI cannot is intensifying sharply. Problem definition. Architectural reasoning under real constraints. The ability to look at AI-generated code and know, without running it, that it has encoded a business logic error three levels deep. Owning the edge cases that automated systems consistently miss. These are not skills you develop by reading documentation. They come from years of building things that failed in unexpected ways and learning from that failure.

The crisis underneath the surface is that we are eliminating the on-ramp for developing those deeper skills. When entry-level implementation work disappears, where do junior developers learn to build intuition? That is a pipeline problem the industry has not solved, and it is going to surface loudly in about five years.

Process: The Handoff Model Is the Problem, Not the Solution

The traditional software delivery process was designed to manage risk through structure: defined stages, gates, reviews, handoffs between specialists. This made sense when the cost of mistakes was high and the speed of iteration was low. AI is inverting both of those assumptions. Mistakes are cheaper to detect and fix when you have AI-assisted test generation and instant feedback loops. Iteration speed can be dramatically higher. But only if your process is designed to take advantage of that.

Most enterprise delivery processes are not. They are still built around the assumption that each stage takes weeks, that handoffs are necessary, and that the primary risk to manage is human error in implementation. The new primary risks are architectural drift, context loss across automated steps, and the slow accumulation of AI-generated technical debt that nobody is tracking.

The organizations that are genuinely benefiting from AI are not the ones that added AI to their existing process. They are the ones that asked: “if we could redesign this delivery system from scratch knowing what AI can do, what would it look like?” That is a harder, scarier question, and it is the one that actually needs to be answered.

Technology: Infrastructure Is Catching Up to the Vision

The hardware and platform layer is moving fast. The capability that previously required a dedicated data center is now available in desktop form factors. Cloud-native AI development platforms have matured significantly. Security scanning, license compliance checking, and vulnerability detection are increasingly embedded inline with code generation rather than bolted on at the end of the pipeline. This is genuinely good news because it means some of the production risk concerns around AI-generated code are being addressed at the toolchain level.

What is not catching up as fast is the observability and governance infrastructure needed to run AI-assisted systems reliably at enterprise scale. More on that shortly.

Tooling: The Market Has Saturated, Which Means the Easy Part Is Over

We have crossed the threshold where AI coding tools are essentially universal. Nine in ten engineering teams have them. The question of whether to adopt has been answered by the market. What has not been answered — and what most organizations are struggling with right now — is how to actually change the work to capture the value those tools make possible.

Buying a license is not a strategy. The organizations that have moved beyond the license and redesigned how work flows, who owns what, and how quality is validated are seeing real gains. The ones that handed out subscriptions and declared victory are mostly reporting flat or mixed results and quietly questioning their investment.

4. Cutting Through the Noise: Where the Hype Ends and Reality Begins

The AI in software development space has a hype problem. Not because the technology is fake — it is genuinely transformative — but because the stories that get told are systematically selected for drama and simplicity. The 10x developer. The startup built by two people and an AI agent. The enterprise that cut development costs in half overnight. These stories are real, but they are not representative, and treating them as a planning baseline is how enterprises end up with disappointed stakeholders and abandoned programs.

Let us offer a more honest accounting.

The Productivity Story Is Real, But Heavily Conditional

Studies consistently find that AI coding assistance produces meaningful productivity improvements — but the range is enormous, and the average is modest without structural change. Giving a developer an AI assistant and measuring whether they complete isolated coding tasks faster? Yes, that shows improvement. Measuring whether your organization ships better software more reliably with demonstrably better business outcomes? That is a much more complicated story, and the data is genuinely mixed.

The companies that report dramatic gains — sometimes two or three times the baseline — share a common characteristic: they did not just adopt tools. They redesigned the work. They made deliberate choices about what AI would own, what humans would own, where the handoffs were, and how quality would be verified. That is a different and harder investment than buying software.

The Trust Gap Is the Hidden Risk That Nobody Is Taking Seriously Enough

Here is a data point that should give every engineering leader pause: developer sentiment toward AI tools has actually declined over the past two years, even as adoption has become nearly universal. More than half of developers actively question the accuracy of what these tools produce. Only a tiny fraction report high confidence in AI-generated outputs.

Now layer that on top of the reality that AI-generated code is being shipped to production systems serving real customers at enormous scale. We have a situation where the people closest to the code do not fully trust it, yet organizational pressure — efficiency mandates, cost targets, competitive urgency — is pushing that code into production anyway.

This is not an abstract concern. It is a latent quality and security risk that is building in organizations that have not built the governance infrastructure to manage AI-generated outputs systematically. The reckoning will come through production incidents, security breaches, or compliance failures. The organizations that have built validation discipline now will be in a very different position than those that have not.

The Benchmark Illusion and What It Misses

AI model performance on software engineering benchmarks has improved at a pace that seems almost too fast to be real. Problems that stumped models eighteen months ago are now being solved reliably. This is being cited as evidence that autonomous software development is imminent.

The benchmarks, however, measure things that are only a subset of what software engineering actually involves. They measure the ability to solve well-scoped, clearly defined technical problems against known test cases. They do not measure the ability to navigate a system with fifteen years of accumulated decisions baked into it, to make architectural choices under competing constraints, to understand why a customer keeps experiencing a bug that only appears on the third Tuesday of the month under specific load conditions. Production software engineering is full of problems that resist clean specification, and those are precisely the problems where experienced human judgment remains irreplaceable.

The Fraction of the Job That AI Actually Speeds Up

Writing code is somewhere between a quarter and a third of what it actually takes to move a software idea from conception to reliable production deployment. The rest of that work — understanding what the customer actually needs, making the right architectural decisions, ensuring security and compliance, orchestrating deployment, monitoring and responding to what happens in production — has not been fundamentally accelerated by AI tools. Optimizing a third of the job while leaving the other two-thirds untouched is why so many AI productivity initiatives have underdelivered against expectations.
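The arithmetic behind that last point can be made concrete with an Amdahl's-law style estimate. The sketch below is illustrative only: the function name and the speedup factors are assumptions, the coding shares are the quarter-to-a-third range cited above, and all non-coding work is assumed to be unaffected.

```python
def overall_speedup(coding_fraction: float, coding_speedup: float) -> float:
    """Amdahl's-law estimate of end-to-end delivery speedup.

    coding_fraction: share of total delivery effort spent writing code (0..1).
    coding_speedup:  how many times faster coding becomes with AI (assumed).
    All work outside coding is assumed to take the same time as before.
    """
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    # Time remaining after the speedup, as a fraction of the original time.
    remaining = (1.0 - coding_fraction) + coding_fraction / coding_speedup
    return 1.0 / remaining


if __name__ == "__main__":
    # Hypothetical speedup factors applied to the coding share only.
    for fraction in (0.25, 1 / 3):
        for speedup in (2.0, 10.0):
            print(f"coding share {fraction:.2f}, coding {speedup:.0f}x faster "
                  f"-> delivery {overall_speedup(fraction, speedup):.2f}x faster")
```

Under these assumptions, doubling coding speed yields roughly a 1.2x end-to-end gain when coding is a third of the job, and no coding speedup, however large, can push the overall gain past 1.5x.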

5. The Maturity Gaps Nobody Wants to Talk About

We spend a lot of time working with enterprises on their AI transformation roadmaps, and the most productive conversations are always the ones where we get honest about where the current model is actually breaking down. Here are the gaps we see most consistently.

No One Is Governing AI-Generated Code as a Distinct Category

In most organizations, code is code. It goes through the same review process, the same compliance checks, the same deployment pipeline regardless of whether a human wrote it line by line or an AI generated it in thirty seconds from a natural language prompt. These are not the same thing and should not be treated as the same thing.

AI-generated code has different failure characteristics. It tends to be locally coherent and globally naive: it solves the stated problem in isolation without necessarily respecting the broader architectural constraints or business rules of the system it is being added to. Standard code review processes are not calibrated for this kind of error. Organizations need AI-specific quality gates, and most do not have them.
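One way to picture an AI-specific quality gate is as a set of extra checks that apply only when a change is flagged as AI-generated. The following is a minimal sketch under that assumption; the check names, the `Change` record, and the helper functions are all hypothetical and do not describe any particular tool or an NStarX implementation.

```python
from dataclasses import dataclass, field

# Hypothetical check names; a real pipeline would map these to its own tools.
BASELINE_CHECKS = {"unit_tests", "peer_review"}
AI_EXTRA_CHECKS = {
    "architecture_conformance",  # does the change respect system-level constraints?
    "business_rule_review",      # does it honor rules the prompt never mentioned?
    "security_scan",
}


@dataclass
class Change:
    change_id: str
    ai_generated: bool
    passed_checks: set[str] = field(default_factory=set)


def required_checks(change: Change) -> set[str]:
    """AI-generated changes must pass the baseline plus the AI-specific gates."""
    if change.ai_generated:
        return BASELINE_CHECKS | AI_EXTRA_CHECKS
    return set(BASELINE_CHECKS)


def missing_checks(change: Change) -> set[str]:
    """Return the checks still outstanding; an empty set means the change may merge."""
    return required_checks(change) - change.passed_checks


if __name__ == "__main__":
    change = Change("c-101", ai_generated=True,
                    passed_checks={"unit_tests", "peer_review"})
    print(sorted(missing_checks(change)))
```

The design choice worth noting is that provenance is recorded on the change itself, so the same pipeline can treat the two categories differently without maintaining two pipelines.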

Context Does Not Travel With the Work

One of the underappreciated costs of AI-assisted development is what we think of as the context re-establishment tax. Every time you start a new session with an AI coding assistant, you are starting from zero. The model does not remember the architectural decision you made last week, the security requirement that applies to this module, or the reason the original developer wrote that function the way they did. You have to rebuild that context every time, or you risk the AI generating technically correct but contextually wrong code.

At scale, across a large engineering organization, this context re-establishment work is not trivial. It is one of the hidden costs of AI-augmented development that does not show up in the productivity benchmarks but absolutely shows up in the quality of what gets shipped.

Testing Has Become the New Bottleneck

Here is a pattern we see regularly: AI tools dramatically increase the rate at which code is produced. The testing infrastructure does not scale with it. Code review queues grow. Test coverage becomes inconsistent. Technical debt accumulates faster than anyone realizes because the increase in code output is not matched by an increase in validation capacity.

The answer is not to slow down code generation. It is to build AI-native testing intelligence that scales with code generation velocity — automated test generation, continuous quality analysis, AI-assisted code review that understands architectural context. This is a significant investment, and it is one that most organizations have not yet made.
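The bottleneck dynamic is simple queueing arithmetic: whenever changes arrive faster than they can be validated, the backlog grows by the difference every week. A small sketch, with an assumed function name and purely hypothetical rates, makes the point:

```python
def validation_backlog(weeks: int, changes_per_week: float,
                       validated_per_week: float,
                       starting_backlog: float = 0.0) -> list[float]:
    """Backlog of unvalidated changes at the end of each week.

    Assumes constant production and validation rates, which is a
    simplification; real rates fluctuate.
    """
    backlog = starting_backlog
    history = []
    for _ in range(weeks):
        # The backlog cannot go below zero when validation outpaces production.
        backlog = max(0.0, backlog + changes_per_week - validated_per_week)
        history.append(backlog)
    return history


if __name__ == "__main__":
    # Hypothetical numbers: output rises from 100 to 150 changes per week
    # while validation capacity stays at 100.
    print(validation_backlog(4, changes_per_week=150, validated_per_week=100))
```

In this illustration the queue grows by 50 changes every week and never recovers until validation capacity rises to match output.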

Security Is Not Keeping Up With Speed

AI-assisted development can introduce security vulnerabilities at a rate that human security reviewers simply cannot absorb. The tools that generate code do not always understand the security context of the system being built, and developers under deadline pressure are not always catching the issues that the tools miss. The best AI coding tools are building security analysis into the code generation loop itself, but adoption of those capabilities is uneven, and organizational security review processes have not been redesigned to account for AI-generated code volumes.

The Organization Is Structured for a Problem That No Longer Exists

The deepest and most difficult gap is organizational. Most enterprise software delivery organizations are structured around the assumption that human specialists are the scarce resource and that coordinating them is the primary management challenge. AI changes that assumption. When a single person with AI leverage can do what previously took a team of five, the organizational structure built to coordinate those five people becomes overhead rather than infrastructure.

Changing organizational structure is slow, politically difficult, and personally disruptive. It is also, eventually, unavoidable. The organizations that begin redesigning now will have a meaningful head start on those that wait until the competitive pressure forces them to.

6. The Production Gap: Why “It Worked in the Demo” Is Not Enough

There is a specific kind of organizational disappointment that shows up about eighteen months into an AI adoption initiative. The pilots worked. The demos were impressive. The early productivity metrics were encouraging. Then the attempt to scale to production — to make this the way the entire engineering organization works, on real systems, under real load, with real consequences for failure — ran into problems that nobody had anticipated.

This is not a failure of AI technology. It is a failure to understand that production scale is a fundamentally different environment than a demo environment, and that the gap between them is where the hard work actually lives.

Complexity Does Not Scale Linearly

AI coding tools perform remarkably well on bounded problems with clear acceptance criteria. Add enough variables (a system with meaningful legacy constraints, distributed failure modes, business rules that evolved over a decade and exist only in institutional memory, compliance requirements that interact in non-obvious ways) and the quality of AI assistance degrades faster than you would expect from the benchmark performance numbers. The tools are improving, but enterprise systems are complex enough that benchmark performance still overstates production performance, a gap that practitioners experience constantly even if it is rarely discussed openly.

You Cannot Monitor AI-Assisted Systems the Way You Monitor Human-Written Systems

When a system behaves unexpectedly in production, you need to understand why. With human-written code, the mental model for debugging is well-established: you read the code, you understand the logic, you find the error. AI-generated code is often harder to reason about because it can be simultaneously syntactically clean and logically surprising. More importantly, when AI agents are operating with meaningful autonomy — making changes, running tests, deploying updates — the audit trail becomes critical. Most organizations have not built the observability infrastructure to understand, after the fact, what an autonomous system did and why.

The Cost of Running AI at Scale Is Not What the Pilot Suggested

A model that performs adequately in a developer’s local environment may generate very different cost and latency profiles when invoked hundreds of thousands of times per day across an enterprise deployment. Inference costs at scale are material, and they interact with cloud architecture choices in ways that require dedicated financial operations discipline. The organizations that treat AI cost management as an afterthought will face unpleasant surprises when they attempt to scale.
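A back-of-the-envelope estimate shows why pilot costs do not extrapolate intuitively. The sketch below assumes a simple per-token pricing model; the function name, call volumes, token counts, and prices are all hypothetical placeholders rather than any vendor's actual rates.

```python
def monthly_inference_cost(calls_per_day: int,
                           input_tokens_per_call: int,
                           output_tokens_per_call: int,
                           usd_per_million_input_tokens: float,
                           usd_per_million_output_tokens: float,
                           days_per_month: int = 30) -> float:
    """Estimate monthly spend under per-token pricing (an assumed cost model)."""
    daily_input_millions = calls_per_day * input_tokens_per_call / 1_000_000
    daily_output_millions = calls_per_day * output_tokens_per_call / 1_000_000
    daily_cost = (daily_input_millions * usd_per_million_input_tokens
                  + daily_output_millions * usd_per_million_output_tokens)
    return daily_cost * days_per_month


if __name__ == "__main__":
    # Hypothetical prices and volumes, chosen only to show the scaling effect.
    prices = dict(usd_per_million_input_tokens=1.0,
                  usd_per_million_output_tokens=4.0)
    pilot = monthly_inference_cost(500, 2_000, 500, **prices)
    scaled = monthly_inference_cost(200_000, 2_000, 500, **prices)
    print(f"pilot: ${pilot:,.0f}/month, enterprise: ${scaled:,.0f}/month")
```

With these placeholder figures the same workload moves from tens of dollars a month in a pilot to tens of thousands at enterprise volume, before accounting for retries, longer contexts, or agentic loops that multiply calls.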

Accountability Without Visibility Is Not Accountability

The promise of autonomous AI development agents — systems that can build, test, deploy, and monitor software with minimal human intervention — is compelling and, in certain bounded contexts, increasingly achievable. But accountability structures have not kept pace. When an autonomous agent makes a bad decision at two in the morning that causes a production incident, who is responsible? How do you trace the decision back to its source? How do you ensure it does not happen again? These are not hypothetical questions. They are engineering governance questions that need answers before autonomous deployment becomes standard practice, not after the first major incident.
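Answering those questions starts with recording, for every autonomous action, what was done, why, what triggered it, and which human is answerable. The following is a minimal sketch of such an audit record; the field names and helper functions are assumptions made for illustration, and the in-memory list stands in for whatever append-only store an organization would actually use.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentActionRecord:
    agent_id: str
    action: str                 # e.g. "deploy", "rollback", "merge"
    target: str                 # the system or service acted upon
    rationale: str              # the agent's stated reason for acting
    triggered_by: str           # the alert, ticket, or prompt that started it
    accountable_owner: str      # the human or team answerable for this agent
    approved_by: Optional[str]  # None when the action was fully autonomous
    timestamp: str              # UTC, ISO 8601


def record_action(log: list[str], **fields) -> AgentActionRecord:
    """Append one JSON line per action so decisions can be traced afterwards."""
    record = AgentActionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(), **fields)
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


def unapproved_actions(log: list[str]) -> list[dict]:
    """Return every logged action that had no human approval."""
    return [entry for entry in map(json.loads, log)
            if entry["approved_by"] is None]


if __name__ == "__main__":
    audit_log: list[str] = []
    record_action(audit_log, agent_id="agent-7", action="deploy",
                  target="billing-service", rationale="tests passed on fix",
                  triggered_by="alert-123", accountable_owner="payments-team",
                  approved_by=None)
    print(unapproved_actions(audit_log))
```

Making `accountable_owner` a required field forces the "who is responsible" question to be answered when the agent is configured, not after the incident.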

7. The Skills That Are Becoming Worthless — and the Ones Worth Everything

We want to be direct about something that the industry often softens to the point of uselessness: some specific technical skills are becoming significantly less valuable, and some skills that were previously considered “soft” or “strategic” are becoming the hardest currency in engineering organizations. Pretending otherwise does not help developers navigate their careers or help organizations build the talent base they need.

What Is Losing Value, and Why

The ability to write syntactically correct code in a given language has been progressively commoditized since IDEs with intelligent autocomplete first appeared. AI has accelerated that commoditization to the point where knowing Ruby syntax or being fluent in TypeScript idioms provides minimal competitive advantage. Any decent AI assistant can do that now, on demand, in any language.

Routine implementation work — building CRUD endpoints, writing standard integrations, scaffolding boilerplate, implementing common design patterns — is being automated. This is not speculation. Employment data for early-career developers shows a meaningful contraction that aligns precisely with the widespread adoption of AI coding tools. The entry-level work that used to develop foundational skills in junior developers is disappearing, and the profession has not yet worked out what replaces it.

Narrow domain expertise, in isolation, is also losing ground. The value of knowing a specific framework deeply is eroding as AI models become capable of retrieving, synthesizing, and applying that knowledge on demand. Being the person who knows Kubernetes best, absent other differentiation, is a weaker position than it was three years ago.

What Is Gaining Value, Rapidly

- Systems judgment: The ability to reason about how components interact, where failure will propagate, and what trade-offs are worth making under real constraints. This is architectural intuition built through years of watching systems behave in unexpected ways. It cannot be retrieved from a model. It lives in the head of someone who has built things that broke.

- Validation as a craft: The ability to look at AI-generated output — code, test cases, architecture proposals, documentation — and detect the subtle wrongness that passes every automated check but will cause problems in production. This requires domain depth, adversarial thinking, and an almost allergic sensitivity to things that are technically correct but contextually wrong.

- AI orchestration fluency: The ability to direct AI agents effectively: to decompose complex problems into AI-executable steps, write prompts that produce consistently useful outputs, chain multi-step autonomous workflows, and recognize when the AI is solving the wrong problem. Job postings requiring these skills have grown at a remarkable rate over the past year and show no signs of slowing.

- Algorithmic and systems thinking: As AI handles more execution-layer work, the value of people who understand how to design efficient algorithms, architect scalable systems, and reason about computational complexity has actually increased. You need to know what you are asking the AI to build and whether what it built is actually optimal.

- LLMOps and AI infrastructure: Managing the operational lifecycle of AI systems in production — model versioning, inference cost optimization, output monitoring, drift detection, rollback procedures — is an emerging discipline with enormous demand and very limited supply of practitioners who have actually done it in a production environment.

The uncomfortable question this raises for talent strategy is about pipelines. If entry-level implementation work disappears, how do the engineers of 2030 develop the systems judgment that makes them invaluable? The profession has not answered this yet. The organizations that figure out how to create deliberate on-ramps for that kind of learning — structured exposure to complex systems, mentored judgment development, supervised AI-augmented work that builds intuition rather than bypassing it — are going to have better talent ten years from now than those that simply automated their way out of entry-level roles.

8. The SDLC Is Being Redesigned Whether You Participate or Not

The Software Development Lifecycle as a concept — the sequential, stage-gated model that most enterprises have organized themselves around for the better part of thirty years — is not being improved. It is being replaced. Not everywhere and not all at once, but the direction is unmistakable, and the organizations that are designing the replacement rather than waiting to receive it will be in a fundamentally better position.

From Stage Gates to Continuous Intelligence

The traditional model assumes that each stage of development requires different specialists and produces distinct artifacts that get handed to the next stage. Requirements generate a specification document. A specification generates a design. A design generates code. Code generates test results. Test results generate a deployment. Each stage is a gate.

The emerging model is better understood as a continuous intelligence loop, where AI agents, automated feedback mechanisms, and human judgment operate concurrently rather than sequentially. A stakeholder conversation is immediately translated into an explorable prototype. Code generation happens with inline quality analysis and security scanning. Testing is not a phase — it is a continuous property of the development environment. Deployment is monitored and partially managed by systems that can detect and respond to anomalies without waiting for a human to notice.

The critical insight from the NStarX Coordination Tax framework is that this is not just a speed story. It is a quality story. Every sequential handoff in the old model was an opportunity for the original intent to degrade slightly. Compress the loop, eliminate the handoffs, and the fidelity between what the customer needs and what gets shipped improves.

Requirements to Working Code: The Front End Is Collapsing

The distance between “a stakeholder articulates a need” and “a developer has a buildable specification” used to be measured in weeks and involved multiple roles. AI tools are compressing that distance dramatically for well-scoped problems. Natural language to functional prototype is increasingly a matter of hours.

This does not eliminate the need for human judgment at the front end — it makes that judgment more consequential. When the loop between intent and implementation is short, the quality of the original intent matters more. Getting requirements wrong and catching it three weeks later is expensive. Getting requirements wrong and catching it three hours later is much cheaper. But the prerequisite is that someone asks the right questions at the front end, and that skill — drawing out and clarifying what a customer actually needs versus what they think they want — remains deeply human.

Quality Is Becoming a Property of the System, Not a Phase

The QA phase — a dedicated period of testing between code completion and deployment — is already an anachronism in the most advanced engineering organizations. Quality assurance is migrating from a stage to a continuous property of the development environment itself: AI-generated test cases that update as code changes, automated analysis of code quality and security posture with every commit, intelligent monitoring that detects behavioral anomalies before they become customer-visible incidents.

This is better. It catches problems earlier, when they are cheaper to fix. But it requires an investment in testing intelligence infrastructure that most organizations have not yet made, and it requires a cultural shift away from treating testing as something that happens at the end rather than something that is woven into every step.

Operations and Development Are Converging

The boundary that DevOps was supposed to dissolve is actually dissolving now, accelerated by AI. Deployment is increasingly automated and assisted. Incident management is increasingly supported by AI systems that can surface relevant context, suggest likely causes, and draft remediation steps in response to production alerts. The engineer of 2028 will be expected to own the full lifecycle of their software in a way that the engineer of 2018 genuinely was not. That is a significant skill expansion, and it is not optional.

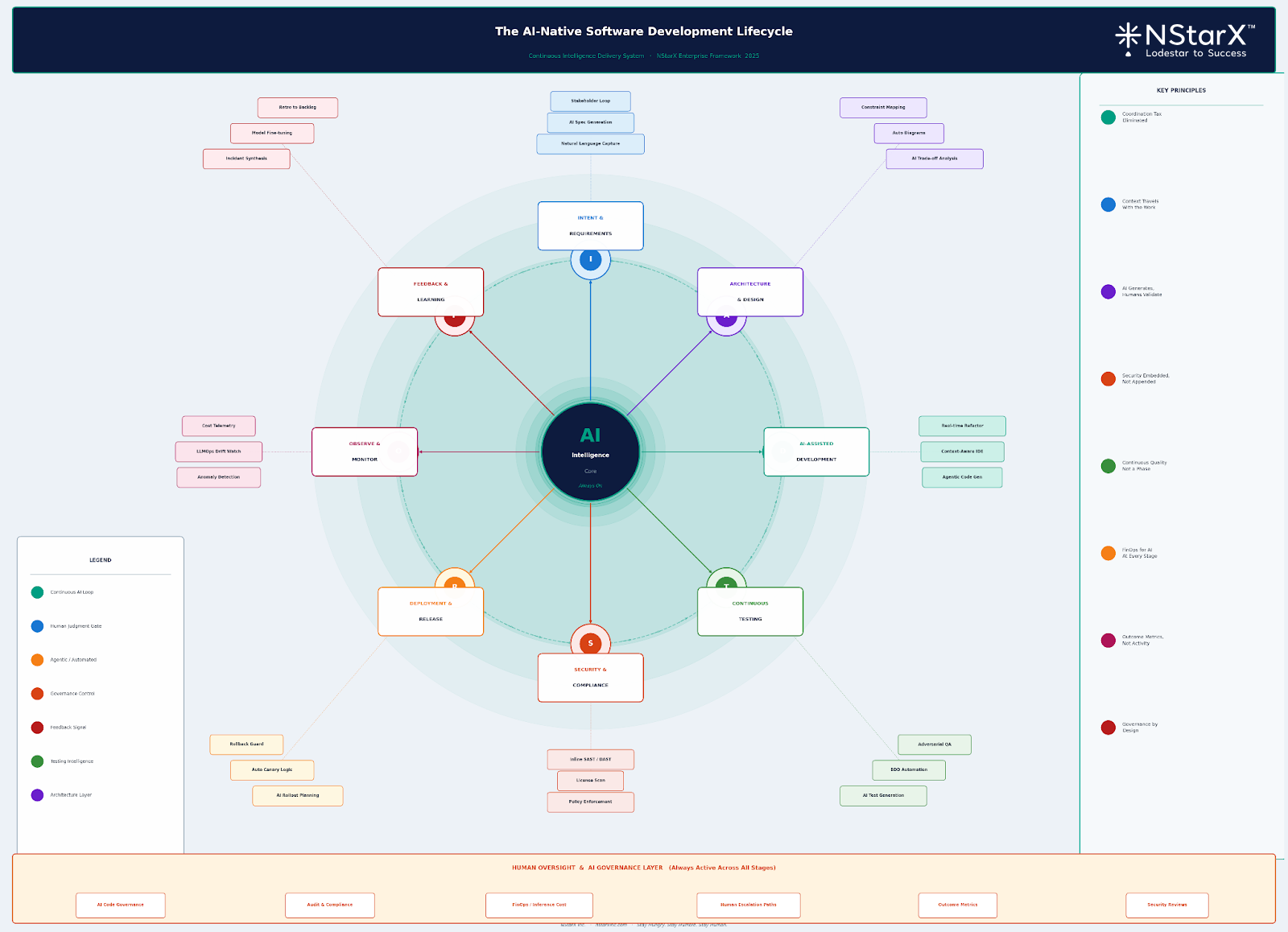

Here is the schematic as now NStarX Inc Engineering views the new age Software Development Life Cycle (SDLC) to appear as shown in Figure 1:

Figure 1: New Age SDLC (NStarX POV)

9. What 2026 Through 2030 Actually Looks Like

We want to resist the temptation to present a clean, confident roadmap for the next four years. The honest answer is that the rate of change in this space is high enough that any specific prediction beyond eighteen months should be held lightly. What we can offer with more confidence is a directional view of the inflection points ahead and the logic behind them.

2026: The Year You Either Redesign or Fall Behind

The dominant dynamic in 2026 is the gap between organizations that have merely adopted AI tools and organizations that have actually redesigned their delivery model around them. That gap will start to show up as a visible difference in competitive performance, which it has not done until now.

2026 is the year that organizations need to make a genuine commitment to the harder work: not “deploying AI” but “redesigning the workflow.” That means examining every stage of your software delivery chain and asking honestly whether it is still structured appropriately. It means building the governance infrastructure for AI-generated code before you need it, not after a major incident forces you to. It means establishing outcome metrics tied to business value, not activity proxies. The organizations that do this work in 2026 will have a compounding advantage through the rest of the decade.

2027: Agentic AI Becomes Part of Standard Practice

By 2027, AI coding agents operating with meaningful autonomy — taking a goal and iterating toward a solution across multiple steps without continuous human intervention — will move from experimental to mainstream in leading engineering organizations. The skills and organizational structures required to manage autonomous agents will need to be in place. This is the year that the governance and observability infrastructure built in 2026 starts to prove its value.

It is also the year that the skills bifurcation becomes pronounced. Engineering teams that have been building AI orchestration fluency and validation discipline will be operating at a fundamentally different level of leverage than those that have not. The talent market will reflect that difference.

2028: Three Quarters of Engineers Will Work With AI Daily, But That Is Not the Story

Usage saturation of AI tools among professional engineers is a near-certainty by 2028. The more important story is what those engineers are being asked to do with AI, and whether their organizations have built the structures that allow AI to generate real business value rather than just a larger volume of generated code.

Gartner has made a striking prediction: a large majority of software engineers will need to transition into roles fundamentally different from the ones they hold today. That is not a small change. It is the equivalent of telling the entire field to retrain for a different job. The organizations that build credible transition pathways will retain their best people. Those that do not will watch them leave for organizations that have.

2029–2030: The Question Has Shifted Entirely

By 2030, arguing about whether AI should be used in software development will sound like arguing about whether the internet should be used for business communication. The question will have become: are you building reliable, secure, accountable AI-native systems, or are you not? The productivity conversation will be irrelevant. What will matter is whether your engineering organization has the architectural judgment, the governance maturity, and the operational discipline to build things that work reliably at scale with AI as a core component of the delivery system.

The organizations that will be in the strongest position at the end of this decade are the ones making deliberate investments in that capacity right now, not because they have a clear view of exactly what 2030 will look like, but because they understand the direction of the change and are building structures that are flexible enough to navigate it.

10. What CTOs and CIOs Need to Do, Starting This Quarter

We have had the same conversation enough times that it now has a familiar shape. A technology leader comes in with a clear diagnosis of the problem: their AI adoption has stalled, the productivity gains are not materializing, the talent strategy feels reactive, and the governance gaps are starting to worry them. The diagnosis is usually accurate. What is harder is identifying the specific first moves that will create real traction rather than adding another layer to an already crowded strategic agenda.

Here is what we actually believe needs to happen, in order of urgency.

Build Governance Infrastructure Before the Crisis Forces You To

Every organization we work with has some version of the same governance gap: AI tools are proliferating across development teams, but the policies and processes for managing AI-generated outputs as a distinct category do not exist. There is no systematic approach to AI code attribution, no clear policy on what levels of AI autonomy are acceptable in production systems, no defined escalation path when an AI-assisted process produces an unexpected outcome.

Building this infrastructure after a major incident is far more expensive than building it before. The governance framework does not need to be elaborate; it needs to exist and be operational. At a minimum, that means an AI council or governance board with clear ownership, a set of AI-specific quality policies, and a defined process for reviewing and updating those policies as the technology evolves. Start there.
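To make "exist and be operational" concrete, here is a minimal sketch of such a policy expressed as code, so that it can be checked automatically rather than only written down. Everything in it is an assumption made for illustration: the three autonomy levels, the environment names, the `ai-governance-board` owner, and the `Change` and `evaluate` names are hypothetical, not an NStarX standard or any particular tool's API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 1      # AI proposes, a human writes the change
    DRAFT = 2        # AI writes the change, a human reviews before merge
    AUTONOMOUS = 3   # AI applies the change without prior human review


# Hypothetical policy values; a governance board would own and revise these.
MAX_AUTONOMY = {
    "sandbox": Autonomy.AUTONOMOUS,
    "staging": Autonomy.DRAFT,
    "production": Autonomy.DRAFT,
}
ESCALATION_OWNER = "ai-governance-board"


@dataclass(frozen=True)
class Change:
    environment: str
    autonomy: Autonomy
    ai_generated: bool
    attribution_recorded: bool


def evaluate(change: Change) -> str:
    """Return 'allow', 'reject: ...', or 'escalate: ...' for a proposed change."""
    # Attribution policy: AI-generated code must carry an attribution record.
    if change.ai_generated and not change.attribution_recorded:
        return "reject: AI-generated change has no attribution record"
    # Autonomy policy: unknown environments and over-ceiling autonomy escalate.
    ceiling = MAX_AUTONOMY.get(change.environment)
    if ceiling is None or change.autonomy > ceiling:
        return f"escalate: route to {ESCALATION_OWNER}"
    return "allow"


print(evaluate(Change("production", Autonomy.AUTONOMOUS, True, True)))
```

The point of the sketch is only that the three gaps named above (attribution, acceptable autonomy, escalation path) can each be reduced to a small, reviewable rule.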

Measure Outcomes, Not Activity

The metrics that most engineering organizations use to track AI adoption — code volume, task completion rates, pull request frequency — are measuring the wrong things. They measure whether AI is being used, not whether it is generating value. The shift to outcome metrics is not just philosophically preferable; it is practically necessary for making good investment decisions.

Define three to five metrics that connect engineering activity directly to business outcomes your organization cares about: time to deliver a customer-facing feature, cost per unit of software capability, reliability of production systems, speed of response to production incidents. Establish baselines before deploying AI at scale so you can actually see whether the investment is moving the needle on things that matter.
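As a rough sketch of what "establish baselines" can look like in practice, the snippet below compares the median of each outcome metric before and after AI adoption and reports the change, signed so that a positive number means improvement. The metric names, the sample values, and the choice of the median are assumptions for illustration; real data would come from your own delivery and incident systems.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class OutcomeMetric:
    """One outcome metric with samples from before and after AI adoption."""
    name: str
    lower_is_better: bool
    baseline: list[float]   # samples collected before scaling AI
    current: list[float]    # samples collected afterwards


def relative_improvement(metric: OutcomeMetric) -> float:
    """Median change relative to baseline; positive always means 'better'."""
    base = median(metric.baseline)
    now = median(metric.current)
    if base == 0:
        raise ValueError(f"baseline median for {metric.name!r} is zero")
    if metric.lower_is_better:
        return (base - now) / base
    return (now - base) / base


# Hypothetical sample data, for illustration only.
metrics = [
    OutcomeMetric("feature_lead_time_days", True, [20, 24, 18, 22], [15, 16, 14, 18]),
    OutcomeMetric("incident_response_minutes", True, [60, 45, 75], [55, 50, 70]),
]

for m in metrics:
    print(f"{m.name}: {relative_improvement(m):+.1%}")
```

Without the `baseline` samples there is nothing to divide by, which is the practical reason to collect them before the rollout rather than after.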

Design the Reskilling Pathway Now, Not When You Are Desperate for Talent

The talent market for AI-fluent engineers is already competitive and will get more so. The organizations that will be in the best position are not the ones trying to hire their way out of a skills gap — that strategy will be expensive and only partially effective. The ones in the best position will have built internal reskilling programs that develop AI fluency systematically in their existing engineering teams.

This means auditing your current team across the dimensions that actually matter: AI orchestration ability, output validation discipline, systems thinking, LLMOps fundamentals, algorithmic reasoning. Build a gap map. Design a twelve-month program with real milestones and real accountability. Create the on-ramps for early-career talent to develop judgment rather than just tool fluency. Make this a leadership priority, not an HR program.
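A gap map can start as something very small. The sketch below, under stated assumptions, scores each engineer on the five dimensions listed above and ranks the dimensions by average shortfall against a target level. The 0 to 5 scale, the target of 3, the engineer labels, and the scores are all hypothetical.

```python
# Dimensions taken from the text; the scale and targets are assumptions.
DIMENSIONS = [
    "ai_orchestration",
    "output_validation",
    "systems_thinking",
    "llmops_fundamentals",
    "algorithmic_reasoning",
]


def gap_map(
    assessments: dict[str, dict[str, int]],
    targets: dict[str, int],
) -> dict[str, float]:
    """Average shortfall per dimension across the team, largest gap first."""
    if not assessments:
        raise ValueError("no assessments provided")
    gaps = {}
    for dim in DIMENSIONS:
        # Scores above target count as zero shortfall, not as negative gaps.
        shortfalls = [max(0, targets[dim] - scores[dim]) for scores in assessments.values()]
        gaps[dim] = sum(shortfalls) / len(shortfalls)
    return dict(sorted(gaps.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical team data on a 0-5 scale.
targets = {dim: 3 for dim in DIMENSIONS}
team = {
    "engineer_a": {"ai_orchestration": 2, "output_validation": 1, "systems_thinking": 4,
                   "llmops_fundamentals": 1, "algorithmic_reasoning": 3},
    "engineer_b": {"ai_orchestration": 3, "output_validation": 2, "systems_thinking": 3,
                   "llmops_fundamentals": 0, "algorithmic_reasoning": 4},
}

for dim, gap in gap_map(team, targets).items():
    print(f"{dim}: average shortfall {gap:.1f}")
```

The ranked output is what feeds the twelve-month program: the dimensions at the top are where the milestones should concentrate.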

Run the Organizational Design Experiment

The structural question — whether your engineering organization is designed for the world where AI enables small, end-to-end teams to outperform larger specialist organizations — cannot be answered in a strategy document. It needs to be tested.

Pick one or two high-value delivery streams. Assemble small, end-to-end teams with strong AI leverage and clear outcome accountability. Compare their performance against your existing delivery model on equivalent work. Be honest about what the data shows. Use it to make real decisions about organizational structure, not to confirm existing assumptions.

Get Serious About AI Security and Financial Discipline

AI-assisted development at scale introduces security risk through volume: more code, generated faster, with less consistent review. It introduces financial risk through inference costs that scale in ways that are hard to predict from pilot data. Both require dedicated operational disciplines that most engineering organizations are just beginning to develop.

Embed security scanning in the code generation loop, not at the end of the pipeline. Build AI cost management into your engineering financial operations with the same rigor you applied to cloud cost management when cloud adoption scaled. These are not optional disciplines. They are the difference between AI adoption that generates sustainable competitive advantage and AI adoption that generates a growing tail of security and financial exposure.
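The following sketch shows one possible shape for both disciplines at once: every generated candidate is scanned before it is accepted, scanner findings are fed back into the next attempt, and each model call is charged against a spend cap. It is illustrative only. `generate` and `scan` are placeholders for whatever model client and scanner you actually use, and `fake_generate`, `fake_scan`, the costs, and the budget limit are invented stand-ins so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GenerationBudget:
    """Tracks inference spend against a cap (units are an assumption)."""
    limit: float
    spent: float = 0.0

    def charge(self, cost: float) -> None:
        if self.spent + cost > self.limit:
            raise RuntimeError("inference budget exceeded")
        self.spent += cost


def generate_with_scan(
    prompt: str,
    generate: Callable[[str], tuple[str, float]],  # hypothetical: returns (code, cost)
    scan: Callable[[str], list[str]],              # hypothetical: returns findings
    budget: GenerationBudget,
    max_attempts: int = 3,
) -> str:
    """Return the first candidate with no findings, retrying with feedback."""
    current_prompt = prompt
    for _ in range(max_attempts):
        code, cost = generate(current_prompt)
        budget.charge(cost)
        findings = scan(code)
        if not findings:
            return code
        current_prompt = prompt + "\nFix these findings:\n" + "\n".join(findings)
    raise RuntimeError("no candidate passed the security scan")


def fake_generate(prompt: str) -> tuple[str, float]:
    # Stand-in for a model call: emits unsafe code until it sees findings.
    if "Fix these findings" in prompt:
        return "subprocess.run(['ls', path], check=True)", 0.02
    return "os.system('ls ' + path)", 0.02


def fake_scan(code: str) -> list[str]:
    # Stand-in for a real scanner: flags a single pattern only.
    return ["os.system with string concatenation"] if "os.system(" in code else []


budget = GenerationBudget(limit=0.10)
accepted = generate_with_scan("list a directory", fake_generate, fake_scan, budget)
print(accepted, f"(spent {budget.spent:.2f} of {budget.limit:.2f})")
```

The design choice worth noting is that the scan sits inside the loop, so an unsafe candidate never leaves it, and the budget check sits on every call, so cost is visible per feature rather than discovered on an invoice.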

11. The New Moat Is Not What You Know. It Is What You Can Make Work.

There is a phrase that has been sitting with us throughout all of the enterprise AI work we have done over the past two years: the moat is shifting. The old competitive advantage in software development was rare knowledge — deep expertise in a specific domain, a framework, a system. That knowledge created a barrier because it was genuinely hard to acquire and broadly useful once you had it.

AI has not eliminated the value of deep knowledge. But it has changed the economics significantly. If a model can retrieve and apply domain knowledge on demand, then merely possessing that knowledge is no longer sufficient differentiation. The new scarcity is not knowing the right things. It is being able to take ambiguous intent and transform it into reliable, secure, production-quality systems that work at scale. That is a compound skill: part judgment, part craft, part organizational design, part technical depth. It cannot be outsourced to a model. It has to be built, deliberately, over time.

That is what the Coordination Tax framework ultimately points toward. Reducing the hops in your delivery chain is not just an efficiency play. It is a quality play and a competitive play. Every handoff you eliminate removes one more opportunity for intent to degrade. Every specialist who can now own a broader scope of the delivery chain creates an opportunity for better outcomes that did not exist in the fragmented model.

But the organizations that capture this opportunity are not the ones that hand out AI tool subscriptions and declare transformation. They are the ones that ask the harder questions: What should our delivery organization actually look like in 2028? What do our engineers need to know that AI cannot know for them? How do we build the governance and observability infrastructure that makes autonomous AI systems trustworthy at scale? How do we create the talent pipeline for a profession that is being fundamentally redesigned?

At NStarX, this is the work we are doing with our clients every day: not selling AI tools, but helping enterprises redesign their delivery systems, their talent strategies, and their governance frameworks for a world where AI is a core component of how software gets built. The Service-as-Software model we operate on is built for exactly this moment — helping organizations navigate the gap between the impressive demos and the reliable production systems.

The window is open. The organizations that move thoughtfully and decisively in the next eighteen months will establish advantages that compound. The ones that wait for the market to clarify further will find themselves playing catch-up in a market that no longer has the patience for it.

References and Further Reading

The following sources were consulted in developing the perspectives and analysis presented in this article. All statistics and data points have been drawn from these primary and secondary sources.

- NStarX: Coordination Tax & Builder Archetype Framework (2026).

- Bain & Company. (2025). “From Pilots to Payoff: Generative AI in Software Development.” Technology Report. https://www.bain.com/insights/from-pilots-to-payoff-generative-ai-in-software-development-technology-report-2025/

- MIT Technology Review. (2026). “AI Coding Is Now Everywhere — But Not Everyone Is Convinced.” https://www.technologyreview.com/2025/12/15/1128352/rise-of-ai-coding-developers-2026/

- Google Cloud / DORA. (2025). “State of AI-Assisted Software Development Report.” https://cloud.google.com/resources/content/2025-dora-ai-assisted-software-development-report

- Grand View Research. (2024). “Global AI in Software Development Market Report, Forecast to 2033.” https://www.grandviewresearch.com/industry-analysis/ai-software-development-market-report

- DevOps.com. (2025). “Five Trends That Will Drive Software Development in 2025.” https://devops.com/five-trends-that-will-drive-software-development-in-2025-2/

- BayTech Consulting. (2026). “Unlocking 2026: The Future of AI-Driven Software Development.” https://www.baytechconsulting.com/blog/unlocking-ai-software-development-2026

- BayTech Consulting. (2025). “AI and Software Development 2025.” https://www.baytechconsulting.com/blog/ai-and-software-development-2025

- Google Cloud Blog. (2025). “Real-World Gen AI Use Cases from Leading Organizations.” https://cloud.google.com/transform/101-real-world-generative-ai-use-cases-from-industry-leaders

- iTransition. (2026). “Software Development Statistics for 2026: Key Facts & Trends.” https://www.itransition.com/software-development/statistics

- CIO Dive. (2026). “The Challenge for Software Engineers in 2026 — and Beyond.” https://www.ciodive.com/news/software-development-challenges-2026-CIO/808413/

- CIO Magazine. (2025). “State of IT Jobs: AI Sparks a Rapidly Changing Market for Skills.” https://www.cio.com/article/4134254/state-of-it-jobs-ai-sparks-rapidly-changing-market-for-skills.html

- CIO Magazine. (2025). “Future-Proof Tech Skills for the Evolving AI Job Market.” https://www.cio.com/article/4134749/future-proof-tech-skills-for-the-evolving-ai-job-market.html

- CIO Magazine. (2026). “7 Challenges IT Leaders Will Face in 2026.” https://www.cio.com/article/4114004/7-challenges-it-leaders-will-face-in-2026.html

- CIO Magazine. (2025). “AI-Native Software Engineering May Be Closer Than Developers Think.” https://www.cio.com/article/3567138/ai-native-software-engineering-may-be-closer-than-developers-think.html

- CIO Magazine. (2025). “The Future of Programming and the New Role of the Programmer in the AI Era.” https://www.cio.com/article/4085335/the-future-of-programming-and-the-new-role-of-the-programmer-in-the-ai-era.html

- CIO Magazine. (2025). “The 10 Hottest IT Skills for 2026.” https://www.cio.com/article/4096592/the-10-hottest-it-skills-for-2026.html

- Aumni Tech Works. (2026). “The 2026 CIO + CTO Outlook: Trends Reshaping Enterprise Technology Leadership.” https://www.aumnitechworks.com/blogs/cio-cto-2026-ai-native-gcc

- PwC Middle East. (2026). “Agentic SDLC in Practice: The Rise of Autonomous Software Delivery.” https://www.pwc.com/m1/en/publications/2026/docs/future-of-solutions-dev-and-delivery-in-the-rise-of-gen-ai.pdf

- Research.com. (2026). “AI, Automation, and the Future of Software Development Degree Careers.” https://research.com/advice/ai-automation-and-the-future-of-software-development-degree-careers

- Stack Overflow. (2025). “Developer Survey 2025.” https://survey.stackoverflow.co/2025/

- LinkedIn Economic Graph. (2025). “AI Labor Market Update.” https://economicgraph.linkedin.com/research/ai-jobs

- World Economic Forum. (2025). “Future of Jobs Report 2025.” https://www.weforum.org/publications/the-future-of-jobs-report-2025/

- Gartner Research. (2025). “AI in Software Engineering — Forecasts and Practitioner Guidance.” https://www.gartner.com/en/newsroom

- NVIDIA. (2025). “DGX Spark and DGX Station Personal AI Supercomputers.” https://www.nvidia.com/en-us/products/workstations/