By the NStarX Engineering Team | Enterprise AI Strategy & Architecture


Somewhere right now, a well-funded enterprise is reviewing slides from its eleventh AI pilot. The model hit 94% accuracy in the sandbox. The vendor presentation was impressive. The steering committee approved Phase Two.

And twelve months from now, it will still be a pilot.

This isn’t cynicism — it’s a pattern that has repeated itself across industries with remarkable consistency. Despite record levels of AI investment, the majority of enterprise AI initiatives stall before they generate a single dollar of measurable business impact. According to McKinsey’s 2023 State of AI report, while more than half of organizations have adopted AI in at least one function, only a small percentage have successfully scaled it to drive enterprise-wide financial outcomes. Gartner has estimated that through 2025, roughly 80% of AI projects will fail to reach production deployment.

The uncomfortable truth is this: technology is no longer the barrier. Leadership, architecture, and operational discipline are.

For CTOs sitting at the intersection of technology ambition and business accountability, the stakes couldn’t be higher. This article is a frank conversation about why AI pilots stall — and a practical playbook for turning AI investment into EBITDA that shows up on the income statement.

Section 1: The AI Pilot Trap — When Innovation Theater Replaces Business Impact

The Experiment Proliferation Problem

Ask the head of innovation at most large enterprises how many AI pilots are active, and you’ll often get a proud answer: dozens, sometimes hundreds. The assumption is that more experiments mean more chances of finding a winner. In practice, the opposite tends to be true.

Research from MIT Sloan Management Review found that organizations running a high volume of simultaneous AI pilots were significantly less likely to bring any single initiative to production scale — a condition researchers have termed “pilot purgatory”. The reason is resource physics: each pilot needs data access, engineering bandwidth, stakeholder time, and governance attention. Spread thinly across fifty experiments, none of these assets accumulates to the critical mass required for production deployment.

There’s also a cultural dimension. Pilots are politically safe. They signal ambition without demanding commitment. They can be quietly shelved when priorities shift, without anyone being accountable for failure. Full-scale deployment, by contrast, requires executive sponsorship, cross-functional coordination, and acceptance of the fact that a live AI system in production will occasionally make a mistake in front of a real customer or regulator.

The result: organizations accumulate an impressive portfolio of pilot results and almost nothing in production.

The Production Deployment Gap

The distance between a pilot environment and a production system is not measured in code — it’s measured in organizational complexity. A pilot runs on a data extract that someone manually prepared for a demo. A production system requires real-time data pipelines, access controls, failover mechanisms, monitoring dashboards, and a clear escalation path when the model behaves unexpectedly.

RAND Corporation’s research on AI deployment found that over 80% of AI models developed in enterprise environments are never deployed to production. The gap isn’t usually a lack of technical sophistication. It’s the absence of the operational infrastructure — the MLOps tooling, the model governance frameworks, the change management processes — that makes a model reliable enough to trust with real business decisions.

Fragmented Tools and Data: The Invisible Tax

One of the most consistent patterns in failed enterprise AI initiatives is the technology stack that produced them: a mix of independently procured point solutions, each serving a specific team or use case, none talking meaningfully to the others.

Business Unit A uses Azure OpenAI. The data science team built something on AWS SageMaker. The customer experience team licensed a conversational AI platform from a startup. Finance uses a BI tool with an AI assistant bolted on. And the data these tools need? Distributed across Salesforce, SAP, Snowflake, three legacy databases, and a set of Excel files maintained by people who have long since left the company.

IBM’s Global AI Adoption Index identified data complexity as the single most cited barrier to AI implementation, with 35% of enterprises pointing to fragmented data infrastructure as the primary obstacle to scaling. When every AI initiative has to independently solve the data access problem, organizations end up spending most of their AI budget on data engineering and almost nothing on the business value that data was supposed to unlock.

The AI Pilot Trap, in essence, is an organizational architecture problem masquerading as a technology problem.

Section 2: The Enterprise AI Maturity Model — An Honest Assessment

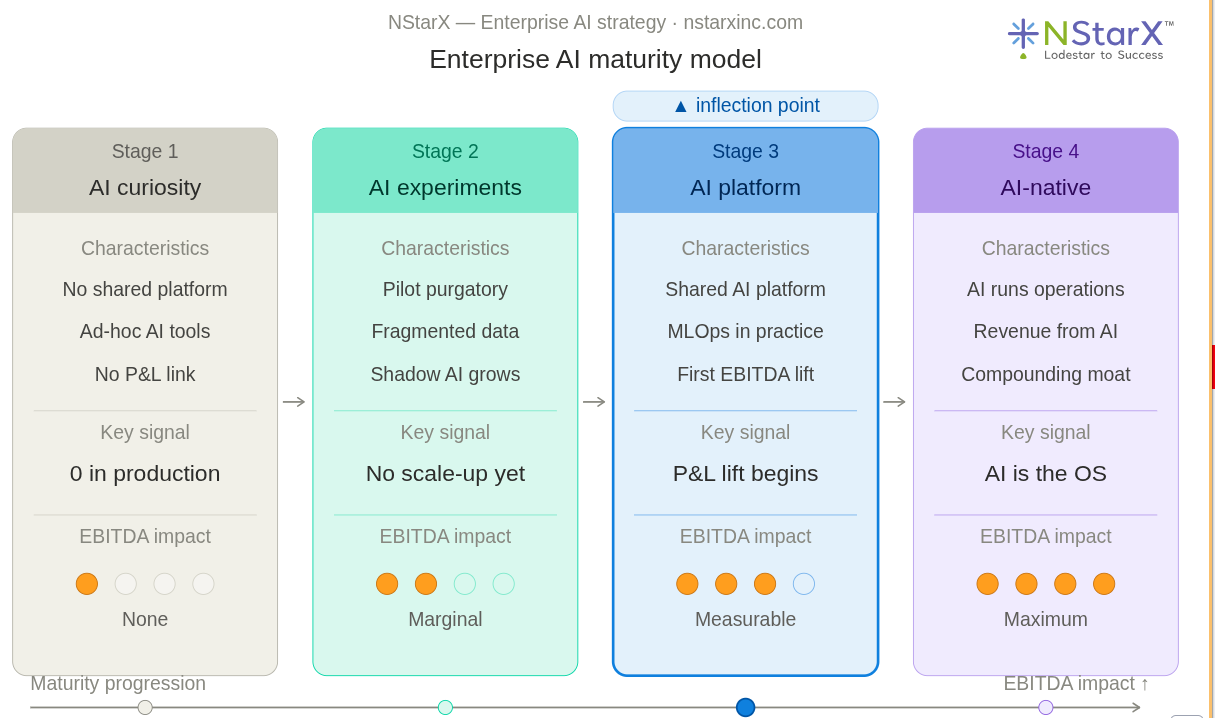

Before a CTO can chart a path forward, they need an accurate map of where their organization actually stands. AI maturity doesn’t arrive all at once — it progresses through identifiable stages, each with its own characteristics, challenges, and strategic imperatives. Drawing on frameworks from Harvard Business School, Accenture’s Technology Vision research, and industry practice, we can describe four stages:

Stage 1 — AI Curiosity

The organization is aware of AI’s potential and is beginning to explore. There may be a ChatGPT enterprise license in place, a few data scientists on the team, and occasional conversations at the board level about AI strategy. But there is no coherent platform, no dedicated governance, and no accountability for business outcomes from AI.

At this stage, the primary risk is mistaking vendor demonstrations for organizational capability. Curiosity is a starting point, not a strategy.

Indicators: Scattered use of AI tools at the individual level. No shared infrastructure. AI discussed as a future initiative, not a current operational priority.

Stage 2 — AI Experimentation

This is where most large enterprises honestly sit today, despite often believing they’re further along. Multiple teams are running pilots. There are some genuine early results. Data literacy is improving. The technology has proven it can work in controlled conditions.

The primary risk at this stage is exactly what was described in Section 1: proliferating experiments without a deliberate path to production. Shadow AI, where employees use consumer AI tools in ways that bypass IT governance, is also a growing concern, both as a productivity phenomenon and as a compliance liability.

Indicators: Multiple active pilots. Some documented quick wins. No shared platform or model governance. Business units acquiring AI tools independently.

Stage 3 — AI Platform

The inflection point. The organization has made a deliberate architectural commitment to building a shared AI platform: standardized data pipelines, a model registry, deployment infrastructure, governance tooling, and a centralized team responsible for AI operations. Business KPIs are being tracked against AI initiatives.

This stage is hard to reach and easy to underestimate in complexity. But it is the stage that separates organizations that generate measurable AI ROI from those that don’t.

Indicators: Centralized AI infrastructure in place. Cross-functional AI governance active. First measurable contributions to cost reduction or productivity. Business owners accountable for AI-driven KPIs.

Stage 4 — AI-Native Enterprise

AI is not a project. It is the operating model. Decisions across customer experience, supply chain, product development, and financial management are continuously informed or executed by AI systems. The competitive advantage is structural, compounding, and difficult for competitors to replicate quickly.

Indicators: AI embedded in core business workflows. Continuous model improvement at scale. AI-driven revenue streams alongside operational efficiency gains. AI considered a core strategic asset in investor communications.

The critical insight from this model is that Stage 3 cannot be skipped on the path to Stage 4. Organizations that try to jump from experimentation directly to AI-native transformation inevitably find themselves back at Stage 2, with a more expensive and more fragmented stack.

Figure 1: NStarX Enterprise Maturity Model

Section 3: The Architecture Required to Scale AI — Building the Platform, Not the Feature

For CTOs committed to moving to Stage 3 and beyond, the architectural decisions made in the next 12 to 18 months will define the AI trajectory of their organization for years. Four layers must be deliberately designed and integrated.

The Data Layer: The Foundation Everything Else Rests On

There is no AI at scale without a data strategy at scale. This is not a new insight, but it remains the most chronically underfunded dimension of enterprise AI investment.

Scaling AI requires a unified data architecture — one that allows data from disparate operational systems, customer platforms, external APIs, and historical archives to be reliably accessed, governed, and consumed by models. The modern answer to this challenge is the data lakehouse: an architecture that combines the cost efficiency and flexibility of a data lake with the performance, structure, and governance capabilities of a data warehouse.

Platforms such as Databricks Lakehouse Platform, Snowflake, and Google BigQuery have made this architecture progressively more accessible. But the architectural principles matter more than the vendor selection:

- Data lineage: Know where every data point came from and how it was transformed before it reached a model

- Feature stores: Reusable, versioned feature sets that prevent teams from independently recomputing the same derived data

- Real-time ingestion: Models that act on stale data produce stale decisions; streaming pipelines are increasingly necessary for high-value use cases

- Data quality monitoring: Automated checks that surface data anomalies before they corrupt model performance in production
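As a concrete illustration of the data quality monitoring principle, the sketch below runs completeness and range checks on one batch of records before it is handed to a model. It is a minimal example under stated assumptions, not a reference implementation: the `check_batch` function, the `QualityReport` type, the 5% null-rate limit, and the invoice fields are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass
class QualityReport:
    total_rows: int
    null_rate: Dict[str, float]   # fraction of rows where the column is missing
    out_of_range: Dict[str, int]  # number of rows outside the allowed range
    passed: bool


def check_batch(
    rows: List[Dict[str, Any]],
    required: List[str],
    ranges: Dict[str, Tuple[float, float]],
    max_null_rate: float = 0.05,  # assumed tolerance; tune per dataset
) -> QualityReport:
    """Run basic completeness and range checks on one batch of records."""
    total = len(rows)
    null_rate = {}
    for col in required:
        missing = sum(1 for r in rows if r.get(col) is None)
        null_rate[col] = missing / total if total else 1.0
    out_of_range = {}
    for col, (lo, hi) in ranges.items():
        out_of_range[col] = sum(
            1 for r in rows
            if r.get(col) is not None and not (lo <= r[col] <= hi)
        )
    passed = (
        total > 0
        and all(rate <= max_null_rate for rate in null_rate.values())
        and all(count == 0 for count in out_of_range.values())
    )
    return QualityReport(total, null_rate, out_of_range, passed)


# Hypothetical batch: one negative amount and one missing amount.
batch = [
    {"invoice_id": "A1", "amount": 120.0},
    {"invoice_id": "A2", "amount": -5.0},
    {"invoice_id": "A3", "amount": None},
]
report = check_batch(
    batch,
    required=["invoice_id", "amount"],
    ranges={"amount": (0.0, 1_000_000.0)},
)
print(report.passed)  # False: the batch should be quarantined, not scored
```

In practice a check like this would run inside the ingestion pipeline, so that a failing batch is held back and surfaced to the data owner rather than silently degrading a production model.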

The Model Layer: Owning Intelligence, Not Just Renting Access

The democratization of foundation models — GPT-4o, Claude, Gemini, Llama 3, Mistral — has created the impression that AI capability is now a commodity available to anyone with an API key. In one sense, this is true. In the sense that matters strategically, it is not.

The organizations that will extract durable competitive advantage from AI are those that build proprietary model capabilities on top of foundation models. This means fine-tuning models on domain-specific data, building retrieval-augmented generation (RAG) pipelines that ground model outputs in proprietary knowledge bases, and developing evaluation frameworks that reflect the specific accuracy and reliability standards of the business.
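To make the RAG idea concrete, here is a toy sketch of the retrieve-then-ground step. It assumes a tiny in-memory knowledge base and uses bag-of-words cosine similarity as a stand-in for the embedding search a production pipeline would typically use; the function names, the policy snippets, and the prompt wording are all hypothetical, and the call to an actual model is deliberately left out.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Return the k documents most similar to the query, best first."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(d)), d) for d in documents]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to retrieved context."""
    context = "\n".join(f"- {doc}" for _, doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical proprietary knowledge base.
knowledge_base = [
    "Invoices above 10000 USD require approval from the finance director.",
    "Vendor onboarding requires a signed master services agreement.",
    "Travel expenses are reimbursed within 30 days of submission.",
]
prompt = build_prompt(
    "Who must approve an invoice above 10000 USD?", knowledge_base, k=1
)
print(prompt)
```

The point of the pattern is that the model answers from the organization's own documents rather than from its general training data, which is also what makes retrieval quality something that has to be monitored separately from generation quality.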

The decision framework is straightforward: if a capability is differentiating and you have proprietary data that would improve model performance, invest in fine-tuning or custom training. If it’s commodity intelligence — summarization, basic classification, general language tasks — use a foundation model API and invest resources elsewhere.

The Agent Orchestration Layer: From Generating Outputs to Taking Actions

If the model layer is about intelligence, the agent orchestration layer is about action. Standalone language models generate text. AI agents complete tasks, call tools, retrieve information, make decisions, and hand off to other agents in a coordinated workflow.

Multi-agent frameworks, including LangGraph, CrewAI, Microsoft AutoGen, and Anthropic’s agent architecture patterns, are enabling a new class of enterprise AI application [9]: systems that can receive a complex business task, decompose it into subtasks, execute each step using the appropriate tool or model, and return a completed result with a full audit trail.

For an enterprise, the implications are significant. A well-designed agent system in accounts payable doesn’t just flag an anomalous invoice — it cross-references the purchase order, queries the vendor database, drafts a resolution note, routes it to the appropriate approver, and updates the ERP. What previously required three people and two days can happen in minutes, with a human reviewing only the exceptions.

The architectural challenge is building agent workflows that are modular (components can be updated independently), observable (every step is logged and auditable), and interruptible (human review can be triggered at any point in the workflow based on confidence thresholds or risk signals). Agents without these properties are operational liabilities, not assets.
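The three properties above can be sketched in a few lines of plain Python. This is an illustrative skeleton, not the API of LangGraph, CrewAI, AutoGen, or any other framework: the `Workflow` class, the step functions, the confidence values, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

# A step takes the shared state and returns (updated state, confidence in [0, 1]).
Step = Callable[[Dict[str, Any]], Tuple[Dict[str, Any], float]]


@dataclass
class Workflow:
    steps: List[Tuple[str, Step]]  # modular: each step can be replaced on its own
    min_confidence: float = 0.8    # interruptible: below this, pause for review
    audit_log: List[Dict[str, Any]] = field(default_factory=list)  # observable

    def run(self, state: Dict[str, Any]) -> Dict[str, Any]:
        for name, step in self.steps:
            state, confidence = step(dict(state))
            # Record every step, including the one that triggers a pause.
            self.audit_log.append(
                {"step": name, "confidence": confidence, "state": dict(state)}
            )
            if confidence < self.min_confidence:
                state["status"] = "needs_human_review"
                state["paused_at"] = name
                return state
        state["status"] = "completed"
        return state


def match_purchase_order(state):
    # Hypothetical check: the invoice amount should equal the PO amount.
    matched = state["invoice_amount"] == state["po_amount"]
    state["po_matched"] = matched
    return state, (0.95 if matched else 0.40)


def draft_resolution(state):
    state["note"] = f"Invoice {state['invoice_id']} matches its purchase order."
    return state, 0.90


workflow = Workflow(
    steps=[("match_po", match_purchase_order), ("draft_note", draft_resolution)]
)
clean = workflow.run({"invoice_id": "INV-1", "invoice_amount": 500, "po_amount": 500})
flagged = workflow.run({"invoice_id": "INV-2", "invoice_amount": 900, "po_amount": 500})
print(clean["status"], flagged["status"], flagged["paused_at"])
```

The mismatched invoice stops at the first step and is handed to a reviewer with the audit log intact, while the clean invoice runs to completion without human involvement.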

The Governance Layer: The Infrastructure for Trust at Scale

Governance is not a compliance exercise appended to the AI strategy. It is the architectural layer that determines whether an AI platform can operate at enterprise scale without creating legal, regulatory, or reputational exposure.

A mature governance layer includes:

- Model cards and documentation: Standardized records for every model in production — what data it was trained on, what it was designed to do, what its known limitations are, and who is accountable for its performance

- Bias and fairness auditing: Systematic evaluation of model outputs across demographic segments, integrated into the deployment pipeline rather than conducted as a one-time review

- Explainability tooling: The ability for business users, auditors, and regulators to understand why a model made a specific decision, which is increasingly a legal requirement under the EU AI Act and various U.S. state-level regulations [10]

- Access and data residency controls: Particularly critical for enterprises operating across multiple jurisdictions with different data sovereignty requirements under GDPR, CCPA, and sector-specific regulations

- Version control and rollback: The ability to roll back a model to a previous version within minutes if a production issue is detected
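Two of the items above, model cards and rollback, lend themselves to a short sketch. The example below assumes a simple in-memory registry; the `ModelCard` fields mirror the documentation items listed above, while the class names, the model name, and the field values are hypothetical. A real deployment would more likely rely on the registry of an MLOps platform than on hand-written code like this.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelCard:
    name: str
    version: str
    training_data: str      # what data the model was trained on
    intended_use: str       # what it was designed to do
    known_limitations: str  # where it should not be trusted
    owner: str              # who is accountable for its performance


class ModelRegistry:
    """Keeps the promotion history per model so rollback is a single call."""

    def __init__(self) -> None:
        self._history: Dict[str, List[ModelCard]] = {}

    def promote(self, card: ModelCard) -> None:
        self._history.setdefault(card.name, []).append(card)

    def current(self, name: str) -> ModelCard:
        return self._history[name][-1]

    def rollback(self, name: str) -> ModelCard:
        versions = self._history[name]
        if len(versions) < 2:
            raise ValueError(f"No earlier version of {name!r} to roll back to")
        versions.pop()       # retire the version currently in production
        return versions[-1]  # the previous version becomes current again


registry = ModelRegistry()
registry.promote(ModelCard(
    "invoice-classifier", "1.0", "2022 invoices",
    "Route invoices to approvers", "English-language invoices only",
    "VP Finance Operations",
))
registry.promote(ModelCard(
    "invoice-classifier", "1.1", "2022-2023 invoices",
    "Route invoices to approvers", "English-language invoices only",
    "VP Finance Operations",
))
restored = registry.rollback("invoice-classifier")
print(restored.version)  # 1.0
```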

The NIST Artificial Intelligence Risk Management Framework (AI RMF 1.0) provides a rigorous and increasingly adopted architecture for enterprise AI governance, organized around four core functions: Govern, Map, Measure, and Manage. CTOs building or upgrading their governance layer would do well to treat the NIST AI RMF as a design reference, not merely a compliance benchmark.

Section 4: Operationalizing AI — The Unglamorous Work That Makes Everything Else Possible

If there is a single reason enterprise AI initiatives fail to sustain their early promise, it is the underinvestment in AI operations — the disciplines, tools, and practices required to keep AI systems performing reliably in production over time. This work rarely appears in vendor pitch decks. It almost never gets coverage at AI conferences. It is, however, the difference between a model that delivers value for a quarter and a platform that compounds value for years.

MLOps and LLMOps: Treating Models as Products

MLOps — the discipline of applying software engineering and DevOps principles to machine learning workflows — has matured significantly over the past several years. Platforms including MLflow, Google Vertex AI, and AWS SageMaker MLOps have industrialized the core capabilities: automated training pipelines, model versioning, deployment orchestration, and production monitoring.

LLMOps extends these principles to large language model applications, which introduce a distinct set of operational challenges. Prompt engineering is not a one-time activity — it is a continuous discipline requiring version control, A/B testing, and systematic evaluation. Retrieval-augmented generation pipelines require monitoring for retrieval quality, not just generation quality. Output evaluation at scale requires both automated metrics and periodic human review, since the “correctness” of a long-form language model output is often not amenable to simple programmatic scoring.

Organizations building LLM applications in production are increasingly turning to platforms like LangSmith (from LangChain), Weights & Biases, and Arize AI for the observability infrastructure this discipline demands.

The cultural principle is simple, even if execution is hard: every AI model in production is a product. It has a product manager. It has an SLA. It has a roadmap. It has a deprecation policy. Organizations that treat deployed models as completed projects — rather than live products requiring ongoing investment — will find their AI ROI degrading silently over time.

Monitoring, Evaluation, and Guardrails

Production AI systems do not hold still. Data distributions shift as business conditions evolve. User behavior changes in ways that gradually push models outside the distribution they were trained on. A credit risk model trained on pre-pandemic data may behave unexpectedly as macroeconomic conditions shift. A customer service AI may develop blind spots as product catalogs update and pricing changes.

Continuous monitoring must cover three dimensions:

Performance monitoring tracks the technical metrics (accuracy, latency, throughput, error rates) that indicate whether the model is functioning as designed.

Data drift detection uses statistical methods to identify when the distribution of incoming data has shifted significantly from the training distribution, which often predicts impending model degradation before performance metrics visibly decline.

Output quality evaluation is particularly challenging for generative AI applications, where automated metrics like ROUGE or BERTScore are imperfect proxies for the semantic quality and factual accuracy of model outputs; best practice combines automated scoring with sampled human review.
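One widely used statistic for the data drift detection described above is the Population Stability Index (PSI), which compares how a feature is distributed across bins in training data versus live data. The sketch below is one possible implementation, chosen here for illustration rather than taken from any specific monitoring product; the bin count, the smoothing constant, and the sample data are assumptions, and the frequently quoted alert levels of roughly 0.1 and 0.25 are rules of thumb that should be tuned per feature.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index of `actual` against `expected`.

    Bins are equal-width over the range of `expected`; values outside
    that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # assumed smoothing so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training data versus a shifted live sample.
training = [float(v) for v in range(100)]
live_same = list(training)
live_shifted = [v + 50.0 for v in training]

print(psi(training, live_same))            # 0.0: identical distributions
print(psi(training, live_shifted) > 0.25)  # True: large shift, investigate
```

A drift score like this is cheap to compute per feature on every scoring batch, which is what allows it to flag trouble before accuracy metrics, which often depend on delayed ground truth, start to move.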

Guardrails — constitutional constraints that prevent models from generating harmful, policy-violating, or factually unreliable outputs — are the last line of defense before a model’s output reaches a user or a downstream system. These are not optional features for enterprise deployments; they are table stakes. Frameworks like Anthropic’s Constitutional AI methodology and NVIDIA’s NeMo Guardrails provide reference architectures for implementing robust output control.

Human-in-the-Loop: Designing for Augmentation, Not Replacement

The most resilient enterprise AI deployments in practice are those designed from the outset around a deliberate human-in-the-loop (HITL) model. This does not mean humans reviewing every AI output — that approach eliminates the efficiency rationale for automation. It means identifying, with precision, the decision points where human judgment materially improves outcomes, and building structured review workflows around those specific points.

A well-designed HITL system might route 95% of decisions through automated AI workflows while escalating the 5% that fall below a confidence threshold, involve regulatory exposure, or display statistical characteristics that the system was not designed to handle. The human role shifts from executing routine decisions to reviewing edge cases and continuously improving the decision rules that govern escalation.
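The escalation logic described above can be expressed as a small routing function. This is a minimal sketch: the `Decision` fields, the 0.9 confidence floor, and the queue names are hypothetical, and in a real system the regulatory and out-of-distribution flags would come from upstream policy rules and drift monitors.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    confidence: float      # model confidence in [0, 1]
    regulated: bool        # True if the decision carries regulatory exposure
    in_distribution: bool  # False if inputs look unlike the training data


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Return the queue a decision should go to."""
    if decision.regulated:
        return "human_review"  # regulatory exposure always gets a reviewer
    if not decision.in_distribution:
        return "human_review"  # the system was not designed for this input
    if decision.confidence < min_confidence:
        return "human_review"  # the model itself is unsure
    return "automated"


cases = [
    Decision("C-1", confidence=0.97, regulated=False, in_distribution=True),
    Decision("C-2", confidence=0.62, regulated=False, in_distribution=True),
    Decision("C-3", confidence=0.99, regulated=True, in_distribution=True),
    Decision("C-4", confidence=0.95, regulated=False, in_distribution=False),
]
for case in cases:
    print(case.case_id, route(case))
```

Keeping the rules this explicit is deliberate: the escalation criteria are themselves something reviewers are expected to refine over time, so they should be readable and versioned like any other business rule.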

Research in human-computer interaction has consistently found that this type of “human-on-the-loop” design — where humans set parameters and review anomalies rather than executing every step — outperforms both full automation and full human execution on complex, high-volume decision tasks.

Section 5: The ROI Framework — Connecting AI to the Income Statement

The most important conversation that enterprise AI teams are not having often enough is this one: How, specifically, does this AI initiative show up in EBITDA?

Answering that question with rigor requires mapping AI investment to three distinct financial levers.

Cost Reduction: The Most Direct and Measurable Path

The most immediate path from AI deployment to EBITDA contribution is the automation of high-volume, low-discretion tasks that currently consume significant operational cost. The ROI case here is direct and, when scoped properly, relatively fast to materialize.

Documented enterprise use cases with strong cost reduction evidence include:

Intelligent document processing — automating the extraction, classification, and routing of structured and unstructured documents such as contracts, insurance claims, invoices, and regulatory filings. Studies from Deloitte have found that AI-powered document processing can reduce manual review effort by 60 to 80% in high-volume back-office operations.

Customer service automation: AI agents handling Tier 1 and Tier 2 customer inquiries across chat, email, and voice channels. Gartner projects that conversational AI will reduce contact center agent labor costs by $80 billion globally by 2026 [2].

Predictive maintenance — using sensor data and historical failure patterns to predict equipment failures before they occur, reducing unplanned downtime in manufacturing, utilities, and infrastructure. McKinsey analysis suggests predictive maintenance can reduce downtime by 20 to 50% and reduce maintenance costs by 10 to 40%.

Developer productivity — AI-assisted code generation, testing, and documentation. GitHub’s research on Copilot found that developers using the tool completed coding tasks 55% faster, with improvements concentrated in repetitive and boilerplate-heavy tasks.

Productivity Gain: The Compounding Middle Layer

Productivity improvements don’t appear as discrete line items on the income statement, but they accumulate into material margin expansion over time. When knowledge workers spend measurably less time on information retrieval, report generation, meeting summarization, and first-draft creation, that reclaimed capacity flows toward higher-value activities — or reduces the headcount growth required to scale the business.

Microsoft’s 2023 Work Trend Index, covering organizations using Copilot-integrated productivity tools, found that users reported completing tasks 29% faster and that 70% reported meaningfully reduced cognitive effort on information-intensive tasks. At enterprise scale, with thousands of knowledge workers each reclaiming hours per week, those efficiency gains represent real structural margin improvement, even when they’re not captured in a single budget line.

The measurement discipline matters here. CTOs and their CFO counterparts should agree in advance on how productivity gains will be measured and translated into financial value, whether through reduced backfill hiring, faster time-to-market for products, or higher revenue per employee metrics.
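One way to make that agreement explicit is to write the translation down as a formula that both the CTO and the CFO sign off on. The sketch below is an assumption about how such a formula might look, not a standard accounting method: every input, including the realization rate (the share of reclaimed hours that actually converts into financial value) and the 46 working weeks, is a hypothetical figure for illustration.

```python
def annual_productivity_value(
    workers: int,
    hours_saved_per_week: float,
    loaded_hourly_cost: float,
    realization_rate: float,
    weeks_per_year: int = 46,  # assumed working weeks after leave and holidays
) -> float:
    """Estimated annual financial value of reclaimed time.

    realization_rate is the fraction of saved hours that turns into
    avoided hiring, faster delivery, or other measurable value.
    """
    hours = workers * hours_saved_per_week * weeks_per_year
    return hours * loaded_hourly_cost * realization_rate


# Hypothetical inputs: 2,000 workers each saving 2 hours per week at a
# loaded cost of $60 per hour, with 30% of the saved time realized.
value = annual_productivity_value(
    workers=2000,
    hours_saved_per_week=2.0,
    loaded_hourly_cost=60.0,
    realization_rate=0.30,
)
print(f"${value:,.0f}")  # about $3.3 million per year
```

The realization rate is the number most worth debating in advance: setting it to 1.0 assumes every saved hour becomes value, which is rarely defensible in front of a finance team.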

New Revenue Streams: The Highest-Multiple Lever

The organizations that generate the most compelling AI-driven financial impact are not solely focused on cost reduction. They are building AI capabilities that create revenue opportunities that didn’t previously exist — or that allow existing revenue to be captured at margins that weren’t previously possible.

Examples of AI-enabled revenue creation at enterprise scale:

AI-powered personalization at scale enables retail, financial services, and media companies to deliver individualized experiences to millions of customers simultaneously, driving higher conversion rates, increased basket size, and improved customer lifetime value.

Data and analytics products sold as commercial services — where an organization’s proprietary AI capabilities, trained on unique operational data, become a revenue-generating offering for partners, customers, or other market participants.

AI-native product features that command premium pricing and create switching costs: embedded AI assistants, automated decision support tools, and intelligent workflow automation that competitors cannot quickly replicate.

Accenture’s Technology Vision 2024 research found that organizations in the top quartile of AI adoption generated revenue from new AI-enabled products and services at three times the rate of industry peers. This is where AI transforms from an efficiency tool into a strategic growth driver — the point where EBITDA impact becomes not just operational but competitive.

Section 6: The CTO Playbook — From AI Strategy to Monday Morning

The preceding sections have described the problem, the maturity model, the required architecture, and the financial framework. This section is about execution — the specific decisions and actions that separate CTOs who generate AI-driven business impact from those who generate AI-themed annual report commentary.

Decision 1: Build an AI Platform, Not a Portfolio of Point Tools

The most consequential architectural decision available to a CTO today is the choice between investing in a shared enterprise AI platform versus allowing each business unit to independently procure and operate its own AI tools.

The point-tool approach feels faster in the short term. Each team gets what they want, the vendors are happy, and there’s an appearance of rapid AI adoption. In practice, it produces exactly the fragmentation described in Section 1: incompatible data environments, duplicated costs, inconsistent governance, and no economies of scale in model development or deployment.

An enterprise AI platform consolidates the layers described in Section 3: a governed data layer that all AI applications can access, a model registry and deployment infrastructure that enables reuse and standardization, shared agent orchestration capabilities, and centralized monitoring and observability. Business teams interact with the platform through self-service interfaces that allow them to consume AI capabilities without requiring deep technical expertise.

This does not mean building everything from scratch. Hyperscaler platforms from AWS, Azure, and Google Cloud provide mature components for each layer. Open-source foundations like Hugging Face, LangChain, and Ray are increasingly enterprise-grade. The CTO’s responsibility is to make deliberate architectural decisions about what to build, what to adopt, and how to integrate — and to prevent the decision from being made by default through unchecked vendor proliferation.

Decision 2: Prioritize Three Enterprise Use Cases with Explicit P&L Accountability

Breadth is the enemy of impact. The fastest path to demonstrable EBITDA contribution from AI is going deep on a small number of high-value, high-readiness use cases — not hedging across a broad portfolio of incremental experiments.

Use case selection should be governed by a disciplined evaluation framework across four dimensions:

Business impact: What is the realistic, conservatively modeled EBITDA contribution over 18 months? Cost savings or revenue gain? At what probability of achievement?

Data readiness: Is the data required to train, deploy, and monitor this use case actually available, accessible, and sufficiently clean? If the answer requires a multi-year data infrastructure project, the use case is not ready.

Organizational readiness: Is there a business owner — someone with P&L accountability and genuine organizational commitment — who will champion this use case through deployment and adoption? Technical readiness without organizational readiness is a recipe for a very sophisticated pilot.

Technical feasibility: Is this a problem that has been demonstrably solved elsewhere in the industry? Is the approach proven, or are you pushing the research frontier? Enterprise AI strategy should generally prioritize applying proven approaches to new domains over conducting fundamental research.

Select three. Build them to production quality. Measure their contribution against the baseline with the same rigor applied to any capital investment. Then expand horizontally from a position of demonstrated capability.
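The four-dimension evaluation can be turned into a simple, auditable shortlist. The sketch below is one possible scoring scheme under stated assumptions: the 1-to-5 scale, the readiness floor of 3, the unweighted sum, and the candidate use cases with their scores are all hypothetical and would need to be calibrated to the organization.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    name: str
    business_impact: int           # each dimension scored 1 (low) to 5 (high)
    data_readiness: int
    organizational_readiness: int
    technical_feasibility: int

    def score(self) -> int:
        return (
            self.business_impact
            + self.data_readiness
            + self.organizational_readiness
            + self.technical_feasibility
        )

    def is_ready(self, floor: int = 3) -> bool:
        # A high impact score cannot compensate for a readiness gap.
        return min(
            self.data_readiness,
            self.organizational_readiness,
            self.technical_feasibility,
        ) >= floor


def shortlist(candidates: List[UseCase], limit: int = 3) -> List[UseCase]:
    """Drop use cases that are not ready, then rank by total score.

    Ties are broken in favor of higher business impact.
    """
    ready = [c for c in candidates if c.is_ready()]
    ranked = sorted(
        ready, key=lambda c: (c.score(), c.business_impact), reverse=True
    )
    return ranked[:limit]


candidates = [
    UseCase("Invoice processing", 4, 4, 5, 5),
    UseCase("Contact center triage", 5, 3, 4, 4),
    UseCase("Predictive maintenance", 4, 3, 3, 4),
    UseCase("Dynamic pricing", 5, 2, 3, 3),    # data is not ready
    UseCase("Meeting summaries", 2, 4, 3, 5),
]
top_three = shortlist(candidates)
print([c.name for c in top_three])
```

Treating readiness as a gate rather than as one more term in the sum reflects the point made above: a use case that depends on a multi-year data project is not ready, however large its modeled impact.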

Decision 3: Align Every AI Initiative with P&L Accountability

This is the organizational change that most consistently separates AI leaders from AI aspirants — and it is more cultural than technical.

Every AI initiative in production must have a business owner. Not a technical owner. A business owner: a Chief Revenue Officer, a VP of Operations, a CFO, a General Manager — someone whose performance metrics and compensation are tied to the business outcomes that the AI system is designed to deliver.

When business ownership is absent, the classic pattern plays out: the technology team builds and deploys the model, usage is lower than projected, benefits are hard to attribute, the initiative drifts to maintenance mode, and the platform investment is quietly written off as a “learning experience.”

When a business owner is genuinely accountable for outcomes, the dynamic inverts. Data access problems get solved with urgency. Change management becomes a priority, not an afterthought. Success metrics are defined in revenue and cost terms, not model accuracy scores. And when the system underperforms, there is a clear owner responsible for diagnosing and fixing it.

Structurally, this requires CTOs to actively partner with CFOs and business unit leaders to define AI ROI expectations in financial terms before deployment begins — not after. It requires establishing shared scorecards that track AI contribution to specific P&L lines. And it requires the organizational discipline to sunset AI initiatives that fail to deliver measurable value, rather than allowing them to persist indefinitely in a state of managed ambiguity.

Conclusion: The Execution Gap Is Now the Only Gap That Matters

The enterprise AI landscape has reached a genuine inflection point. The technology works. Foundation models have crossed the threshold from experimental to production-capable. Agent frameworks are maturing rapidly. The tooling ecosystem for MLOps, governance, and observability has never been more accessible.

What separates the 10% of enterprises that will convert AI investment into durable EBITDA impact from the 90% that will accumulate impressive pilot portfolios is not access to better technology. It is execution discipline — the organizational commitment, architectural rigor, and operational investment required to move from experimentation to scaled impact.

The CTOs who will define the next decade of enterprise competitiveness are those who resist the temptation of pilot proliferation in favor of platform investment. Those who tie AI accountability to P&L outcomes rather than technology showcase metrics. Those who build governance into the architecture rather than treating it as a compliance afterthought. And those who understand that the operational work of keeping AI systems performing reliably in production is as important as the intellectual work of designing them.

The window for building a durable AI platform advantage is open today. Foundation model capabilities are commoditizing quickly, and as they do, the sustainable competitive advantage will shift entirely to execution: the organizations that can deploy, operate, improve, and scale AI systems faster and more reliably than their competitors.

There will be enterprises that look back on 2025 as the year they built the platform that powered the next decade of growth. And there will be enterprises still reviewing their fourteenth pilot update.

The question every CTO needs to answer right now is not “Are we investing in AI?” It is “Are we building the capability to turn AI investment into income statement impact — and do we have the organizational architecture to sustain that capability over time?”

That question is harder than any technology decision. It is also more important than any of them.

NStarX partners with enterprise technology leaders to architect, deploy, and operationalize AI systems that generate measurable business outcomes. From AI platform strategy to production deployment and MLOps, our team brings the cross-functional expertise to move organizations from pilot purgatory to EBITDA contribution. To start a conversation, visit us at dev-wp.nstarxinc.com/.

References

- McKinsey & Company. (2023). The State of AI in 2023: Generative AI’s Breakout Year. McKinsey Global Institute. Available at: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2023-generative-ais-breakout-year

- Gartner. (2022). Gartner Identifies Key Barriers to AI Deployment and Ways to Overcome Them. Gartner Research. Available at: https://www.gartner.com/en/newsroom/press-releases/2022-08-22-gartner-identifies-key-barriers-to-ai-deployment

- Ransbotham, S., Khodabandeh, S., Fehling, R., LaFountain, B., & Kiron, D. (2019). Winning With AI: Pioneers Combine Strategy, Organizational Behavior, and Technology. MIT Sloan Management Review and Boston Consulting Group. Available at: https://sloanreview.mit.edu/projects/winning-with-ai/

- RAND Corporation. (2023). Measuring AI Deployment and Organizational Readiness in Enterprise Environments. RAND Science and Technology Policy Institute.

- IBM Institute for Business Value. (2023). Global AI Adoption Index 2023. IBM Corporation. Available at: https://www.ibm.com/thought-leadership/institute-business-value/en-us/report/ai-adoption-index

- Iansiti, M., & Lakhani, K. R. (2020). Competing in the Age of AI: Strategy and Leadership When Algorithms and Networks Run the World. Harvard Business Review Press.

- Accenture. (2024). Technology Vision 2024: Human by Design — How AI Unleashes the Next Level of Human Potential. Accenture Research. Available at: https://www.accenture.com/us-en/insights/technology/technology-trends-2024

- Zaharia, M., Ghodsi, A., & Xin, R. (2021). Lakehouse: A New Generation of Open Platforms that Unify Data Warehousing and Advanced Analytics. Proceedings of CIDR 2021. Available at: https://www.cidrdb.org/cidr2021/papers/cidr2021_paper17.pdf

- Anthropic. (2024). Building Effective Agents. Anthropic Research. Available at: https://www.anthropic.com/research/building-effective-agents

- European Parliament and Council of the European Union. (2024). Regulation (EU) 2024/1689 — Artificial Intelligence Act. Official Journal of the European Union. Available at: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- National Institute of Standards and Technology (NIST). (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). U.S. Department of Commerce. Available at: https://airc.nist.gov/RMF

- Zaharia, M., Chen, A., Davidson, A., et al. (2018). Accelerating the Machine Learning Lifecycle with MLflow. IEEE Data Engineering Bulletin, 41(4), 39–45.

- Weights & Biases. (2024). The State of LLM Fine-Tuning 2024: Benchmarks, Practices, and Infrastructure. Available at: https://wandb.ai/site/research

- Rabanser, S., Günnemann, S., & Lipton, Z. C. (2019). Failing Loudly: An Empirical Study of Methods for Detecting Dataset Shift. Advances in Neural Information Processing Systems (NeurIPS), 32.

- Rebedea, T., Dinu, R., Sreedhar, M., Xiong, C., & Park, J. (2023). NeMo Guardrails: A Toolkit for Controllable and Safe LLM Applications with Programmable Rails. Available at: https://arxiv.org/abs/2310.10501

- Amershi, S., Weld, D., Vorvoreanu, M., et al. (2019). Software Engineering for Machine Learning: A Case Study. Proceedings of the 41st International Conference on Software Engineering (ICSE).

- Deloitte Insights. (2023). Intelligent Automation: Redefining the Enterprise Back Office. Available at: https://www2.deloitte.com/us/en/insights/focus/technology-and-the-future-of-work/intelligent-automation-enterprise-back-office.html

- McKinsey & Company. (2022). Capturing the True Value of Industry 4.0: Predictive Maintenance and Beyond. Available at: https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/capturing-the-true-value-of-industry-four-point-zero

- Peng, S., Kalliamvakou, E., Cihon, P., & Demirer, M. (2023). The Impact of AI on Developer Productivity: Evidence from GitHub Copilot. Available at: https://arxiv.org/abs/2302.06590

- Microsoft. (2023). 2023 Work Trend Index Annual Report: Will AI Fix Work? Available at: https://www.microsoft.com/en-us/worklab/work-trend-index/will-ai-fix-work