Enterprise AI

Innovate Faster, Operate Smarter

At NStarX Inc., we deliver Enterprise AI services to drive innovation, efficiency, and growth. Our expertise spans MLOps, AI Engineering, Data Science, and Advisory, enabling seamless AI adoption, scalable platforms, actionable insights, and tailored strategies. We empower businesses to integrate AI for transformative and sustainable outcomes.

Explore Our Comprehensive Range of Services

Explore how we can drive your enterprise forward with tailored AI solutions that deliver measurable results.

- Advisory

- Data Engineering

- Federated Learning & Distributed AI

- AI Platform

- Data Science

Advisory Services

NStarX Inc. provides comprehensive Enterprise AI Advisory Services designed to empower organizations to adopt AI effectively, develop robust production architectures, and execute Proof of Concepts (POCs) or Minimum Viable Products (MVPs). Our advisory services are tailored to meet the unique needs of ISVs, healthcare providers, media companies, and investor communities (VCs and PE firms).

Key Advisory Services

Discovery Workshops

Uncovering the Path to AI-Native Enterprise

Purpose: Systematically assess organizational readiness and identify high-impact opportunities for Generative AI adoption that align with strategic business objectives and deliver measurable ROI.

Critical Discovery Elements

Key Deliverables

AI Opportunity Assessment Report

(20-30 pages)

- Executive summary with key findings

- Current state analysis across 8 dimensions

- AI readiness scorecard

- Identified use case portfolio (15-25 use cases)

- Prioritization matrix with ROI estimates

Use Case Catalog

(Structured Database)

- Detailed description of each use case

- Business value proposition

- Preliminary ROI calculations

- Feasibility assessment

- Resource requirements

- Risk factors

Stakeholder Alignment Presentation

- Key findings and recommendations

- Priority use cases with business cases

- Proposed next steps

- Investment requirements

Engagement Model

Duration

Team Composition

- 1 Senior AI Strategy Consultant (Lead)

- 1 Technical Architect

- 1 Business Analyst

- Domain experts as needed

Client Involvement

- Executive sponsor (5-10 hours)

- Functional leaders (10-15 hours each)

- Technical teams (15-20 hours)

- End users (5-10 hours)

Delivery Approach

Week 1

Week 2

Week 3

Generative AI Feasibility Analysis

From Ideas to Investable Opportunities

Purpose: Conduct rigorous technical and business analysis of prioritized use cases to determine viability, quantify ROI, and de-risk AI investments through data-driven decision frameworks.

Critical Feasibility Elements

Key Deliverables

Feasibility Study Report

(Per Use Case, 30-50 pages each)

- Executive summary with go/no-go recommendation

- Detailed technical feasibility analysis

- Data readiness assessment

- Comprehensive ROI model with assumptions

- Implementation complexity matrix

- Risk register with mitigation strategies

- Resource and timeline estimates

Business Case Documents

(Per Use Case)

- Problem statement and opportunity

- Proposed solution approach

- Financial model (5-year projection)

- Implementation roadmap

- Success metrics and KPIs

- Investment request

Technical Architecture Blueprints

- High-level solution architecture

- Data flow diagrams

- Integration patterns

- Technology stack recommendations

- Security and compliance considerations

Stakeholder Alignment Presentation

- Key findings and recommendations

- Priority use cases with business cases

- Proposed next steps

- Investment requirements

Engagement Model

Duration

Team Composition

- 1 Senior AI Strategy Consultant

- 1 Solution Architect

- 1 Data Engineer

- 1 Business Analyst/Financial Modeler

- Domain experts as needed

Client Involvement

- Executive sponsor (10-15 hours)

- Business owners (20-30 hours per use case)

- Technical teams (30-40 hours)

- Finance team (10-15 hours)

Delivery Approach

Weeks 1-2

Weeks 3-4

Weeks 5-6

Architectural Evaluations

Building the Foundation for AI-Native Operations

Purpose: Design secure, scalable, and future-proof technical architecture that enables seamless Generative AI adoption while integrating with existing enterprise systems and ensuring compliance with governance requirements.

Critical Discovery Elements

Key Deliverables

Enterprise AI Architecture Blueprint (60-80 pages)

- Current state architecture documentation

- Target state architecture with NStarX Unified Platform

- Gap analysis and transformation roadmap

- Component specifications and technology selections

- Network and security architecture

- Cost model and sizing recommendations

Platform Integration Guide

- Integration patterns and best practices

- API specifications and contracts

- Data flow diagrams

- Authentication and authorization framework

- Error handling and resilience patterns

- Performance optimization guidelines

Security & Governance Framework

- Security architecture and controls

- Data governance policies and procedures

- Compliance mapping (GDPR, HIPAA, etc.)

- Responsible AI guidelines

- Audit and monitoring requirements

- Incident response procedures

Infrastructure as Code (IaC) Templates

- Terraform/CloudFormation templates

- Kubernetes manifests

- CI/CD pipeline configurations

- Monitoring and alerting setup

- Disaster recovery procedures

Technology Evaluation Matrix

- Component comparison and recommendations

- Build vs. buy analysis

- Vendor evaluation criteria

- Cost-benefit analysis

- Risk assessment

Engagement Model

Duration

Team Composition

- 1 Enterprise Architect (Lead)

- 1 AI/ML Solutions Architect

- 1 Data Architect

- 1 Security Architect

- 1 DevOps/Platform Engineer

- 1 Cloud Infrastructure Specialist

Client Involvement

- CTO/Engineering leadership (15-20 hours)

- Architecture team (40-60 hours)

- Security team (20-30 hours)

- Infrastructure team (30-40 hours)

- Compliance team (10-15 hours)

Delivery Approach

Weeks 1-2

Weeks 3-4

Weeks 5-6

Weeks 7-8

Roadmap Development

Strategic Planning for AI-First Transformation

Purpose: Create a comprehensive, phased implementation plan that balances quick wins with transformational initiatives, aligns AI investments with business priorities, and provides clear milestones for measuring progress and ROI realization.

Critical Discovery Elements

Key Deliverables

Strategic AI Roadmap

(40-60 pages)

- Executive summary and vision

- Strategic objectives and success criteria

- Phased implementation plan (Horizons 1-3)

- Initiative portfolio with timelines

- Resource and budget allocation

- Risk mitigation strategies

- Governance framework

Detailed Implementation Plans

(Per Initiative)

- Project charter and objectives

- Scope and deliverables

- Work breakdown structure

- Timeline with milestones

- Resource plan

- Budget (detailed)

- Risk register

- Success metrics


Financial Model & Business Case

- Total investment required (36-month view)

- Phased ROI realization

- Cash flow projections

- NPV and payback period

- Sensitivity analysis

- Funding recommendations
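As a rough illustration of how the NPV and payback period items above are typically computed, here is a minimal Python sketch. The cash-flow figures, the 10% discount rate, the annual period length, and the function names `npv` and `payback_period` are all hypothetical; they are not taken from any NStarX model, and a real business case would use the client's own assumptions.

```python
from typing import Optional, Sequence


def npv(rate: float, cash_flows: Sequence[float]) -> float:
    """Net present value; cash_flows[0] occurs at t=0 and is not discounted."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))


def payback_period(cash_flows: Sequence[float]) -> Optional[float]:
    """Periods until cumulative (undiscounted) cash flow reaches zero.

    Interpolates linearly inside the period where the balance turns
    non-negative. Returns None if the investment is never recovered.
    """
    cumulative = 0.0
    for t, cf in enumerate(cash_flows):
        previous = cumulative
        cumulative += cf
        if cumulative >= 0:
            if t == 0:
                return 0.0
            # Fraction of period t needed to cover the remaining deficit.
            return (t - 1) + (-previous / cf)
    return None


# Hypothetical example: 1,000 invested up front, 400 returned per year.
flows = [-1000.0, 400.0, 400.0, 400.0]
project_npv = npv(0.10, flows)
project_payback = payback_period(flows)  # 2.5 years

# A simple sensitivity analysis: recompute NPV under different discount rates.
sensitivity = {rate: round(npv(rate, flows), 2) for rate in (0.05, 0.10, 0.15)}

print(f"NPV at 10%: {project_npv:.2f}")
print(f"Payback period: {project_payback} years")
print(f"NPV by discount rate: {sensitivity}")
```

In this made-up case the investment pays back in 2.5 years yet has a slightly negative NPV at a 10% discount rate, which is one reason a financial model usually reports both figures alongside a sensitivity analysis.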

Measurement & KPI Framework

- North star metrics

- Leading and lagging indicators

- KPI trees by initiative

- Dashboard mockups

- Reporting cadence

- Continuous improvement process

Change Management & Adoption Plan

- Stakeholder analysis and engagement plan

- Communication strategy

- Training and enablement roadmap

- Adoption metrics

- Support model

- Risk mitigation for organizational change

Governance Charter

- Governance structure and roles

- Decision-making framework (RACI)

- Meeting cadence and agendas

- Escalation procedures

- Reporting requirements

- Policy and standards

Engagement Model

Duration

Team Composition

- 1 Program Director / Strategy Lead

- 1 AI Strategy Consultant

- 1 Technical Architect

- 1 Financial Analyst

- 1 Change Management Consultant

- Domain experts as needed

Client Involvement

- Executive sponsor (20-30 hours)

- Cross-functional leaders (30-40 hours each)

- Finance team (20-25 hours)

- PMO team (30-40 hours)

Delivery Approach

Weeks 1-2

Weeks 3-4

Weeks 5-6

Weeks 7-8

Rapid Prototyping

Validating AI Concepts with Tangible POCs

Purpose: Build working prototypes to validate technical feasibility, demonstrate business value, and de-risk full-scale implementation through rapid iteration and stakeholder feedback.

Critical Discovery Elements

Key Deliverables

Working Prototype

- Functional demonstration environment

- Sample data and test cases

- User interface (if applicable)

- Documentation and code repository

- Demo videos and walkthrough guides

Prototype Evaluation Report

(20-30 pages)

- Executive summary with recommendation

- Hypothesis validation results

- Technical findings and learnings

- Performance metrics and benchmarks

- User feedback summary

- Comparison vs. success criteria

- Risks and challenges identified

Production Roadmap

- Gap analysis (prototype to production)

- Technical requirements for production

- Architecture recommendations

- Timeline and resource estimates

- Investment requirements

- Risk mitigation strategies

Business Case Update

- Validated ROI model

- Updated cost estimates

- Refined benefit projections

- Implementation recommendations

- Go/no-go recommendation

Technical Documentation

- Architecture diagrams

- Data flow documentation

- API specifications

- Model documentation

- Deployment guide

- Testing documentation

Knowledge Transfer Package

- Technical walkthrough sessions

- Code documentation

- Configuration guides

- Troubleshooting guides

- Best practices and lessons learned

Engagement Model

Duration

Team Composition

- 1 Technical Lead/Solutions Architect

- 2-3 AI/ML Engineers

- 1 Data Engineer

- 1 UX/UI Designer (if needed)

- 1 Business Analyst

- Domain experts as needed

Client Involvement

- Business owner (15-20 hours)

- Subject matter experts (20-30 hours)

- Technical team (30-40 hours)

- End users for testing (10-15 hours)

Delivery Approach

Week 1

Weeks 2-5

(2-week sprints)

Week 6

Weeks 7-8

Leveraging NStarX Unified Platform

- Pre-configured Kubeflow environment

- Access to foundation models (GPT, Mistral, Llama)

- Vector database infrastructure (Milvus, Pinecone)

- MLflow for experiment tracking

- Kubernetes for orchestration

Integrated Engagement Model: Advisory to Execution

Phase 1: Foundation

(Months 1-3)

- Discovery Workshops → Use case identification

- Feasibility Analysis → Validated opportunities

- Architectural Evaluation → Platform strategy

Investment: $250K - $400K

Outcome: Clear direction, validated use cases, platform blueprint

Phase 2: Validation

(Months 3-6)

- Roadmap Development → Implementation plan

- Rapid Prototyping → Technical validation (2-3 prototypes)

- Platform deployment (NStarX Unified Platform)

Investment: $350K - $600K

Outcome: Proven concepts, operational platform, committed roadmap

Phase 3: Scale

(Months 6-18)

- Full-scale implementation of priority use cases

- Platform expansion and optimization

- Team capability building

- Change management and adoption

Investment: Varies by scope ($1M - $5M+)

Outcome: Production AI solutions, measurable ROI, organizational transformation

Why NStarX Advisory Services Drive AI-First Success

ROI-First Methodology

End-to-End Expertise

Proven Platform Approach

De-Risked Transformation

Sustainable Transformation

Data Engineering Services

NStarX Inc. offers robust Data Engineering Services tailored to help enterprises in healthcare, media, ISVs, and investor communities (PEs and VCs) build reliable, scalable, and high-performance Enterprise AI applications. Our services focus on enabling seamless data management, integration, and preparation to fuel AI-driven business outcomes.

Key Data Engineering Services

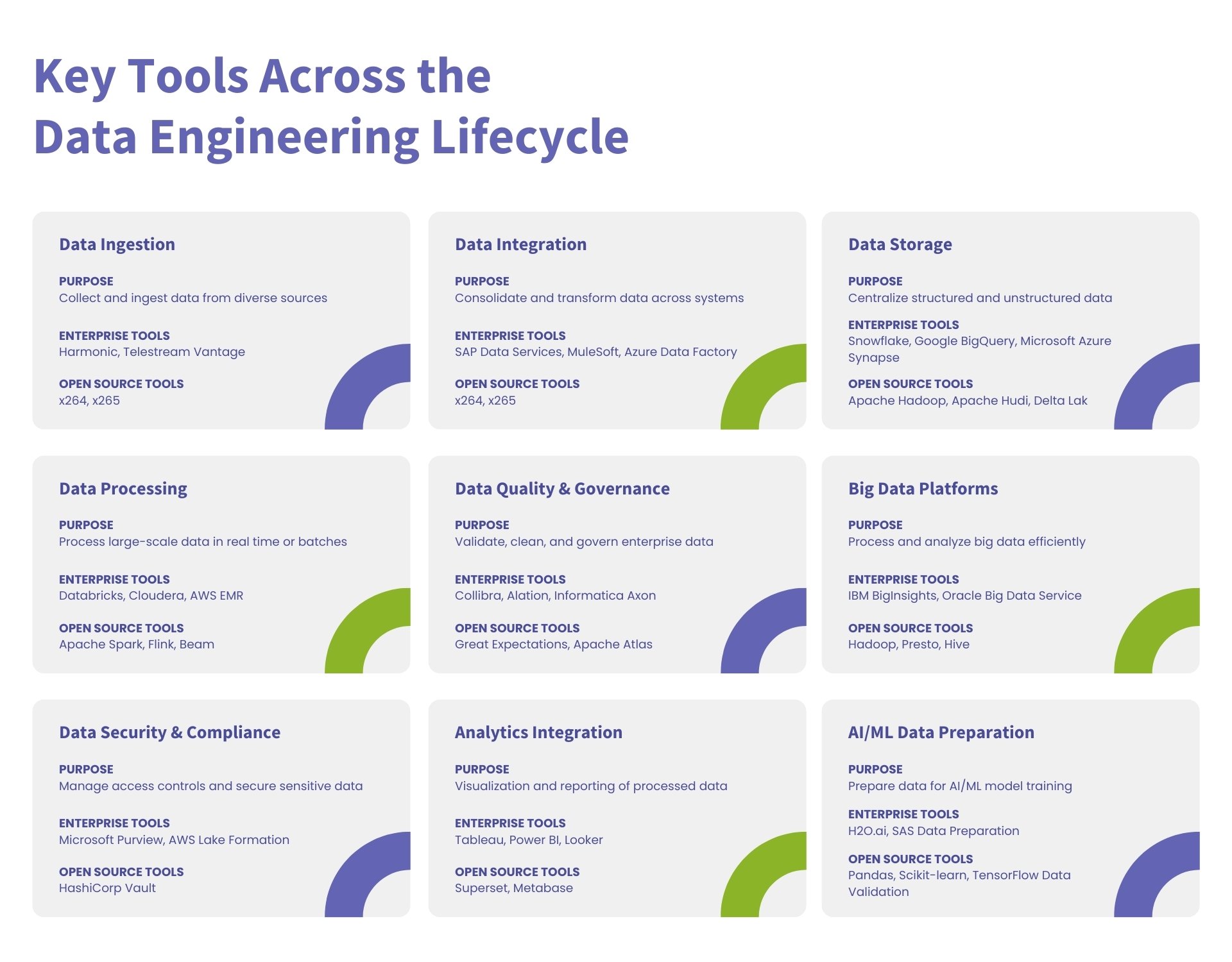

Key Tools Across the Data Engineering Lifecycle

| Lifecycle Stage | Enterprise Tools | Open Source Tools | Purpose |
|---|---|---|---|
| Data Ingestion | Informatica, Talend, Fivetran, AWS Glue | Apache NiFi, Logstash, Flume | Collect and ingest data from diverse sources |
| Data Integration | SAP Data Services, MuleSoft, Azure Data Factory | Apache Kafka, Airbyte, dbt | Consolidate and transform data across systems |
| Data Storage | Snowflake, Google BigQuery, Microsoft Azure Synapse | Apache Hadoop, Apache Hudi, Delta Lake | Centralize structured and unstructured data |
| Data Processing | Databricks, Cloudera, AWS EMR | Apache Spark, Flink, Beam | Process large-scale data in real time or batches |
| Data Quality and Governance | Collibra, Alation, Informatica Axon | Great Expectations, Apache Atlas | Validate, clean, and govern enterprise data |
| Big Data Platforms | IBM BigInsights, Oracle Big Data Service | Hadoop, Presto, Hive | Process and analyze big data efficiently |
| Data Security and Compliance | Microsoft Purview, AWS Lake Formation | HashiCorp Vault | Manage access controls and secure sensitive data |
| Analytics Integration | Tableau, Power BI, Looker | Superset, Metabase | Visualization and reporting of processed data |
| AI/ML Data Preparation | H2O.ai, SAS Data Preparation | Pandas, Scikit-learn, TensorFlow Data Validation | Prepare data for AI/ML model training |

Why Choose NStarX Data Engineering Services?

Domain Expertise

AI-Driven Focus

Scalable Solutions

Security-First Approach

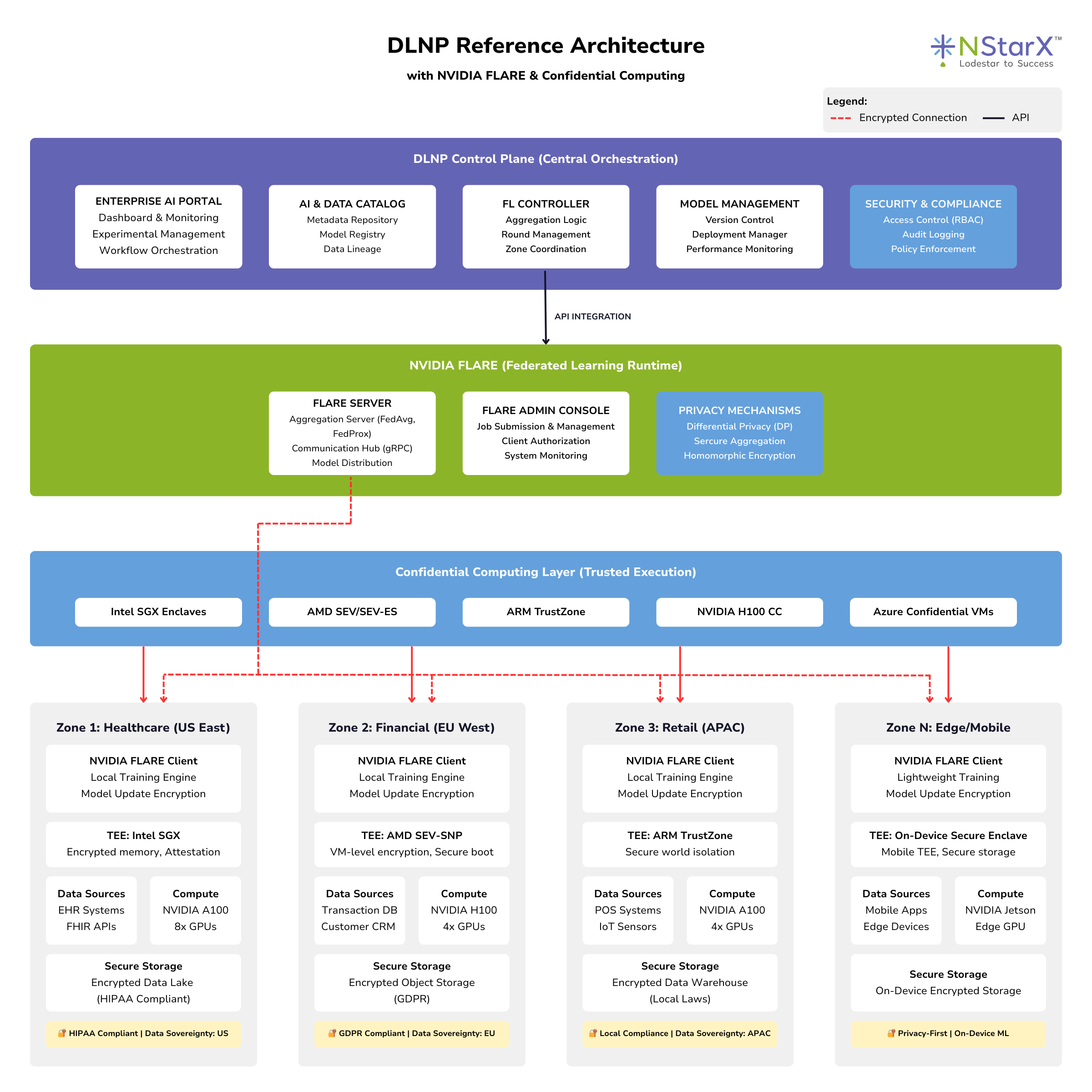

Federated Learning and Distributed AI Services

NStarX Inc. delivers an open-source-based Distributed AI framework designed to address the challenges of data sovereignty and residency, enabling enterprises to build compliant, secure, and scalable AI solutions. With our focus on Federated Learning, we help organizations in regulated and data-sensitive sectors, including healthcare, media, ISVs, and investor communities (PEs and VCs), thrive in environments constrained by data localization laws.

The Federated Learning Advantage

Traditional AI requires centralizing data, but federated learning brings the model to the data:

100%

10-100x

Zero compliance violations

80%

Data Sovereignty

Privacy-Preserving

Scalable Architecture

Enterprise Grade

Use Cases for Federated Learning

Healthcare

Financial Services

Telecommunications

Retail

Manufacturing

Key Federated Learning Services

AI and Data Catalog

A centralized yet compliant repository for metadata, datasets, and AI models across distributed zones.

Critical Components of the Catalog System

Key Deliverables

Technology Stack

| Component | Technologies | Purpose |
|---|---|---|
| Catalog Backend | Apache Atlas, DataHub, Amundsen | Metadata management and lineage |
| Search Engine | Elasticsearch, Apache Solr | Fast full-text search |
| API Layer | GraphQL, REST APIs | Programmatic access to catalog |
| UI Framework | React, Vue.js | User-friendly web interface |

Engagement Model

Duration

Team Composition

Success Metrics

Federated Learning Controllers

Orchestrates model training across distributed data zones without moving sensitive data.
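To make "training without moving data" concrete, here is a minimal pure-Python sketch of one common aggregation scheme, federated averaging (FedAvg). The source does not say which algorithm the controllers use, so FedAvg is an assumption, as are the function names, the toy one-variable linear model, the learning rate, and the sample data. A production controller would more likely build on a framework such as Flower or TensorFlow Federated.

```python
from typing import List, Sequence, Tuple

Weights = List[float]               # [slope, intercept] of y = w*x + b
Zone = Sequence[Tuple[float, float]]  # private (x, y) pairs held by one zone


def local_update(weights: Sequence[float], data: Zone,
                 lr: float = 0.05, epochs: int = 5) -> Weights:
    """Run a few gradient-descent steps on one zone's private data.

    Only the resulting weights leave the zone; the raw data never does.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x
            grad_b += 2 * err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return [w, b]


def federated_average(updates: Sequence[Weights],
                      sizes: Sequence[int]) -> Weights:
    """Average zone weights, weighting each zone by its sample count."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total
            for i in range(dim)]


def run_rounds(zones: Sequence[Zone], rounds: int) -> Weights:
    """Controller loop: broadcast weights, collect updates, aggregate."""
    global_weights: Weights = [0.0, 0.0]
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in zones]
        sizes = [len(data) for data in zones]
        global_weights = federated_average(updates, sizes)
    return global_weights


# Two hypothetical data zones whose samples follow y = 2x + 1.
zones = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]
final_w, final_b = run_rounds(zones, rounds=300)
print(f"learned slope={final_w:.3f}, intercept={final_b:.3f}")
```

The controller in this sketch only ever sees weight vectors and sample counts. Real deployments usually add secure aggregation or differential privacy on top, since model updates can still leak information about the underlying data.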

Critical Components of FL Controllers

Key Deliverables

Technology Stack

| Component | Technologies |
|---|---|
| FL Framework | TensorFlow Federated, PySyft, Flower, FATE |
| ML Frameworks | TensorFlow, PyTorch, JAX |
| Communication | gRPC, Apache Kafka, MQTT |
| Privacy Libraries | TensorFlow Privacy, Opacus, PySyft |
| Orchestration | Kubernetes, Docker, Apache Airflow |

Engagement Model

Duration

Team Composition

Success Metrics

Enterprise AI Portal

A single-pane interface for managing AI pipelines, training, and monitoring across distributed zones.

Critical Components of the Portal

Key Deliverables

Engagement Model

Duration

Team Composition

Success Metrics

Distributed Model Management

Lifecycle management for AI models across multiple zones, ensuring compliance and efficiency.

Critical Components of Model Management

Key Deliverables

Engagement Model

Duration

Team Composition

Success Metrics

Security and Compliance Framework

Security and privacy by design, ensuring adherence to global and local data protection regulations.

Critical Components of Security Framework

Key Deliverables

Engagement Model

Duration

Team Composition

Success Metrics

Why NStarX for Federated Learning?

Privacy-First

Compliance Expertise

Production-Ready

Open-Source Leadership

Domain Expertise

DLNP Integration

Case Studies: Federated Learning

AI Platform Services

NStarX Inc. specializes in building advanced AI platforms leveraging open-source technologies like Kubeflow and Kubernetes and enterprise-grade tools like Databricks. We deliver scalable, secure, and robust AI solutions tailored to ISVs, healthcare providers, media companies, and investor communities (PEs and VCs). Our expertise spans AI portal development, MLOps, ModelOps, DataOps, and comprehensive AI testing to meet enterprise-grade demands.

Key AI Platform Services

Why Choose NStarX for AI Platform Development?

Expertise in Scalable AI Platforms

Security-First Approach

End-to-End Services

Customization for Industry Needs

Accelerated Development

Key Benefits of Building an AI Platform with NStarX

Enhanced Scalability

Improved Reliability

Faster Time-to-Market

Cost Efficiency

Enterprise-Grade Security

Cross-Team Collaboration

Regulatory Compliance

Key Tools for Building AI Platforms

| Category | Enterprise Tools | Open Source Tools | Purpose |
|---|---|---|---|
| AI Portal | Power BI, Looker | Superset | Visualization and management of AI processes |
| MLOps | SageMaker, Databricks MLflow | Kubeflow, Airflow | Model lifecycle automation |
| ModelOps | Domino Data Lab, Azure AI | MLflow, TensorFlow Model Garden | Operationalize and scale AI models |
| DataOps | Informatica, Talend, AWS Glue | Apache NiFi, dbt, Great Expectations | Manage data pipelines and governance |
| AI Testing | H2O.ai, SAS | PyTest, TensorFlow Data Validation (TFDV) | Test AI models for robustness and fairness |
| Security and Compliance | Azure Policy, AWS Lake Formation | HashiCorp Vault, Open Policy Agent | Ensure compliance and secure data handling |
| Monitoring and Observability | Dynatrace, Datadog | Prometheus, Grafana | Monitor AI performance and metrics |
| Collaboration and Experimentation | Databricks Workspaces, JupyterHub | Jupyter Notebooks, Google Colab | Collaborative experimentation and testing |
| Scalability | Kubernetes, OpenShift | Docker, Helm | Container orchestration and scalability |

Data Science Services

NStarX Inc. offers cutting-edge Data Science services designed to help enterprises unlock the full potential of their data. Serving ISVs, healthcare providers, media companies, and investor communities (PEs and VCs), NStarX provides tailored solutions for data-driven decision-making and innovation.

Key Data Science Services

Enterprise and Open Source Tools for a Comprehensive Data Science Practice

| Category | Enterprise Tools | Open Source Tools | Purpose |
|---|---|---|---|
| Data Preparation | Alteryx, Informatica, Talend | Pandas, Apache Arrow, DataWrangler | Cleaning, transforming, and organizing data |
| Data Visualization | Tableau, Power BI, Looker | Matplotlib, Seaborn, Plotly, Superset | Creating dashboards and visual analytics |
| Machine Learning Frameworks | H2O.ai, SAS Viya | TensorFlow, PyTorch, Scikit-learn | Model training and optimization |
| NLP Tools | AWS Comprehend, Azure Text Analytics | spaCy, NLTK, Hugging Face Transformers | Text analysis and processing |
| Computer Vision | Google Vision AI, AWS Rekognition | OpenCV, Detectron2 | Image and video data processing |
| Data Storage and Management | Snowflake, Google BigQuery, Azure Synapse | Apache Hadoop, Delta Lake, MongoDB | Storing and managing structured and unstructured data |
| Model Deployment | SageMaker, Databricks MLflow | Kubeflow, BentoML | Deploying and managing ML models |
| Experiment Tracking | Domino Data Lab, Neptune.ai | MLflow, Weights & Biases | Tracking model experiments and iterations |
| Big Data Processing | Cloudera, Databricks | Apache Spark, Dask | Processing large-scale datasets |
| Collaboration | Confluence, JIRA, Slack | Jupyter Notebooks, Google Colab | Collaboration and project management |
| Security and Compliance | Microsoft Purview, AWS Macie | Open Policy Agent, HashiCorp Vault | Ensuring data security and compliance |

| Security and Compliance | Microsoft Purview, AWS Macie | Open Policy Agent, HashiCorp Vault | Ensuring data security and compliance |